Jeffrey C. Fried

Summarization of ICU Patient Motion from Multimodal Multiview Videos

Jun 28, 2017

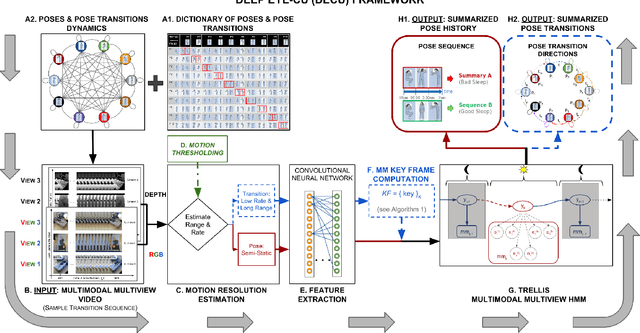

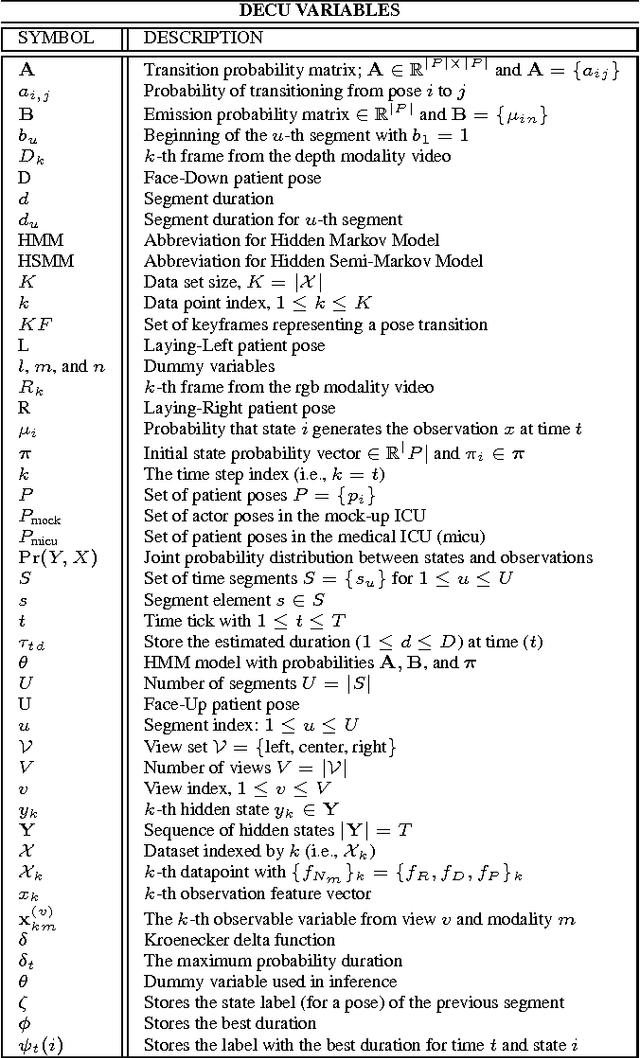

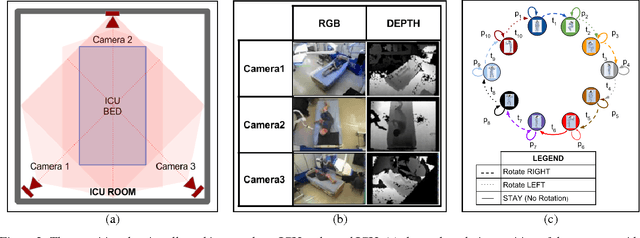

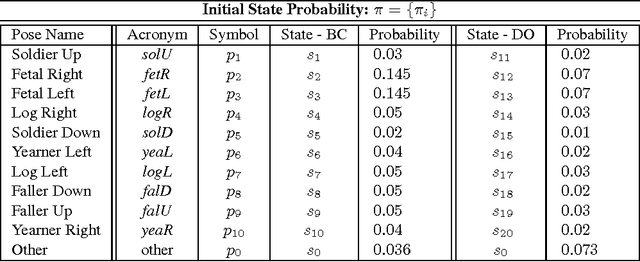

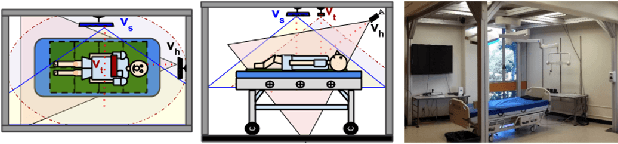

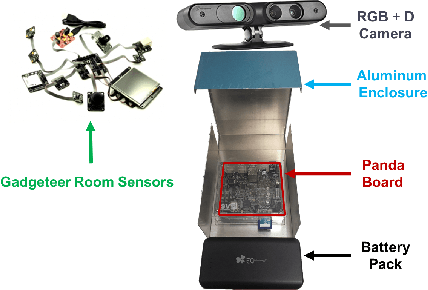

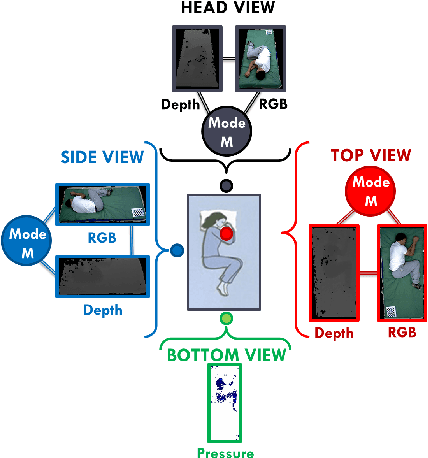

Abstract: Clinical observations indicate that during critical care in hospitals, patients' sleep positioning and motion affect recovery. Unfortunately, there is no formal medical protocol to record, quantify, and analyze patient motion. A small number of clinical studies use manual analysis of sleep poses and motion recordings to support the medical benefits of patient positioning and motion monitoring. Manual processes are not scalable, are prone to human error, and strain an already taxed healthcare workforce. This study introduces DECU (Deep Eye-CU): an autonomous multimodal multiview system that addresses these issues by monitoring healthcare environments and enabling the recording and analysis of patient sleep poses and motion. DECU uses three RGB-D cameras to monitor patient motion in a medical Intensive Care Unit (ICU). The algorithms in DECU estimate pose direction at different temporal resolutions and use keyframes to efficiently represent pose transition dynamics. DECU combines deep features computed from the data with a modified Hidden Markov Model to model sleep pose duration more flexibly, analyze pose patterns, and summarize patient motion. Extensive experimental results are presented. The performance of DECU is evaluated in ideal (BC: Bright and Clear/occlusion-free) and natural (DO: Dark and Occluded) scenarios at two motion resolutions in a mock-up ICU and a real ICU. The results indicate that deep features allow DECU to match the classification performance of engineered features in BC scenes and increase accuracy by up to 8% in DO scenes. In addition, the overall pose history summarization tracing accuracy shows an average detection rate of 85% in BC scenes and 76% in DO scenes. The proposed keyframe estimation algorithm allows DECU to reach an average transition classification accuracy of 78%.
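The abstract says only that DECU uses a modified Hidden Markov Model to model sleep pose duration more flexibly; it does not describe the modification. One common way to give an HMM explicit control over state durations is a hidden semi-Markov (explicit-duration) model, in which each pose segment draws its length from a per-pose duration distribution. The sketch below illustrates that general technique under this assumption and is not DECU's algorithm: `hsmm_viterbi` and all of its parameters are hypothetical, and the per-frame log-likelihoods stand in for scores that a classifier on deep features might produce.

```python
import math
from typing import List, Sequence, Tuple

NEG_INF = -math.inf


def hsmm_viterbi(
    log_emit: Sequence[Sequence[float]],   # T x K per-frame log-likelihood of each pose (hypothetical input)
    log_trans: Sequence[Sequence[float]],  # K x K log P(next pose | current pose), diagonal typically -inf
    log_init: Sequence[float],             # K log initial pose probabilities
    log_dur: Sequence[Sequence[float]],    # K x D log P(a pose segment lasts d + 1 frames)
) -> List[Tuple[int, int, int]]:
    """Explicit-duration (semi-Markov) Viterbi decoding.

    Returns the most likely list of (pose, start_frame, end_frame) segments,
    with end_frame exclusive.
    """
    T, K, D = len(log_emit), len(log_init), len(log_dur[0])
    # Prefix sums of emission log-likelihoods, so scoring a segment costs O(1).
    cum = [[0.0] * K]
    for row in log_emit:
        cum.append([c + x for c, x in zip(cum[-1], row)])
    # best[t][k]: best log score for frames [0, t) whose last segment is pose k.
    best = [[NEG_INF] * K for _ in range(T + 1)]
    back = [[(-1, 0)] * K for _ in range(T + 1)]  # (previous pose, segment length)
    for t in range(1, T + 1):
        for k in range(K):
            for d in range(1, min(D, t) + 1):
                s = t - d
                seg = cum[t][k] - cum[s][k] + log_dur[k][d - 1]
                if s == 0:
                    # First segment: start from the initial distribution.
                    prev, score = -1, log_init[k] + seg
                else:
                    # Best pose that ended at frame s and can transition to k.
                    prev = max(range(K), key=lambda j: best[s][j] + log_trans[j][k])
                    score = best[s][prev] + log_trans[prev][k] + seg
                if score > best[t][k]:
                    best[t][k] = score
                    back[t][k] = (prev, d)
    k = max(range(K), key=lambda j: best[T][j])
    if best[T][k] == NEG_INF:
        raise ValueError("no segmentation has non-zero probability")
    # Backtrack from the last frame to recover the segments.
    segments, t = [], T
    while t > 0:
        prev, d = back[t][k]
        segments.append((k, t - d, t))
        t, k = t - d, prev
    return segments[::-1]
```

Because each segment's length is scored by `log_dur` instead of by repeated self-transitions, a plain HMM's implicit geometric duration distribution is replaced by whatever per-pose duration distribution is supplied.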

Eye-CU: Sleep Pose Classification for Healthcare using Multimodal Multiview Data

Feb 22, 2016

Abstract: Manual analysis of the body poses of bed-ridden patients requires staff to continuously track and record patient poses. Two limitations in the dissemination of pose-related therapies are scarce human resources and unreliable automated systems. This work addresses these issues by introducing a new method and a new system for robust automated classification of sleep poses in an Intensive Care Unit (ICU) environment. The new method, coupled-constrained Least-Squares (cc-LS), uses multimodal and multiview (MM) data and finds the set of modality trust values that minimizes the difference between expected and estimated labels. The new system, Eye-CU, is an affordable, modular multi-sensor system for unobtrusive data collection and analysis in healthcare. Experimental results indicate that the performance of cc-LS matches that of existing methods in ideal scenarios. In challenging scenarios, cc-LS outperforms the latest techniques by 13% in scenes with poor illumination and by 70% in scenes with both poor illumination and occlusions. Results also show that a reduced Eye-CU configuration can classify poses without pressure information with only a slight drop in performance.
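The abstract describes cc-LS only at a high level: it finds the modality trust values that minimize the difference between expected and estimated labels. A minimal sketch of that idea, assuming (this is not stated in the abstract) that the estimated labels are a trust-weighted sum of per-modality pose scores and that the trust values are non-negative and sum to one, is a simplex-constrained least-squares fit solved by projected gradient descent. The functions `trust_weights` and `project_to_simplex` and the flattened data layout are hypothetical, and the coupling constraints of the actual cc-LS method are not reproduced here.

```python
from typing import List, Sequence


def project_to_simplex(v: Sequence[float]) -> List[float]:
    """Euclidean projection onto {w : w_i >= 0, sum(w) = 1} (sort-based method)."""
    u = sorted(v, reverse=True)
    css, theta = 0.0, 0.0
    for i, ui in enumerate(u, start=1):
        css += ui
        t = (css - 1.0) / i
        if ui - t > 0:
            theta = t  # the last index satisfying this condition fixes the shift
    return [max(x - theta, 0.0) for x in v]


def trust_weights(
    scores: Sequence[Sequence[float]],  # scores[m]: modality m's flattened (samples x poses) scores
    target: Sequence[float],            # flattened one-hot matrix of expected labels
    iters: int = 500,
) -> List[float]:
    """Hypothetical sketch: weights w on the simplex minimizing
    || sum_m w[m] * scores[m] - target ||^2 via projected gradient descent."""
    M = len(scores)
    w = [1.0 / M] * M
    # Step 1/L with L = 2 * trace(A^T A), an upper bound on the gradient's
    # Lipschitz constant 2 * lambda_max(A^T A); safe but conservative.
    L = 2.0 * sum(x * x for s in scores for x in s)
    if L == 0.0:
        return w
    for _ in range(iters):
        # Residual between estimated (weighted) scores and expected labels.
        r = [sum(w[m] * scores[m][i] for m in range(M)) - y for i, y in enumerate(target)]
        grad = [2.0 * sum(s_i * r_i for s_i, r_i in zip(scores[m], r)) for m in range(M)]
        w = project_to_simplex([w[m] - grad[m] / L for m in range(M)])
    return w
```

Under this formulation, a modality whose scores carry no information about the labels (for example, uniform scores across poses) tends to receive a trust value near zero, while the constraint keeps every trust value interpretable as a share of the combined vote.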
