Jean-Pascal Pfister

On gauge freedom, conservativity and intrinsic dimensionality estimation in diffusion models

Feb 06, 2024

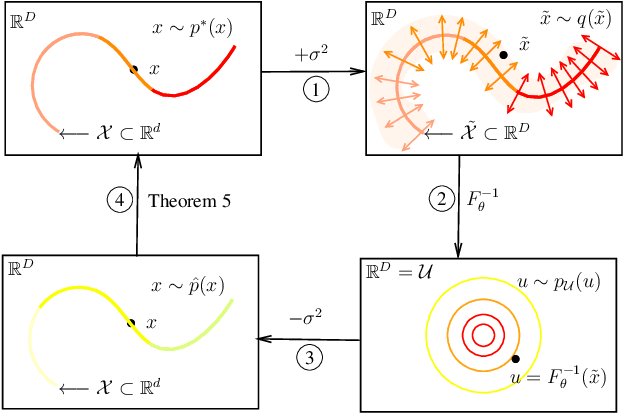

Abstract: Diffusion models are generative models that have recently demonstrated impressive performance in terms of sampling quality and density estimation in high dimensions. They rely on a forward continuous diffusion process and a backward continuous denoising process, which can be described by a time-dependent vector field and is used as a generative model. In the original formulation of the diffusion model, this vector field is assumed to be the score function (i.e. it is the gradient of the log-probability at a given time in the diffusion process). Curiously, on the practical side, most studies on diffusion models implement this vector field as a neural network function and do not constrain it to be the gradient of some energy function (that is, most studies do not constrain the vector field to be conservative). Although some studies have investigated empirically whether such a constraint leads to a performance gain, they reached contradictory results and did not provide analytical results. Here, we provide three analytical results regarding the extent of the modeling freedom of this vector field. Firstly, we propose a novel decomposition of vector fields into a conservative component and an orthogonal component which satisfies a given (gauge) freedom. Secondly, from this orthogonal decomposition, we show that exact density estimation and exact sampling are achieved when the conservative component is exactly equal to the true score, and therefore conservativity is neither necessary nor sufficient to obtain exact density estimation and exact sampling. Finally, we show that when it comes to inferring local information of the data manifold, constraining the vector field to be conservative is desirable.
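To make the notion of conservativity concrete, here is a minimal sketch (not taken from the paper) of a numerical check. It relies on the standard fact that a smooth vector field on a simply connected domain is conservative exactly when its Jacobian is symmetric. The function names `numerical_jacobian`, `asymmetry`, `gaussian_score` and `rotated_field` are hypothetical and chosen for illustration only; the second field simply adds a rotation to the score of a standard normal and is not the decomposition proposed in the paper.

```python
import numpy as np


def numerical_jacobian(field, x, eps=1e-5):
    """Central finite-difference Jacobian of `field` at point `x`."""
    x = np.asarray(x, dtype=float)
    d = x.size
    jac = np.zeros((d, d))
    for j in range(d):
        step = np.zeros(d)
        step[j] = eps
        jac[:, j] = (field(x + step) - field(x - step)) / (2.0 * eps)
    return jac


def asymmetry(field, x, eps=1e-5):
    """Frobenius norm of J - J^T; zero for a conservative field."""
    jac = numerical_jacobian(field, x, eps)
    return np.linalg.norm(jac - jac.T)


def gaussian_score(x):
    # Score of a standard normal: gradient of log p(x) = -||x||^2 / 2 + const.
    return -np.asarray(x, dtype=float)


def rotated_field(x):
    # Illustrative non-conservative field: the score plus a 2D rotation.
    x = np.asarray(x, dtype=float)
    rotation = np.array([-x[1], x[0]])
    return -x + rotation


point = np.array([0.3, -1.2])
print("score asymmetry:  ", asymmetry(gaussian_score, point))
print("rotated asymmetry:", asymmetry(rotated_field, point))
```

The first field is a gradient and gives an asymmetry of numerically zero, while the second has an antisymmetric Jacobian part and is therefore not the gradient of any energy function.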

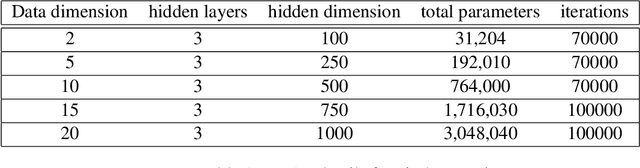

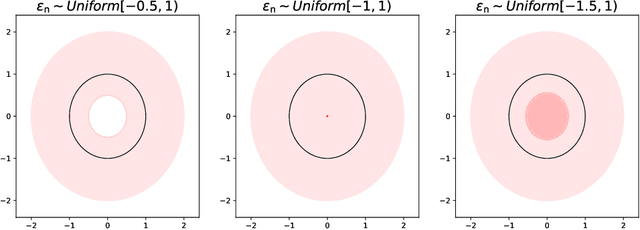

Density estimation on low-dimensional manifolds: an inflation-deflation approach

Jun 02, 2021

Abstract: Normalizing Flows (NFs) are universal density estimators based on neural networks. However, this universality is limited: the density's support needs to be diffeomorphic to a Euclidean space. In this paper, we propose a novel method to overcome this limitation without sacrificing universality. The proposed method inflates the data manifold by adding noise in the normal space, trains an NF on this inflated manifold, and, finally, deflates the learned density. Our main result provides sufficient conditions on the manifold and the specific choice of noise under which the corresponding estimator is exact. Our method has the same computational complexity as NFs and does not require computing an inverse flow. We also show that, if the embedding dimension is much larger than the manifold dimension, noise in the normal space can be well approximated by Gaussian noise. This allows our method to be used to approximate arbitrary densities on non-flat manifolds, provided that the manifold dimension is known.
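As a rough illustration of the inflation step only, the sketch below adds noise along the normal direction of a toy manifold, the unit circle in the plane. Everything here is an assumption made for illustration: the function name `inflate_circle`, the choice of Gaussian noise, and the uniform density on the circle. The NF training and the deflation step described in the abstract are not shown.

```python
import numpy as np


def inflate_circle(n_samples, noise_std, seed=0):
    """Sample the unit circle and perturb each point along its normal.

    Returns the on-manifold points and their inflated counterparts,
    both with shape (n_samples, 2).
    """
    rng = np.random.default_rng(seed)
    theta = rng.uniform(0.0, 2.0 * np.pi, size=n_samples)
    on_manifold = np.stack([np.cos(theta), np.sin(theta)], axis=1)
    # On the unit circle the outward unit normal equals the point itself.
    normals = on_manifold
    # One scalar offset per sample (Gaussian noise is an assumption here).
    offsets = rng.normal(0.0, noise_std, size=(n_samples, 1))
    inflated = on_manifold + offsets * normals
    return on_manifold, inflated


points, inflated = inflate_circle(n_samples=1000, noise_std=0.05)
radii = np.linalg.norm(inflated, axis=1)
print("radius spread after inflation:", radii.std())
```

Because the perturbation has no tangential component, the inflated samples fill a thin annulus around the circle, which gives the flow a full-dimensional support to fit.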

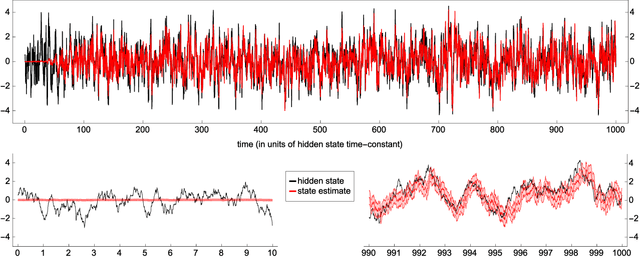

Online Maximum Likelihood Estimation of the Parameters of Partially Observed Diffusion Processes

Oct 15, 2018

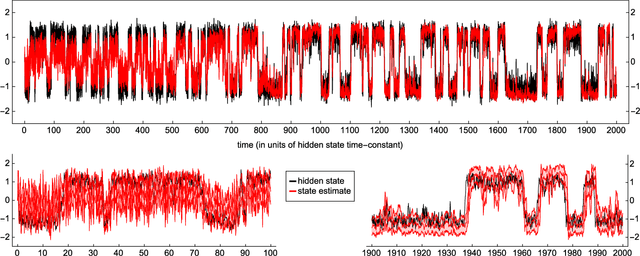

Abstract: We revisit the problem of estimating the parameters of a partially observed diffusion process, consisting of a hidden state process and an observed process, with a continuous time parameter. The estimation is to be done online, i.e. the parameter estimate should be updated recursively based on the observation filtration. We provide a theoretical analysis of the stochastic gradient ascent algorithm on the incomplete-data log-likelihood. The convergence of the algorithm is proved under suitable conditions regarding the ergodicity of the process consisting of state, filter, and tangent filter. Additionally, our parameter estimation is shown numerically to have the potential of improving suboptimal filters, and can be applied even when the system is not identifiable due to parameter redundancies. Online parameter estimation is a challenging problem that is ubiquitous in fields such as robotics, neuroscience, or finance in order to design adaptive filters and optimal controllers for unknown or changing systems. Despite this, theoretical analysis of convergence is currently lacking for most of these algorithms. This article sheds new light on the theory of convergence in continuous time.

Propagation of moments in Hawkes networks

Sep 18, 2018

Abstract: The present paper provides a mathematical description of high-order moments of spiking activity in a recurrently-connected network of Hawkes processes. It extends previous studies that have explored the case of a (linear) Hawkes network driven by deterministic rate functions to the case of a stimulation by external inputs (rate functions or spike trains) with arbitrary correlation structure. Our approach describes the spatio-temporal filtering induced by the afferent and recurrent connectivities using operators of the input moments. This algebraic viewpoint provides intuition about how the network ingredients shape the input-output mapping for moments, as well as cumulants.
