Jean Pierre Allamaa

Learning Based NMPC Adaptation for Autonomous Driving using Parallelized Digital Twin

Feb 26, 2024

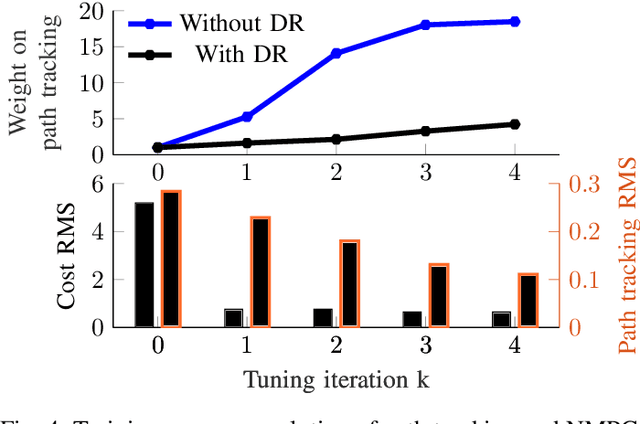

Abstract:In this work, we address the problem of transferring an autonomous driving (AD) module from one domain to another, in particular from simulation to the real world (Sim2Real). We propose a data-efficient method for online and on-the-fly learning based adaptation for parametrizable control architectures such that the target closed-loop performance is optimized under several uncertainty sources such as model mismatches, environment changes and task choice. The novelty of the work resides in leveraging black-box optimization enabled by executable digital twins, with data-driven hyper-parameter tuning through derivative-free methods to directly adapt in real-time the AD module. Our proposed method requires a minimal amount of interaction with the real-world in the randomization and online training phase. Specifically, we validate our approach in real-world experiments and show the ability to transfer and safely tune a nonlinear model predictive controller in less than 10 minutes, eliminating the need of day-long manual tuning and hours-long machine learning training phases. Our results show that the online adapted NMPC directly compensates for disturbances, avoids overtuning in simulation and for one specific task, and it generalizes for less than 15cm of tracking accuracy over a multitude of trajectories, and leads to 83% tracking improvement.

Reinforcement Learning from Simulation to Real World Autonomous Driving using Digital Twin

Nov 27, 2022

Abstract:Reinforcement learning (RL) is a promising solution for autonomous vehicles to deal with complex and uncertain traffic environments. The RL training process is however expensive, unsafe, and time consuming. Algorithms are often developed first in simulation and then transferred to the real world, leading to a common sim2real challenge that performance decreases when the domain changes. In this paper, we propose a transfer learning process to minimize the gap by exploiting digital twin technology, relying on a systematic and simultaneous combination of virtual and real world data coming from vehicle dynamics and traffic scenarios. The model and testing environment are evolved from model, hardware to vehicle in the loop and proving ground testing stages, similar to standard development cycle in automotive industry. In particular, we also integrate other transfer learning techniques such as domain randomization and adaptation in each stage. The simulation and real data are gradually incorporated to accelerate and make the transfer learning process more robust. The proposed RL methodology is applied to develop a path following steering controller for an autonomous electric vehicle. After learning and deploying the real-time RL control policy on the vehicle, we obtained satisfactory and safe control performance already from the first deployment, demonstrating the advantages of the proposed digital twin based learning process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge