Jason Kim

PromptHelper: A Prompt Recommender System for Encouraging Creativity in AI Chatbot Interactions

Jan 22, 2026Abstract:Prompting is central to interaction with AI systems, yet many users struggle to explore alternative directions, articulate creative intent, or understand how variations in prompts shape model outputs. We introduce prompt recommender systems (PRS) as an interaction approach that supports exploration, suggesting contextually relevant follow-up prompts. We present PromptHelper, a PRS prototype integrated into an AI chatbot that surfaces semantically diverse prompt suggestions while users work on real writing tasks. We evaluate PromptHelper in a 2x2 fully within-subjects study (N=32) across creative and academic writing tasks. Results show that PromptHelper significantly increases users' perceived exploration and expressiveness without increasing cognitive workload. Qualitative findings illustrate how prompt recommendations help users branch into new directions, overcome uncertainty about what to ask next, and better articulate their intent. We discuss implications for designing AI interfaces that scaffold exploratory interaction while preserving user agency, and release open-source resources to support research on prompt recommendation.

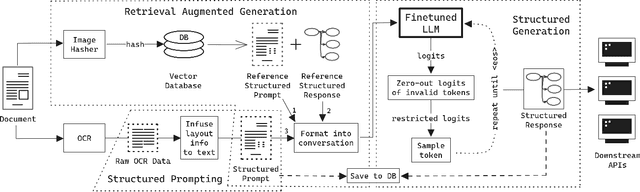

Retrieval Augmented Structured Generation: Business Document Information Extraction As Tool Use

May 30, 2024

Abstract:Business Document Information Extraction (BDIE) is the problem of transforming a blob of unstructured information (raw text, scanned documents, etc.) into a structured format that downstream systems can parse and use. It has two main tasks: Key-Information Extraction (KIE) and Line Items Recognition (LIR). In this paper, we argue that BDIE is best modeled as a Tool Use problem, where the tools are these downstream systems. We then present Retrieval Augmented Structured Generation (RASG), a novel general framework for BDIE that achieves state of the art (SOTA) results on both KIE and LIR tasks on BDIE benchmarks. The contributions of this paper are threefold: (1) We show, with ablation benchmarks, that Large Language Models (LLMs) with RASG are already competitive with or surpasses current SOTA Large Multimodal Models (LMMs) without RASG on BDIE benchmarks. (2) We propose a new metric class for Line Items Recognition, General Line Items Recognition Metric (GLIRM), that is more aligned with practical BDIE use cases compared to existing metrics, such as ANLS*, DocILE, and GriTS. (3) We provide a heuristic algorithm for backcalculating bounding boxes of predicted line items and tables without the need for vision encoders. Finally, we claim that, while LMMs might sometimes offer marginal performance benefits, LLMs + RASG is oftentimes superior given real-world applications and constraints of BDIE.

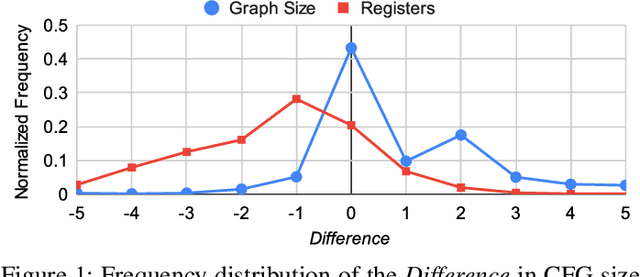

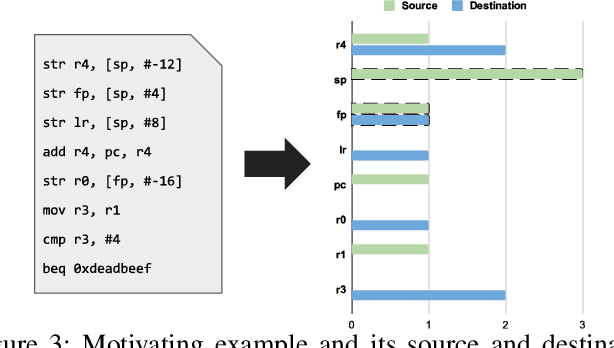

Revisiting Lightweight Compiler Provenance Recovery on ARM Binaries

May 06, 2023

Abstract:A binary's behavior is greatly influenced by how the compiler builds its source code. Although most compiler configuration details are abstracted away during compilation, recovering them is useful for reverse engineering and program comprehension tasks on unknown binaries, such as code similarity detection. We observe that previous work has thoroughly explored this on x86-64 binaries. However, there has been limited investigation of ARM binaries, which are increasingly prevalent. In this paper, we extend previous work with a shallow-learning model that efficiently and accurately recovers compiler configuration properties for ARM binaries. We apply opcode and register-derived features, that have previously been effective on x86-64 binaries, to ARM binaries. Furthermore, we compare this work with Pizzolotto et al., a recent architecture-agnostic model that uses deep learning, whose dataset and code are available. We observe that the lightweight features are reproducible on ARM binaries. We achieve over 99% accuracy, on par with state-of-the-art deep learning approaches, while achieving a 583-times speedup during training and 3,826-times speedup during inference. Finally, we also discuss findings of overfitting that was previously undetected in prior work.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge