Jakub Prokop

Panoptic Segmentation of Mammograms with Text-To-Image Diffusion Model

Jul 19, 2024

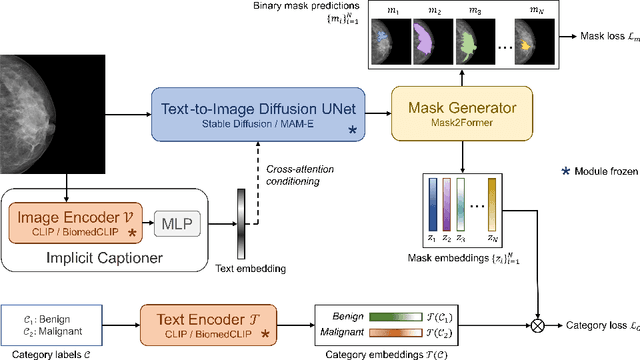

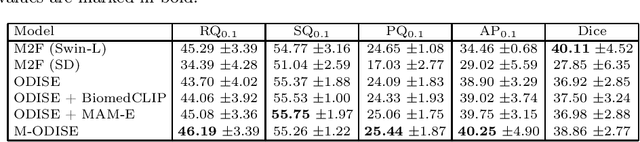

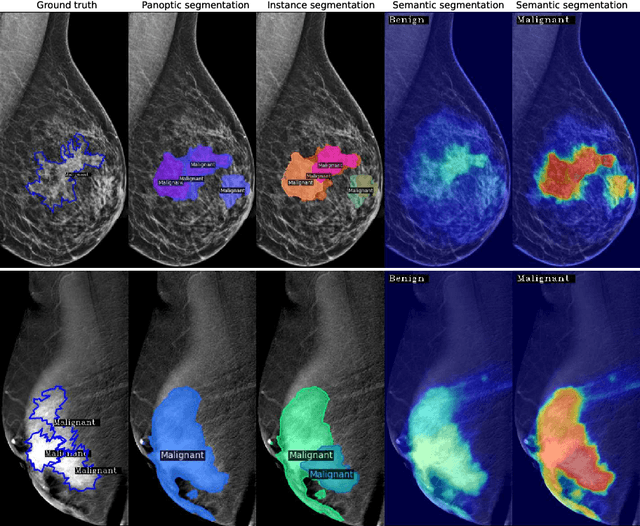

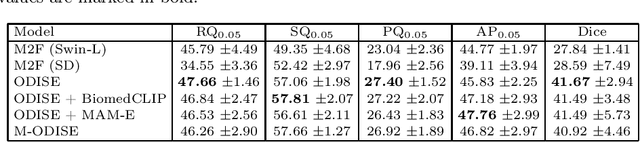

Abstract:Mammography is crucial for breast cancer surveillance and early diagnosis. However, analyzing mammography images is a demanding task for radiologists, who often review hundreds of mammograms daily, leading to overdiagnosis and overtreatment. Computer-Aided Diagnosis (CAD) systems have been developed to assist in this process, but their capabilities, particularly in lesion segmentation, remained limited. With the contemporary advances in deep learning their performance may be improved. Recently, vision-language diffusion models emerged, demonstrating outstanding performance in image generation and transferability to various downstream tasks. We aim to harness their capabilities for breast lesion segmentation in a panoptic setting, which encompasses both semantic and instance-level predictions. Specifically, we propose leveraging pretrained features from a Stable Diffusion model as inputs to a state-of-the-art panoptic segmentation architecture, resulting in accurate delineation of individual breast lesions. To bridge the gap between natural and medical imaging domains, we incorporated a mammography-specific MAM-E diffusion model and BiomedCLIP image and text encoders into this framework. We evaluated our approach on two recently published mammography datasets, CDD-CESM and VinDr-Mammo. For the instance segmentation task, we noted 40.25 AP0.1 and 46.82 AP0.05, as well as 25.44 PQ0.1 and 26.92 PQ0.05. For the semantic segmentation task, we achieved Dice scores of 38.86 and 40.92, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge