J. Tanner Slagel

Stochastic Newton and Quasi-Newton Methods for Large Linear Least-squares Problems

Feb 23, 2017

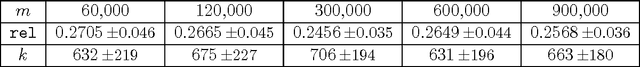

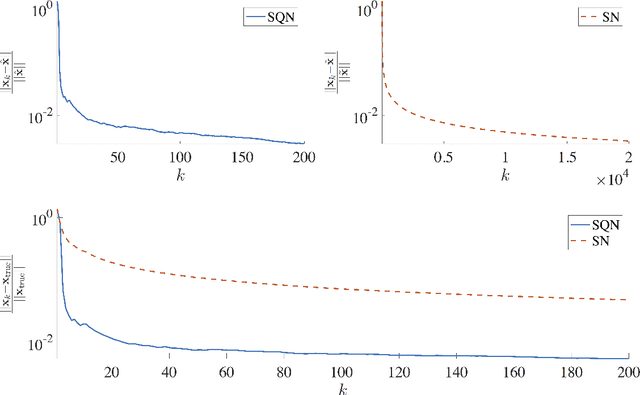

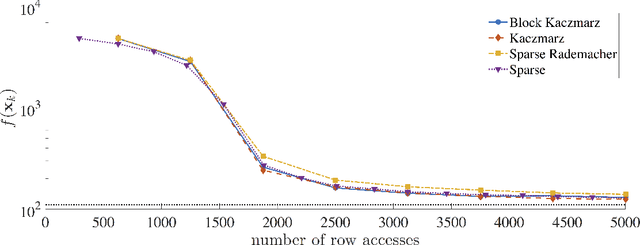

Abstract:We describe stochastic Newton and stochastic quasi-Newton approaches to efficiently solve large linear least-squares problems where the very large data sets present a significant computational burden (e.g., the size may exceed computer memory or data are collected in real-time). In our proposed framework, stochasticity is introduced in two different frameworks as a means to overcome these computational limitations, and probability distributions that can exploit structure and/or sparsity are considered. Theoretical results on consistency of the approximations for both the stochastic Newton and the stochastic quasi-Newton methods are provided. The results show, in particular, that stochastic Newton iterates, in contrast to stochastic quasi-Newton iterates, may not converge to the desired least-squares solution. Numerical examples, including an example from extreme learning machines, demonstrate the potential applications of these methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge