Ishan Sinha

Emergent Symbols through Binding in External Memory

Dec 29, 2020

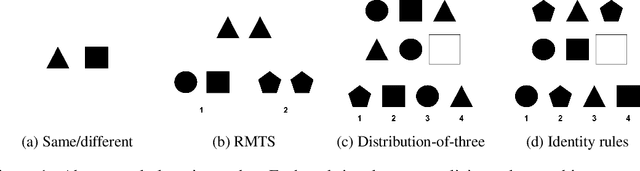

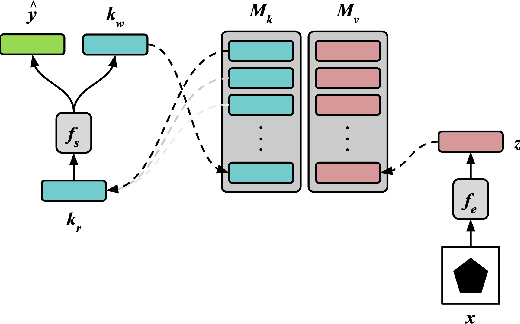

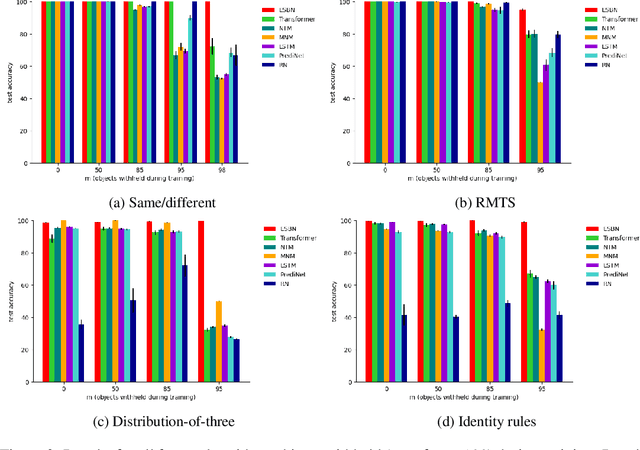

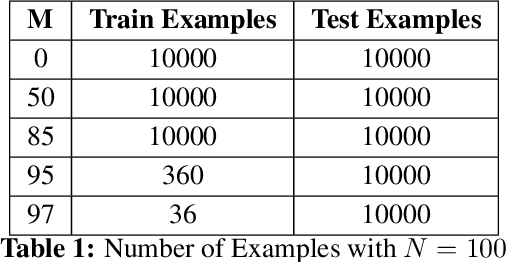

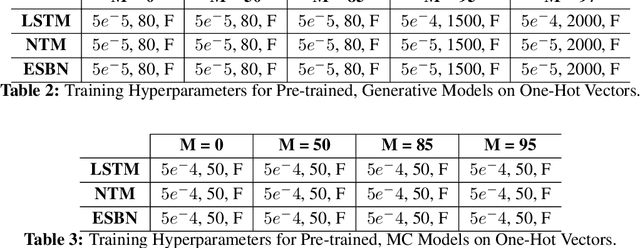

Abstract: A key aspect of human intelligence is the ability to infer abstract rules directly from high-dimensional sensory data, and to do so given only a limited amount of training experience. Deep neural network algorithms have proven to be a powerful tool for learning directly from high-dimensional data, but currently lack this capacity for data-efficient induction of abstract rules, leading some to argue that symbol-processing mechanisms will be necessary to account for this capacity. In this work, we take a step toward bridging this gap by introducing the Emergent Symbol Binding Network (ESBN), a recurrent network augmented with an external memory that enables a form of variable-binding and indirection. This binding mechanism allows symbol-like representations to emerge through the learning process without the need to explicitly incorporate symbol-processing machinery, enabling the ESBN to learn rules in a manner that is abstracted away from the particular entities to which those rules apply. Across a series of tasks, we show that this architecture displays nearly perfect generalization of learned rules to novel entities given only a limited number of training examples, and outperforms a number of other competitive neural network architectures.

A Memory-Augmented Neural Network Model of Abstract Rule Learning

Dec 15, 2020

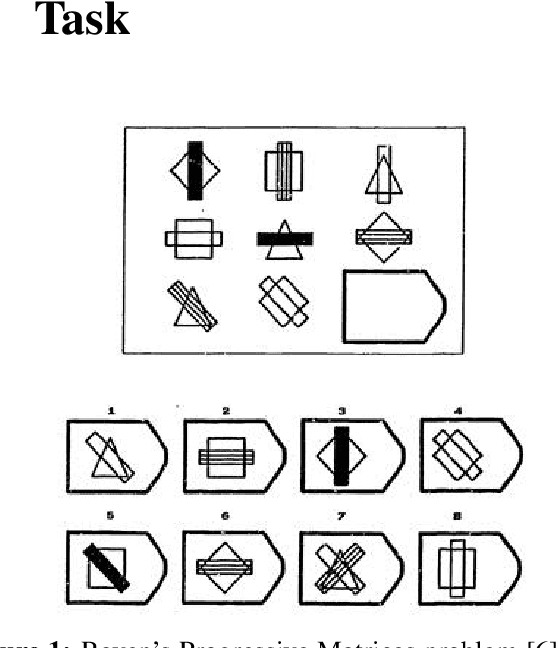

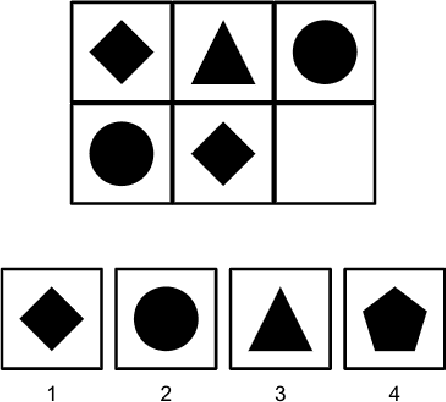

Abstract: Human intelligence is characterized by a remarkable ability to infer abstract rules from experience and apply these rules to novel domains. As such, designing neural network algorithms with this capacity is an important step toward the development of deep learning systems with more human-like intelligence. However, doing so is a major outstanding challenge, one that some argue will require neural networks to use explicit symbol-processing mechanisms. In this work, we focus on neural networks' capacity for arbitrary role-filler binding, the ability to associate abstract "roles" to context-specific "fillers," which many have argued is an important mechanism underlying the ability to learn and apply rules abstractly. Using a simplified version of Raven's Progressive Matrices, a hallmark test of human intelligence, we introduce a sequential formulation of a visual problem-solving task that requires this form of binding. Further, we introduce the Emergent Symbol Binding Network (ESBN), a recurrent neural network model that learns to use an external memory as a binding mechanism. This mechanism enables symbol-like variable representations to emerge through the ESBN's training process without the need for explicit symbol-processing machinery. We empirically demonstrate that the ESBN successfully learns the underlying abstract rule structure of our task and perfectly generalizes this rule structure to novel fillers.
