Inbar Ben-David

Learning Haptic-based Object Pose Estimation for In-hand Manipulation with Underactuated Robotic Hands

Jul 06, 2022

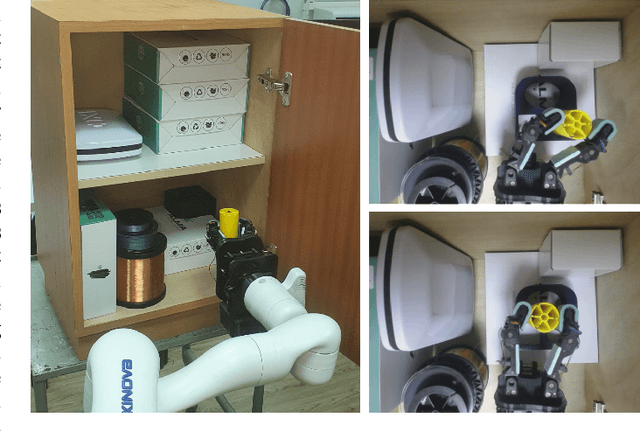

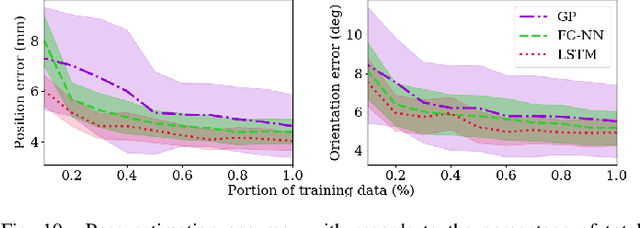

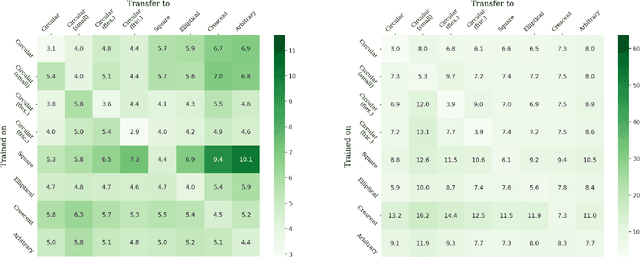

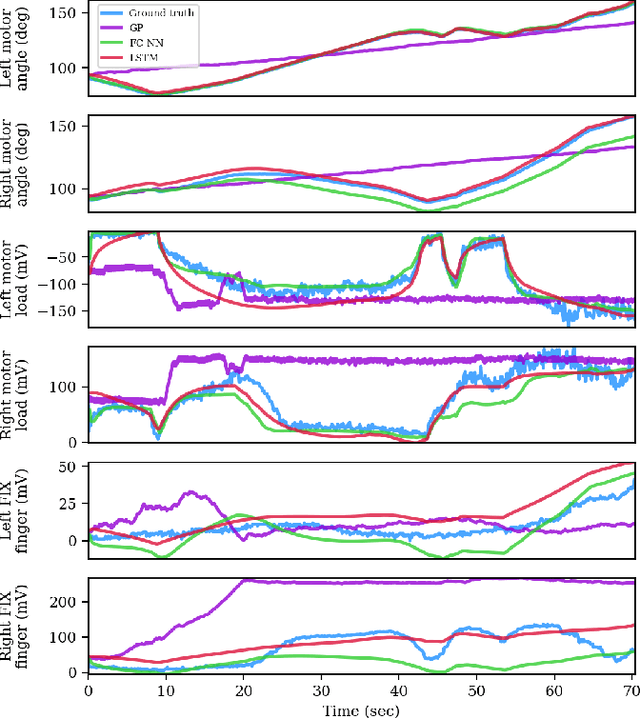

Abstract: Unlike traditional robotic hands, underactuated compliant hands are challenging to model due to inherent uncertainties. Consequently, pose estimation of a grasped object is usually performed based on visual perception. However, visual perception of the hand and object can be limited in occluded or partly occluded environments. In this paper, we aim to explore the use of haptics, i.e., kinesthetic and tactile sensing, for pose estimation and in-hand manipulation with underactuated hands. Such a haptic approach would mitigate the effect of occlusion in environments where line of sight is not always available. We put an emphasis on identifying a feature state representation of the system that does not include vision and can be obtained with simple and low-cost hardware. For tactile sensing, therefore, we propose a low-cost and flexible sensor that is mostly 3D printed along with the fingertip and can provide implicit contact information. Taking a two-finger underactuated hand as a test case, we analyze the contribution of kinesthetic and tactile features, along with various regression models, to the accuracy of the predictions. Furthermore, we propose a Model Predictive Control (MPC) approach which utilizes the pose estimation to manipulate objects to desired states solely based on haptics. We have conducted a series of experiments that validate the ability to estimate poses of various objects with different geometry, stiffness and texture, and show manipulation to goals in the workspace with relatively high accuracy.

Simple Kinesthetic Haptics for Object Recognition

Jun 11, 2022

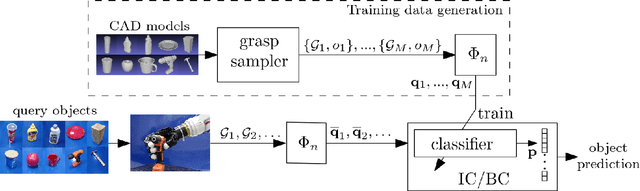

Abstract: Object recognition is an essential capability when performing various tasks. Humans naturally use visual perception, tactile perception, or both to extract object class and properties. Typical approaches for robots, however, require complex visual systems or multiple high-density tactile sensors, which can be very expensive. In addition, they usually require actual collection of a large dataset from real objects through direct interaction. In this paper, we propose a kinesthetic-based object recognition method that can be performed with any multi-fingered robotic hand whose kinematics are known. The method does not require tactile sensors and is based on observing grasps of the objects. We utilize a unique and frame-invariant parameterization of grasps to learn instances of object shapes. To train a classifier, training data is generated rapidly and solely in a computational process without interaction with real objects. We then propose and compare two iterative algorithms that can integrate any trained classifier. The classifiers and algorithms are independent of any particular robot hand and, therefore, can be applied to various hands. We show in experiments that, with only a few grasps, the algorithms achieve accurate classification. Furthermore, we show that the object recognition approach is scalable to objects of various sizes. Similarly, a global classifier is trained to identify general geometries (e.g., an ellipsoid or a box) rather than particular ones and is demonstrated on a large set of objects. Full-scale experiments and analysis are provided to show the performance of the method.
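
The abstract does not spell out the two iterative algorithms, so the following is only a minimal sketch of one plausible way to combine the outputs of any trained classifier over several grasps. It assumes each grasp yields a vector of class probabilities and fuses them with a naive-Bayes style product, stopping once one class is confident enough. The function name `fuse_grasp_predictions` and the parameter `stop_confidence` are hypothetical and are not taken from the paper.

```python
import math


def fuse_grasp_predictions(per_grasp_probs, stop_confidence=0.95):
    """Fuse per-grasp class probabilities until one class dominates.

    Illustrative assumption only: grasps are treated as independent
    observations and combined by multiplying their class probabilities.
    Returns (best_class_index, belief, number_of_grasps_used).
    """
    n_classes = len(per_grasp_probs[0])
    log_belief = [0.0] * n_classes            # uniform prior, kept in log space
    belief = [1.0 / n_classes] * n_classes
    used = 0
    for probs in per_grasp_probs:
        used += 1
        # Accumulate log-probabilities; the floor avoids log(0).
        log_belief = [lb + math.log(max(p, 1e-12))
                      for lb, p in zip(log_belief, probs)]
        # Normalize back to probabilities in a numerically stable way.
        peak = max(log_belief)
        unnormalized = [math.exp(lb - peak) for lb in log_belief]
        total = sum(unnormalized)
        belief = [u / total for u in unnormalized]
        if max(belief) >= stop_confidence:
            break                              # confident enough, stop grasping
    best = belief.index(max(belief))
    return best, belief, used


# Hypothetical classifier outputs for three successive grasps of one object.
grasps = [[0.6, 0.3, 0.1], [0.7, 0.2, 0.1], [0.8, 0.1, 0.1]]
best, belief, used = fuse_grasp_predictions(grasps)
print(best, used)
```

Working in log space keeps the product stable when many grasps are fused, and the early stop reflects the idea that only a few grasps may be needed for a confident decision.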
