Imen Chakroun

INRIA Lille - Nord Europe

Guidelines for enhancing data locality in selected machine learning algorithms

Jan 09, 2020

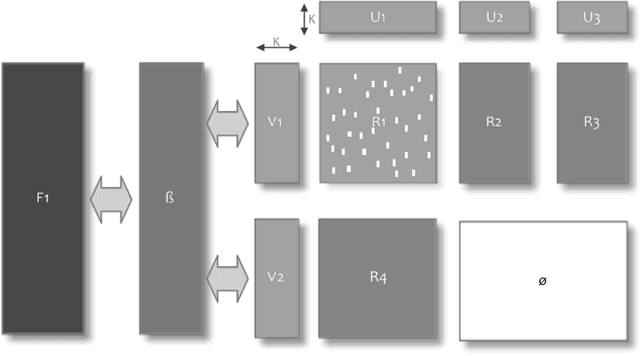

Abstract:To deal with the complexity of the new bigger and more complex generation of data, machine learning (ML) techniques are probably the first and foremost used. For ML algorithms to produce results in a reasonable amount of time, they need to be implemented efficiently. In this paper, we analyze one of the means to increase the performances of machine learning algorithms which is exploiting data locality. Data locality and access patterns are often at the heart of performance issues in computing systems due to the use of certain hardware techniques to improve performance. Altering the access patterns to increase locality can dramatically increase performance of a given algorithm. Besides, repeated data access can be seen as redundancy in data movement. Similarly, there can also be redundancy in the repetition of calculations. This work also identifies some of the opportunities for avoiding these redundancies by directly reusing computation results. We start by motivating why and how a more efficient implementation can be achieved by exploiting reuse in the memory hierarchy of modern instruction set processors. Next we document the possibilities of such reuse in some selected machine learning algorithms.

* European Commission Project: EPEEC - European joint Effort toward a Highly Productive Programming Environment for Heterogeneous Exascale Computing (EC-H2020-80151) This an extended version of arXiv:1904.11203

Reviewing Data Access Patterns and Computational Redundancy for Machine Learning Algorithms

Apr 25, 2019

Abstract:Machine learning (ML) is probably the first and foremost used technique to deal with the size and complexity of the new generation of data. In this paper, we analyze one of the means to increase the performances of ML algorithms which is exploiting data locality. Data locality and access patterns are often at the heart of performance issues in computing systems due to the use of certain hardware techniques to improve performance. Altering the access patterns to increase locality can dramatically increase performance of a given algorithm. Besides, repeated data access can be seen as redundancy in data movement. Similarly, there can also be redundancy in the repetition of calculations. This work also identifies some of the opportunities for avoiding these redundancies by directly reusing computation results. We document the possibilities of such reuse in some selected machine learning algorithms and give initial indicative results from our first experiments on data access improvement and algorithm redesign.

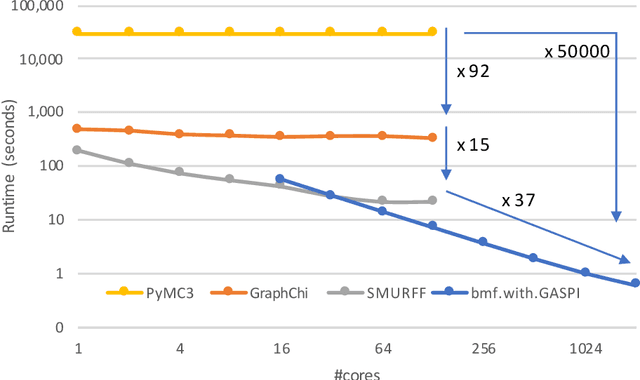

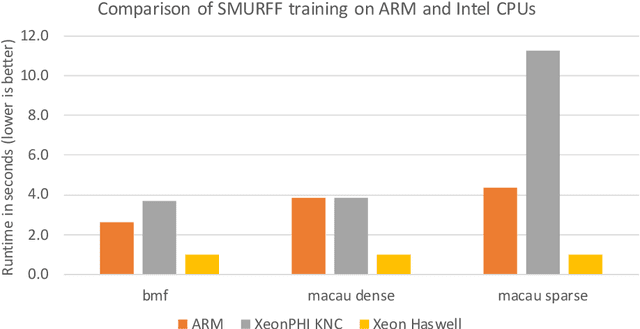

SMURFF: a High-Performance Framework for Matrix Factorization

Apr 04, 2019

Abstract:Bayesian Matrix Factorization (BMF) is a powerful technique for recommender systems because it produces good results and is relatively robust against overfitting. Yet BMF is more computationally intensive and thus more challenging to implement for large datasets. In this work we present SMURFF a high-performance feature-rich framework to compose and construct different Bayesian matrix-factorization methods. The framework has been successfully used in to do large scale runs of compound-activity prediction. SMURFF is available as open-source and can be used both on a supercomputer and on a desktop or laptop machine. Documentation and several examples are provided as Jupyter notebooks using SMURFF's high-level Python API.

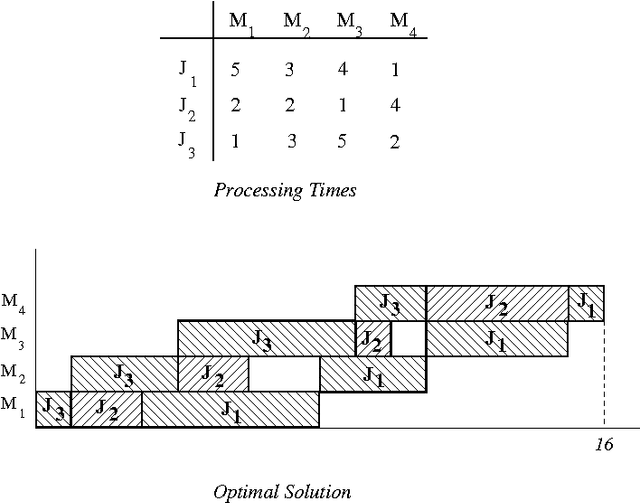

An Adaptative Multi-GPU based Branch-and-Bound. A Case Study: the Flow-Shop Scheduling Problem

Jun 21, 2012

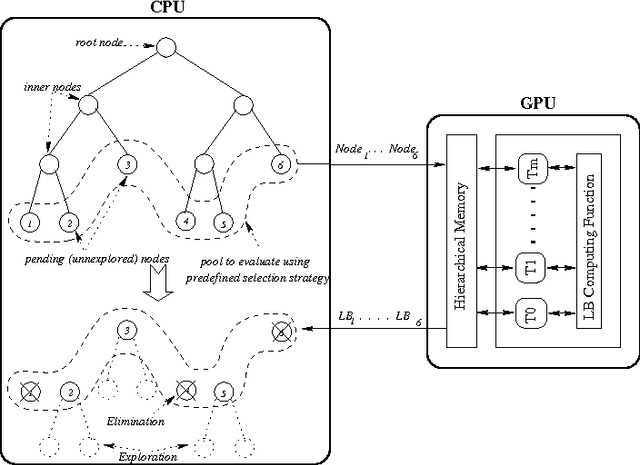

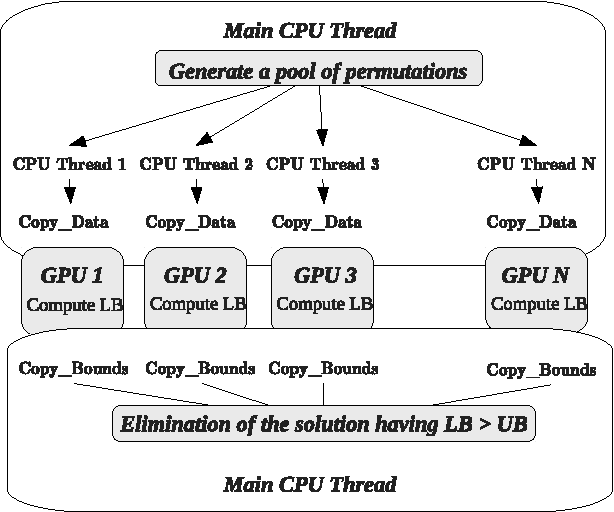

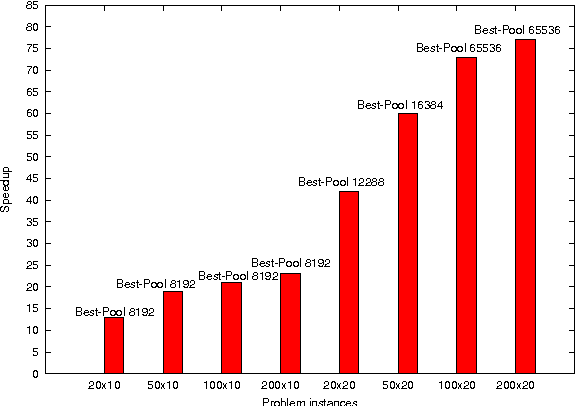

Abstract:Solving exactly Combinatorial Optimization Problems (COPs) using a Branch-and-Bound (B&B) algorithm requires a huge amount of computational resources. Therefore, we recently investigated designing B&B algorithms on top of graphics processing units (GPUs) using a parallel bounding model. The proposed model assumes parallelizing the evaluation of the lower bounds on pools of sub-problems. The results demonstrated that the size of the evaluated pool has a significant impact on the performance of B&B and that it depends strongly on the problem instance being solved. In this paper, we design an adaptative parallel B&B algorithm for solving permutation-based combinatorial optimization problems such as FSP (Flow-shop Scheduling Problem) on GPU accelerators. To do so, we propose a dynamic heuristic for parameter auto-tuning at runtime. Another challenge of this work is to exploit larger degrees of parallelism by using the combined computational power of multiple GPU devices. The approach has been applied to the permutation flow-shop problem. Extensive experiments have been carried out on well-known FSP benchmarks using an Nvidia Tesla S1070 Computing System equipped with two Tesla T10 GPUs. Compared to a CPU-based execution, accelerations up to 105 are achieved for large problem instances.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge