Ilke Oztekin

Modeling the Sequence of Brain Volumes by Local Mesh Models for Brain Decoding

Mar 03, 2016

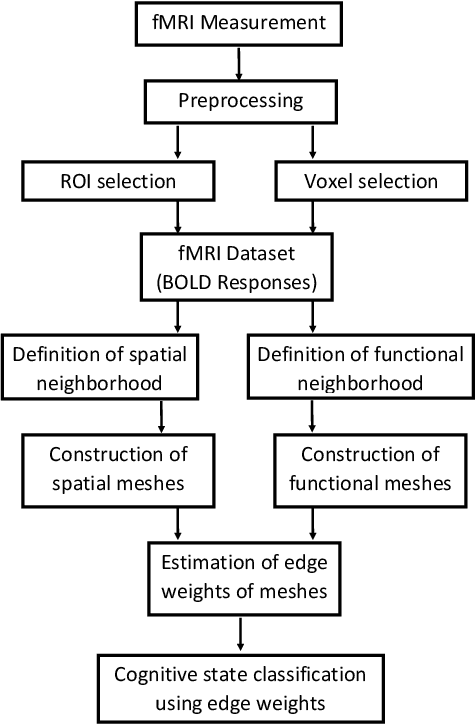

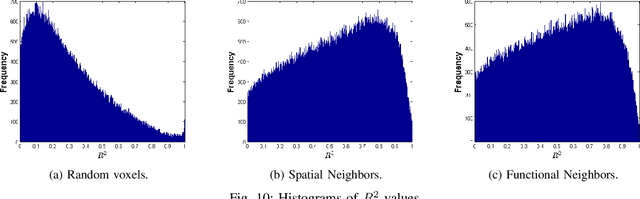

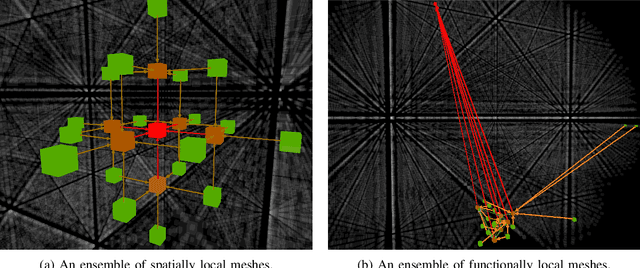

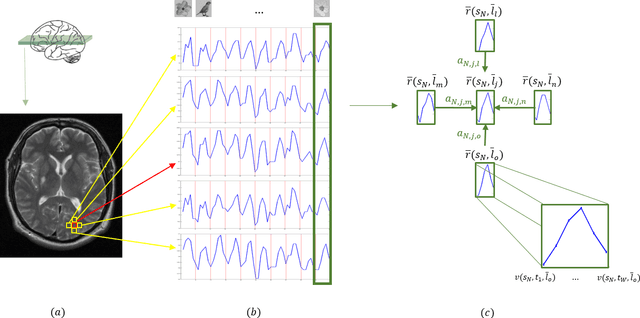

Abstract:We represent the sequence of fMRI (Functional Magnetic Resonance Imaging) brain volumes recorded during a cognitive stimulus by a graph which consists of a set of local meshes. The corresponding cognitive process, encoded in the brain, is then represented by these meshes each of which is estimated assuming a linear relationship among the voxel time series in a predefined locality. First, we define the concept of locality in two neighborhood systems, namely, the spatial and functional neighborhoods. Then, we construct spatially and functionally local meshes around each voxel, called seed voxel, by connecting it either to its spatial or functional p-nearest neighbors. The mesh formed around a voxel is a directed sub-graph with a star topology, where the direction of the edges is taken towards the seed voxel at the center of the mesh. We represent the time series recorded at each seed voxel in terms of linear combination of the time series of its p-nearest neighbors in the mesh. The relationships between a seed voxel and its neighbors are represented by the edge weights of each mesh, and are estimated by solving a linear regression equation. The estimated mesh edge weights lead to a better representation of information in the brain for encoding and decoding of the cognitive tasks. We test our model on a visual object recognition and emotional memory retrieval experiments using Support Vector Machines that are trained using the mesh edge weights as features. In the experimental analysis, we observe that the edge weights of the spatial and functional meshes perform better than the state-of-the-art brain decoding models.

Learning Deep Temporal Representations for Brain Decoding

Jan 12, 2015

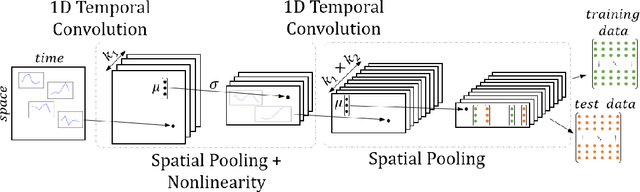

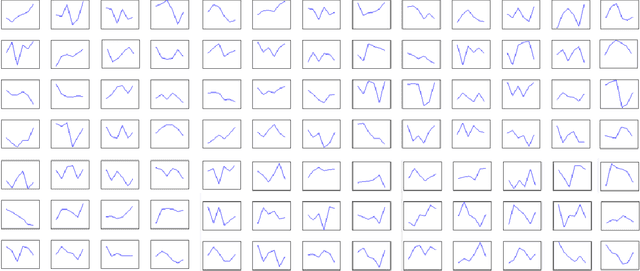

Abstract:Functional magnetic resonance imaging produces high dimensional data, with a less then ideal number of labelled samples for brain decoding tasks (predicting brain states). In this study, we propose a new deep temporal convolutional neural network architecture with spatial pooling for brain decoding which aims to reduce dimensionality of feature space along with improved classification performance. Temporal representations (filters) for each layer of the convolutional model are learned by leveraging unlabelled fMRI data in an unsupervised fashion with regularized autoencoders. Learned temporal representations in multiple levels capture the regularities in the temporal domain and are observed to be a rich bank of activation patterns which also exhibit similarities to the actual hemodynamic responses. Further, spatial pooling layers in the convolutional architecture reduce the dimensionality without losing excessive information. By employing the proposed temporal convolutional architecture with spatial pooling, raw input fMRI data is mapped to a non-linear, highly-expressive and low-dimensional feature space where the final classification is conducted. In addition, we propose a simple heuristic approach for hyper-parameter tuning when no validation data is available. Proposed method is tested on a ten class recognition memory experiment with nine subjects. The results support the efficiency and potential of the proposed model, compared to the baseline multi-voxel pattern analysis techniques.

Discriminative Functional Connectivity Measures for Brain Decoding

Mar 06, 2014

Abstract:We propose a statistical learning model for classifying cognitive processes based on distributed patterns of neural activation in the brain, acquired via functional magnetic resonance imaging (fMRI). In the proposed learning method, local meshes are formed around each voxel. The distance between voxels in the mesh is determined by using a functional neighbourhood concept. In order to define the functional neighbourhood, the similarities between the time series recorded for voxels are measured and functional connectivity matrices are constructed. Then, the local mesh for each voxel is formed by including the functionally closest neighbouring voxels in the mesh. The relationship between the voxels within a mesh is estimated by using a linear regression model. These relationship vectors, called Functional Connectivity aware Local Relational Features (FC-LRF) are then used to train a statistical learning machine. The proposed method was tested on a recognition memory experiment, including data pertaining to encoding and retrieval of words belonging to ten different semantic categories. Two popular classifiers, namely k-nearest neighbour (k-nn) and Support Vector Machine (SVM), are trained in order to predict the semantic category of the item being retrieved, based on activation patterns during encoding. The classification performance of the Functional Mesh Learning model, which range in 62%-71% is superior to the classical multi-voxel pattern analysis (MVPA) methods, which range in 40%-48%, for ten semantic categories.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge