Ignacio Albert-Smet

Optimizing Medical Treatment for Sepsis in Intensive Care: from Reinforcement Learning to Pre-Trial Evaluation

Mar 18, 2020

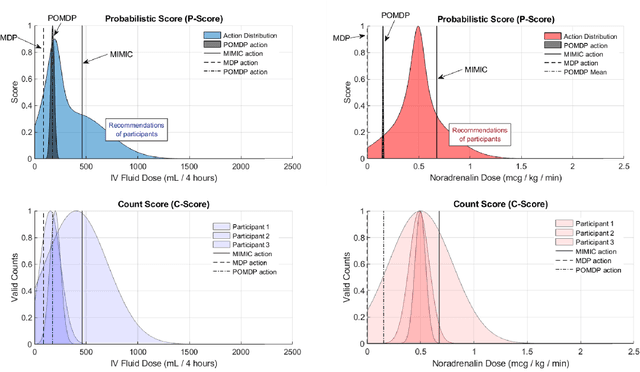

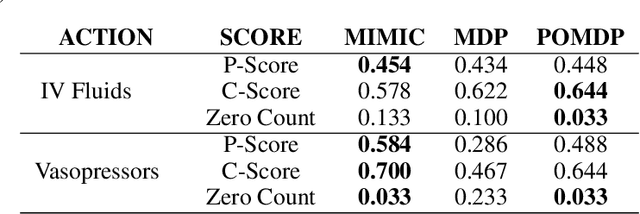

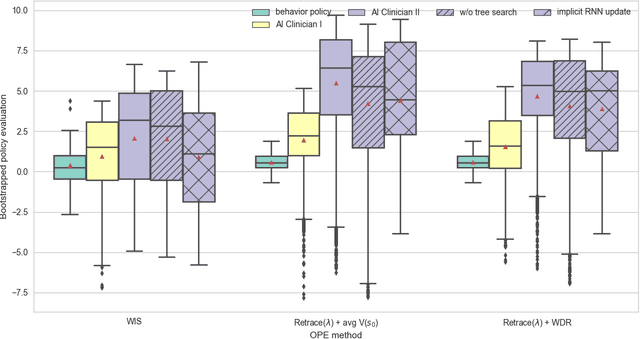

Abstract:Our aim is to establish a framework where reinforcement learning (RL) of optimizing interventions retrospectively allows us a regulatory compliant pathway to prospective clinical testing of the learned policies in a clinical deployment. We focus on infections in intensive care units which are one of the major causes of death and difficult to treat because of the complex and opaque patient dynamics, and the clinically debated, highly-divergent set of intervention policies required by each individual patient, yet intensive care units are naturally data rich. In our work, we build on RL approaches in healthcare ("AI Clinicians"), and learn off-policy continuous dosing policy of pharmaceuticals for sepsis treatment using historical intensive care data under partially observable MDPs (POMDPs). POMPDs capture uncertainty in patient state better by taking in all historical information, yielding an efficient representation, which we investigate through ablations. We compensate for the lack of exploration in our retrospective data by evaluating each encountered state with a best-first tree search. We mitigate state distributional shift by optimizing our policy in the vicinity of the clinicians' compound policy. Crucially, we evaluate our model recommendations using not only conventional policy evaluations but a novel framework that incorporates human experts: a model-agnostic pre-clinical evaluation method to estimate the accuracy and uncertainty of clinician's decisions versus our system recommendations when confronted with the same individual patient history ("shadow mode").

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge