Idriss Malek

Loss-Guided Auxiliary Agents for Overcoming Mode Collapse in GFlowNets

May 21, 2025

Abstract: Although Generative Flow Networks (GFlowNets) are designed to capture multiple modes of a reward function, they often suffer from mode collapse in practice, getting trapped in early discovered modes and requiring prolonged training to find diverse solutions. Existing exploration techniques may rely on heuristic novelty signals. We propose Loss-Guided GFlowNets (LGGFN), a novel approach where an auxiliary GFlowNet's exploration is directly driven by the main GFlowNet's training loss. By prioritizing trajectories where the main model exhibits high loss, LGGFN focuses sampling on poorly understood regions of the state space. This targeted exploration significantly accelerates the discovery of diverse, high-reward samples. Empirically, across various benchmarks including grid environments, structured sequence generation, and Bayesian structure learning, LGGFN consistently enhances exploration efficiency and sample diversity compared to baselines. For instance, on a challenging sequence generation task, it discovered over 40 times more unique valid modes while simultaneously reducing the exploration error metric by approximately 99%.
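The idea of prioritizing trajectories where the main model has high loss can be illustrated with a minimal sketch. This is not the LGGFN algorithm itself: the abstract does not say how the loss enters the auxiliary agent's objective, so the sketch swaps in a simple softmax over per-trajectory losses as a stand-in. The names `loss_guided_weights`, `sample_trajectories`, and the `temperature` parameter are hypothetical.

```python
import math
import random

def loss_guided_weights(losses, temperature=1.0):
    """Map per-trajectory losses of the main model to sampling probabilities.

    Assumption: a softmax over losses stands in for the loss-driven
    exploration signal; the paper's actual formulation may differ.
    """
    scaled = [loss / temperature for loss in losses]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_trajectories(trajectories, losses, k, rng):
    """Draw k trajectories, favoring those the main model fits poorly."""
    weights = loss_guided_weights(losses)
    return rng.choices(trajectories, weights=weights, k=k)

# Hypothetical trajectories and losses, for illustration only.
trajs = ["a", "b", "c"]
main_losses = [0.1, 2.0, 0.5]
batch = sample_trajectories(trajs, main_losses, k=5, rng=random.Random(0))
```

Under this stand-in, the trajectory with the highest main-model loss receives the largest sampling probability, which mirrors the prioritization described in the abstract.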

LLM-BABYBENCH: Understanding and Evaluating Grounded Planning and Reasoning in LLMs

May 17, 2025

Abstract: Assessing the capacity of Large Language Models (LLMs) to plan and reason within the constraints of interactive environments is crucial for developing capable AI agents. We introduce **LLM-BabyBench**, a new benchmark suite designed specifically for this purpose. Built upon a textual adaptation of the procedurally generated BabyAI grid world, this suite evaluates LLMs on three fundamental aspects of grounded intelligence: (1) predicting the consequences of actions on the environment state (**Predict** task), (2) generating sequences of low-level actions to achieve specified objectives (**Plan** task), and (3) decomposing high-level instructions into coherent subgoal sequences (**Decompose** task). We detail the methodology for generating the three corresponding datasets (`LLM-BabyBench-Predict`, `-Plan`, `-Decompose`) by extracting structured information from an expert agent operating within the text-based environment. Furthermore, we provide a standardized evaluation harness and metrics, including environment interaction for validating generated plans, to facilitate reproducible assessment of diverse LLMs. Initial baseline results highlight the challenges posed by these grounded reasoning tasks. The benchmark suite, datasets, data generation code, and evaluation code are made publicly available ([GitHub](https://github.com/choukrani/llm-babybench), [HuggingFace](https://huggingface.co/datasets/salem-mbzuai/LLM-BabyBench)).

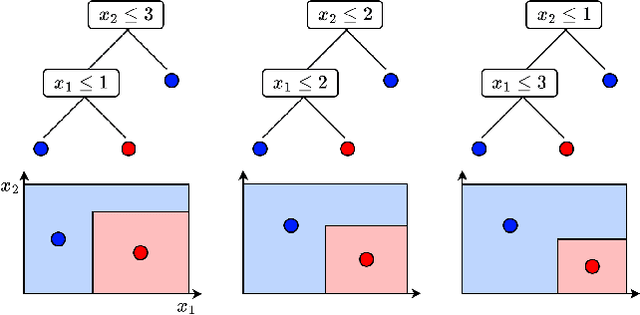

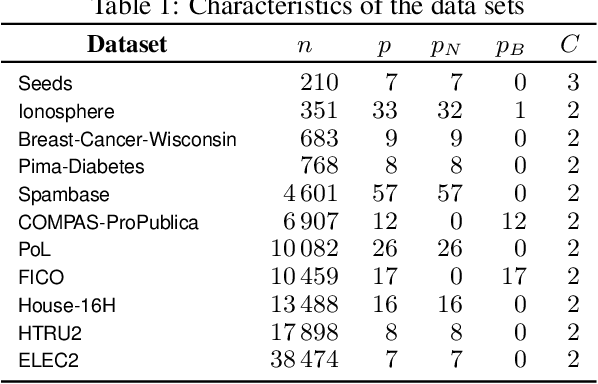

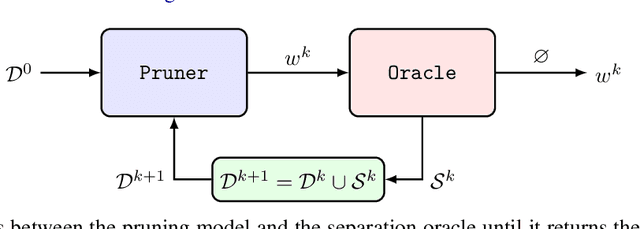

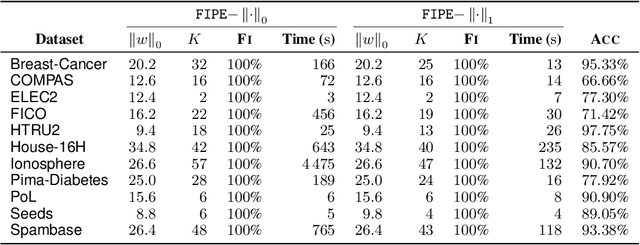

Free Lunch in the Forest: Functionally-Identical Pruning of Boosted Tree Ensembles

Aug 28, 2024

Abstract: Tree ensembles, including boosting methods, are highly effective and widely used for tabular data. However, large ensembles lack interpretability and require longer inference times. We introduce a method to prune a tree ensemble into a reduced version that is "functionally identical" to the original model. In other words, our method guarantees that the prediction function stays unchanged for any possible input. As a consequence, this pruning algorithm is lossless for any aggregated metric. We formalize the problem of functionally identical pruning on ensembles, introduce an exact optimization model, and provide a fast yet highly effective method to prune large ensembles. Our algorithm iteratively prunes considering a finite set of points, which is incrementally augmented using an adversarial model. In multiple computational experiments, we show that our approach is a "free lunch", significantly reducing the ensemble size without altering the model's behavior. Thus, we can preserve state-of-the-art performance at a fraction of the original model's size.
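To make "functionally identical" concrete, here is a toy sketch on one-dimensional decision stumps. It is not the paper's optimization model or its adversarial point generation; it swaps in a much simpler reduction (merging stumps that share a threshold) purely to show an ensemble shrinking while its prediction function stays the same on every input. The stump representation and the names `predict`, `merge_duplicate_stumps`, and `check_points` are assumptions made for illustration.

```python
from collections import defaultdict

def predict(stumps, x):
    """Ensemble output: each (threshold, weight) stump adds weight if x > threshold."""
    return sum(w for t, w in stumps if x > t)

def merge_duplicate_stumps(stumps):
    """Sum the weights of stumps sharing a threshold; drop zero-weight results.

    Toy stand-in for pruning: the output function is unchanged by construction.
    """
    merged = defaultdict(float)
    for t, w in stumps:
        merged[t] += w
    return [(t, w) for t, w in sorted(merged.items()) if w != 0.0]

def check_points(stumps):
    """One point per region where the piecewise-constant output can differ."""
    ts = sorted({t for t, _ in stumps})
    if not ts:
        return [0.0]
    mids = [(a + b) / 2 for a, b in zip(ts, ts[1:])]
    return [ts[0] - 1.0] + mids + [ts[-1] + 1.0] + ts

# Hypothetical ensemble with exactly representable weights.
original = [(1.0, 0.5), (2.0, 0.25), (1.0, 0.25), (2.0, -0.25)]
pruned = merge_duplicate_stumps(original)
same = all(abs(predict(original, x) - predict(pruned, x)) < 1e-12
           for x in check_points(original))
```

Because a sum of stumps is piecewise constant, agreement on one point per region (plus the thresholds themselves) is enough to conclude agreement everywhere in this toy setting; the paper's method addresses the general case for full tree ensembles.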
