Houjian Guo

Using joint training speaker encoder with consistency loss to achieve cross-lingual voice conversion and expressive voice conversion

Jul 01, 2023

Abstract:Voice conversion systems have made significant advancements in terms of naturalness and similarity in common voice conversion tasks. However, their performance in more complex tasks such as cross-lingual voice conversion and expressive voice conversion remains imperfect. In this study, we propose a novel approach that combines a jointly trained speaker encoder and content features extracted from the cross-lingual speech recognition model Whisper to achieve high-quality cross-lingual voice conversion. Additionally, we introduce a speaker consistency loss to the joint encoder, which improves the similarity between the converted speech and the reference speech. To further explore the capabilities of the joint speaker encoder, we use the phonetic posteriorgram as the content feature, which enables the model to effectively reproduce both the speaker characteristics and the emotional aspects of the reference speech.

QuickVC: Any-to-many Voice Conversion Using Inverse Short-time Fourier Transform for Faster Conversion

Feb 23, 2023

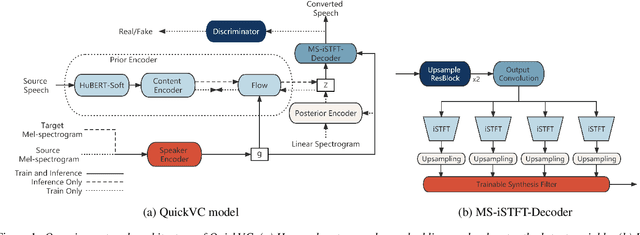

Abstract:With the development of automatic speech recognition (ASR) and text-to-speech (TTS) technology, high-quality voice conversion (VC) can be achieved by extracting source content information and target speaker information to reconstruct waveforms. However, current methods still require improvement in terms of inference speed. In this study, we propose a lightweight VITS-based VC model that uses the HuBERT-Soft model to extract content information features without speaker information. Through subjective and objective experiments on synthesized speech, the proposed model demonstrates competitive results in terms of naturalness and similarity. Importantly, unlike the original VITS model, we use the inverse short-time Fourier transform (iSTFT) to replace the most computationally expensive part. Experimental results show that our model can generate samples at over 5000 kHz on the 3090 GPU and over 250 kHz on the i9-10900K CPU, achieving competitive speed for the same hardware configuration.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge