Hoonsang Yoon

DSTEA: Dialogue State Tracking with Entity Adaptive Pre-training

Jul 08, 2022

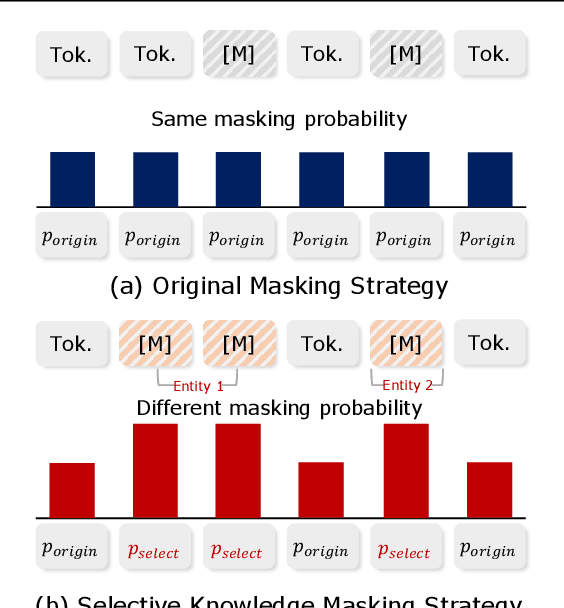

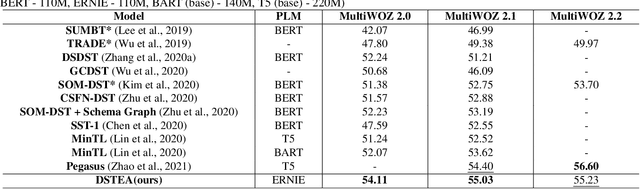

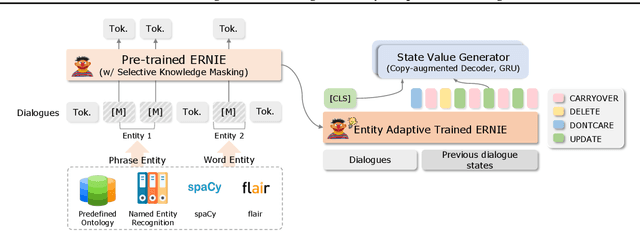

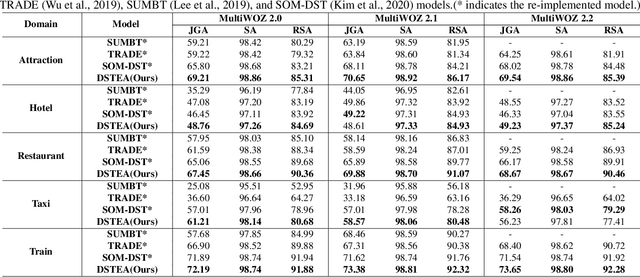

Abstract: Dialogue state tracking (DST) is a core sub-module of a dialogue system, which aims to extract the appropriate belief state (domain-slot-value) from system and user utterances. Most previous studies have attempted to improve performance by increasing the size of the pre-trained model or using additional features such as graph relations. In this study, we propose dialogue state tracking with entity adaptive pre-training (DSTEA), a system in which the encoder of the DST model is trained more intensively on key entities in a sentence. DSTEA extracts important entities from input dialogues in four ways, and then applies selective knowledge masking to train the model effectively. Although DSTEA conducts only pre-training without directly infusing additional knowledge into the DST model, it achieved better performance than the best-known benchmark models on MultiWOZ 2.0, 2.1, and 2.2. The effectiveness of DSTEA was verified through various comparative experiments with regard to the entity type and different adaptive settings.
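The abstract does not spell out how selective knowledge masking works, so the following is only a minimal sketch of the general idea of masking entity tokens more aggressively than other tokens. The function name `selective_mask`, the two probabilities, and the way entity positions are supplied are all assumptions made for illustration, not the authors' implementation.

```python
import random
from typing import List, Set

MASK = "[MASK]"  # placeholder mask token (assumed; a real tokenizer defines its own)


def selective_mask(
    tokens: List[str],
    entity_positions: Set[int],
    p_entity: float,
    p_other: float,
    rng: random.Random,
) -> List[str]:
    """Mask each token independently; entity tokens use p_entity, others p_other.

    Hypothetical helper: entity_positions is assumed to come from some
    upstream entity extractor, which is not modelled here.
    """
    masked = []
    for i, tok in enumerate(tokens):
        p = p_entity if i in entity_positions else p_other
        masked.append(MASK if rng.random() < p else tok)
    return masked


# Illustrative utterance; positions 2 and 4 are treated as entities.
example_tokens = ["book", "a", "hotel", "in", "cambridge"]
example_entities = {2, 4}
example_masked = selective_mask(
    example_tokens, example_entities, p_entity=1.0, p_other=0.0, rng=random.Random(0)
)
```

With `p_entity=1.0` and `p_other=0.0` the result is deterministic: only the two entity tokens are replaced. In practice both probabilities would presumably lie strictly between 0 and 1.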

Mismatch between Multi-turn Dialogue and its Evaluation Metric in Dialogue State Tracking

Mar 31, 2022

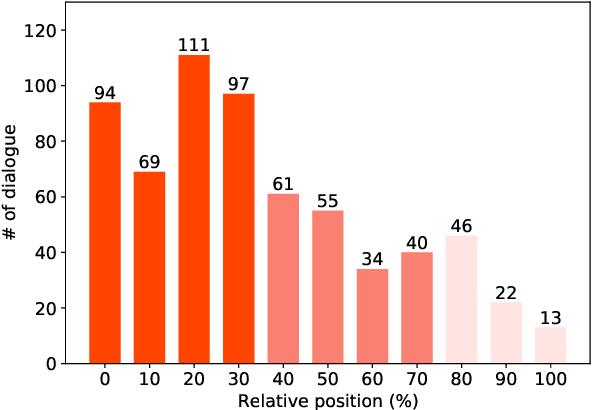

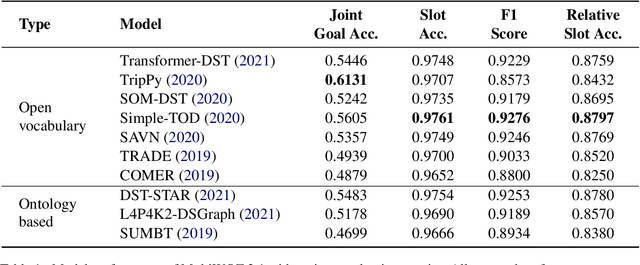

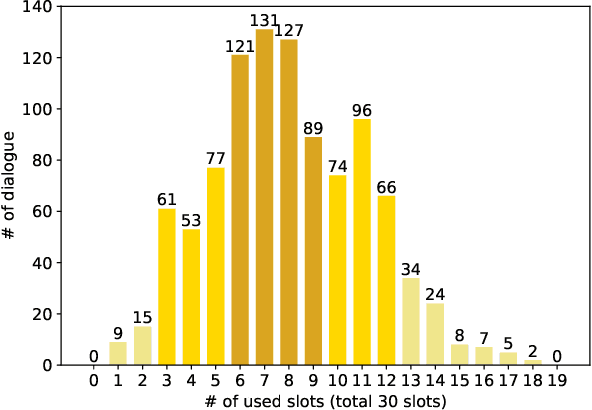

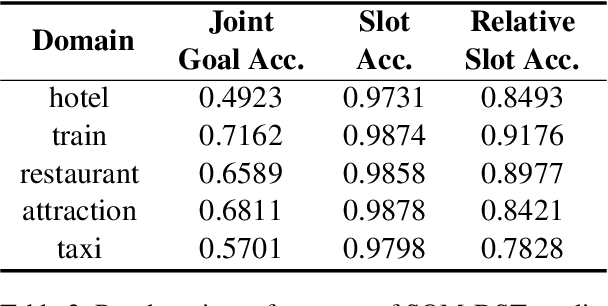

Abstract: Dialogue state tracking (DST) aims to extract essential information from multi-turn dialogue situations and take appropriate actions. A belief state, one of the core pieces of information, refers to the subject and its specific content, and appears in the form of domain-slot-value. The trained model predicts "accumulated" belief states in every turn, and joint goal accuracy and slot accuracy are mainly used to evaluate the prediction; however, we show that the current evaluation metrics have a critical limitation when evaluating belief states accumulated as the dialogue proceeds, especially on the most widely used MultiWOZ dataset. Additionally, we propose relative slot accuracy to complement existing metrics. Relative slot accuracy does not depend on the number of predefined slots, and allows intuitive evaluation by assigning relative scores according to the turn of each dialogue. This study also encourages reporting not only joint goal accuracy but also various complementary metrics in DST tasks, for the sake of a realistic evaluation.
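To make the contrast between the metrics concrete, here is a minimal per-turn sketch. Joint goal accuracy and slot accuracy follow their commonly used definitions. The relative variant is one reading of the abstract's description (the denominator counts only slots that appear in the gold or predicted state) and should be treated as an assumption; the paper itself holds the precise definition. All function names and the example state are hypothetical.

```python
from typing import Dict, Tuple

State = Dict[str, str]  # maps "domain-slot" to its value


def count_errors(gold: State, pred: State) -> Tuple[int, int]:
    """Return (missed, wrong): gold slots absent from pred, and
    predicted slots whose value disagrees with gold (or is spurious)."""
    missed = sum(1 for slot in gold if slot not in pred)
    wrong = sum(1 for slot, value in pred.items() if gold.get(slot) != value)
    return missed, wrong


def joint_goal_accuracy(gold: State, pred: State) -> float:
    """1.0 only when the whole accumulated state matches exactly."""
    return 1.0 if gold == pred else 0.0


def slot_accuracy(gold: State, pred: State, num_slots: int) -> float:
    """(T - M - W) / T, with T the number of predefined slots."""
    missed, wrong = count_errors(gold, pred)
    return (num_slots - missed - wrong) / num_slots


def relative_slot_accuracy(gold: State, pred: State) -> float:
    """Assumed form: same numerator, but T counts only slots that
    appear in gold or pred for this turn; 0.0 if there are none."""
    active = len(set(gold) | set(pred))
    if active == 0:
        return 0.0
    missed, wrong = count_errors(gold, pred)
    return (active - missed - wrong) / active


# Illustrative turn: one of two active slots is predicted incorrectly.
gold_state = {"hotel-area": "north", "hotel-stars": "4"}
pred_state = {"hotel-area": "north", "hotel-stars": "3"}
NUM_SLOTS = 30  # illustrative count of predefined slots

jga = joint_goal_accuracy(gold_state, pred_state)        # 0.0
sa = slot_accuracy(gold_state, pred_state, NUM_SLOTS)    # 29/30
rsa = relative_slot_accuracy(gold_state, pred_state)     # 0.5
```

In this example a single wrong value drives joint goal accuracy to zero while slot accuracy stays near 0.97, because the many unfilled predefined slots count as correct. The relative score of 0.5 sits between the two, which illustrates why a metric independent of the number of predefined slots can be easier to interpret.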

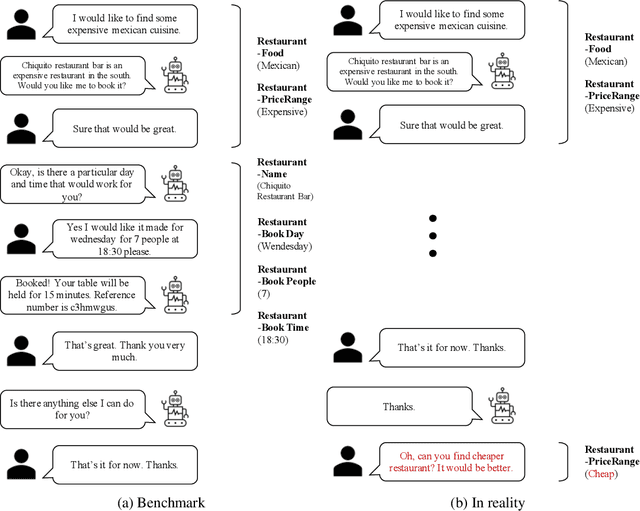

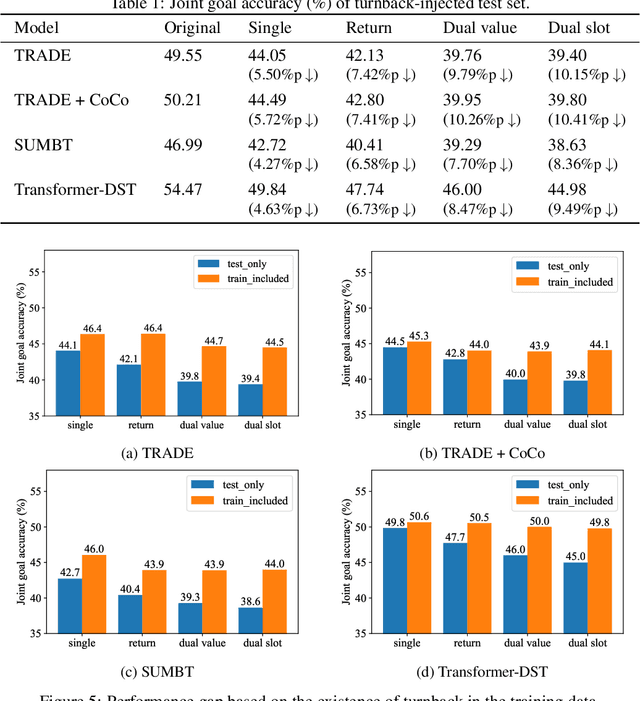

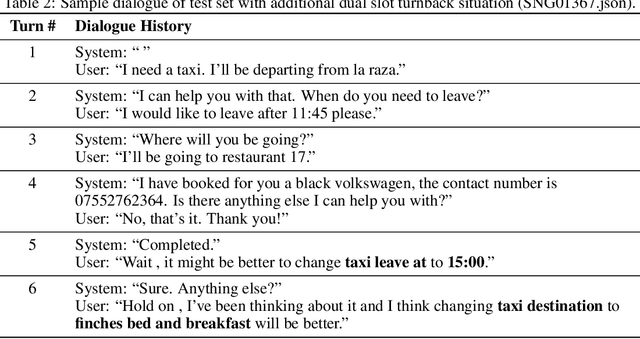

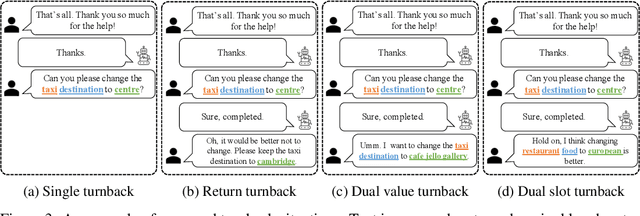

Oh My Mistake!: Toward Realistic Dialogue State Tracking including Turnback Utterances

Aug 28, 2021

Abstract: The primary purpose of dialogue state tracking (DST), a critical component of an end-to-end conversational system, is to build a model that responds well to real-world situations. Although we often change our minds during ordinary conversations, current benchmark datasets do not adequately reflect such occurrences and instead consist of over-simplified conversations, in which no one changes their mind during a conversation. The main question inspiring the present study is: "Are current benchmark datasets sufficiently diverse to handle casual conversations in which one changes their mind?" We found that the answer is "No", because simply injecting template-based turnback utterances significantly degrades the DST model performance. The test joint goal accuracy on MultiWOZ decreased by over 5 percentage points when the simplest form of turnback utterance was injected. Moreover, the performance degradation worsens when facing more complicated turnback situations. However, we also observed that the performance rebounds when a turnback is appropriately included in the training dataset, implying that the problem is not with the DST models but rather with the construction of the benchmark dataset.
