Henry Turner

Speaker Anonymization with Distribution-Preserving X-Vector Generation for the VoicePrivacy Challenge 2020

Oct 26, 2020

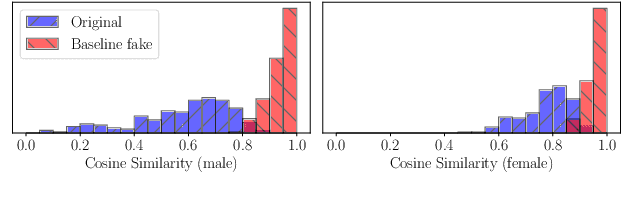

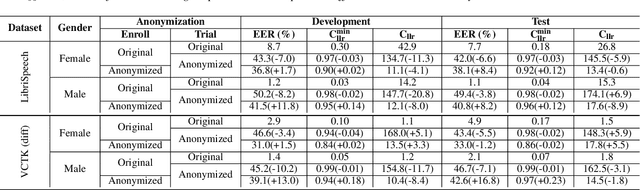

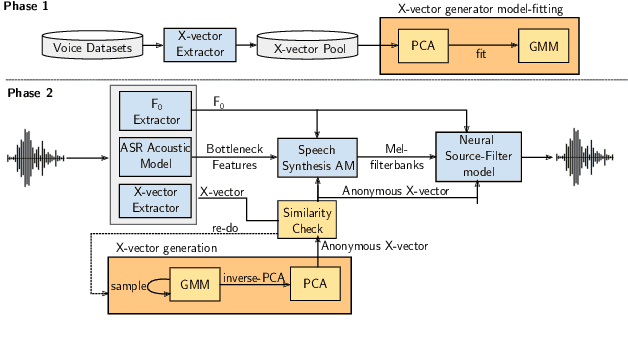

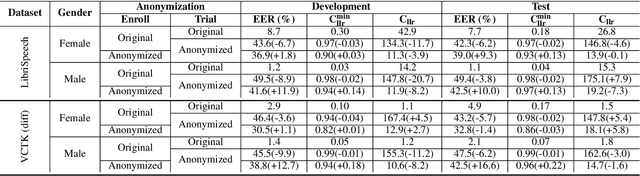

Abstract:In this paper, we present a Distribution-Preserving Voice Anonymization technique, as our submission to the VoicePrivacy Challenge 2020. We notice that the challenge baseline system generates fake X-vectors which are very similar to each other, significantly more so than those extracted from organic speakers. This difference arises from averaging many X-vectors from a pool of speakers in the anonymization processs, causing a loss of information. We propose a new method to generate fake X-vectors which overcomes these limitations by preserving the distributional properties of X-vectors and their intra-similarity. We use population data to learn the properties of the X-vector space, before fitting a generative model which we use to sample fake X-vectors. We show how this approach generates X-vectors that more closely follow the expected intra-similarity distribution of organic speaker X-vectors. Our method can be easily integrated with others as the anonymization component of the system and removes the need to distribute a pool of speakers to use during the anonymization. Our approach leads to an increase in EER of up to 16.8\% in males and 8.4\% in females in scenarios where enrollment and trial utterances are anonymized versus the baseline solution, demonstrating the diversity of our generated voices.

SLAP: Improving Physical Adversarial Examples with Short-Lived Adversarial Perturbations

Jul 08, 2020

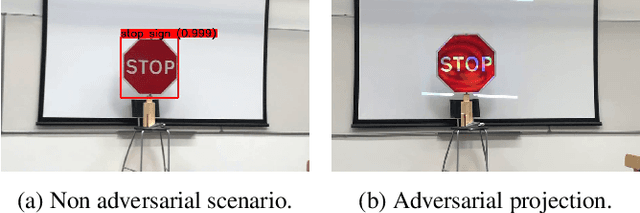

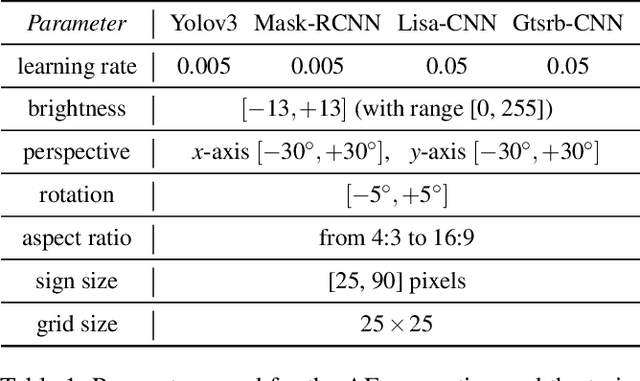

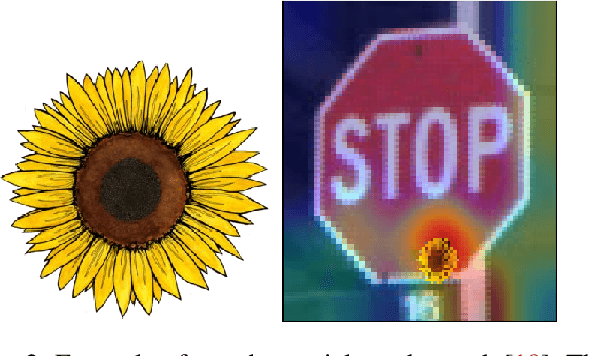

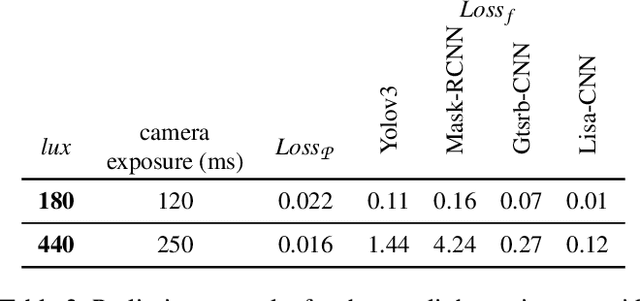

Abstract:Whilst significant research effort into adversarial examples (AE) has emerged in recent years, the main vector to realize these attacks in the real-world currently relies on static adversarial patches, which are limited in their conspicuousness and can not be modified once deployed. In this paper, we propose Short-Lived Adversarial Perturbations (SLAP), a novel technique that allows adversaries to realize robust, dynamic real-world AE from a distance. As we show in this paper, such attacks can be achieved using a light projector to shine a specifically crafted adversarial image in order to perturb real-world objects and transform them into AE. This allows the adversary greater control over the attack compared to adversarial patches: (i) projections can be dynamically turned on and off or modified at will, (ii) projections do not suffer from the locality constraint imposed by patches, making them harder to detect. We study the feasibility of SLAP in the self-driving scenario, targeting both object detector and traffic sign recognition tasks. We demonstrate that the proposed method generates AE that are robust to different environmental conditions for several networks and lighting conditions: we successfully cause misclassifications of state-of-the-art networks such as Yolov3 and Mask-RCNN with up to 98% success rate for a variety of angles and distances. Additionally, we demonstrate that AE generated with SLAP can bypass SentiNet, a recent AE detection method which relies on the fact that adversarial patches generate highly salient and localized areas in the input images.

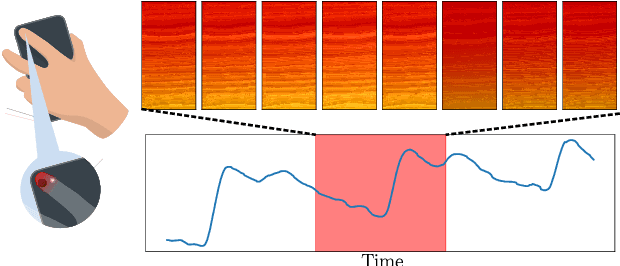

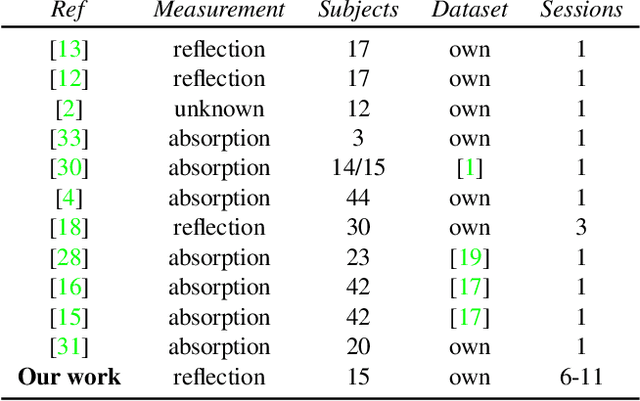

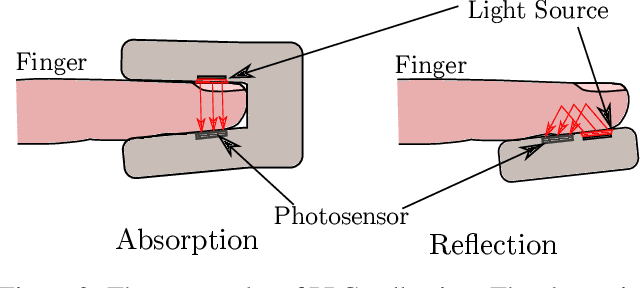

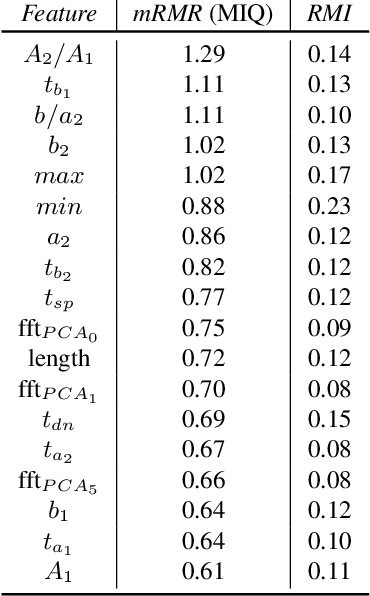

Seeing Red: PPG Biometrics Using Smartphone Cameras

Apr 15, 2020

Abstract:In this paper, we propose a system that enables photoplethysmogram (PPG)-based authentication by using a smartphone camera. PPG signals are obtained by recording a video from the camera as users are resting their finger on top of the camera lens. The signals can be extracted based on subtle changes in the video that are due to changes in the light reflection properties of the skin as the blood flows through the finger. We collect a dataset of PPG measurements from a set of 15 users over the course of 6-11 sessions per user using an iPhone X for the measurements. We design an authentication pipeline that leverages the uniqueness of each individual's cardiovascular system, identifying a set of distinctive features from each heartbeat. We conduct a set of experiments to evaluate the recognition performance of the PPG biometric trait, including cross-session scenarios which have been disregarded in previous work. We found that when aggregating sufficient samples for the decision we achieve an EER as low as 8%, but that the performance greatly decreases in the cross-session scenario, with an average EER of 20%.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge