Haozhi Lan

Contrastive Graph Representation Learning with Adversarial Cross-view Reconstruction and Information Bottleneck

Aug 01, 2024

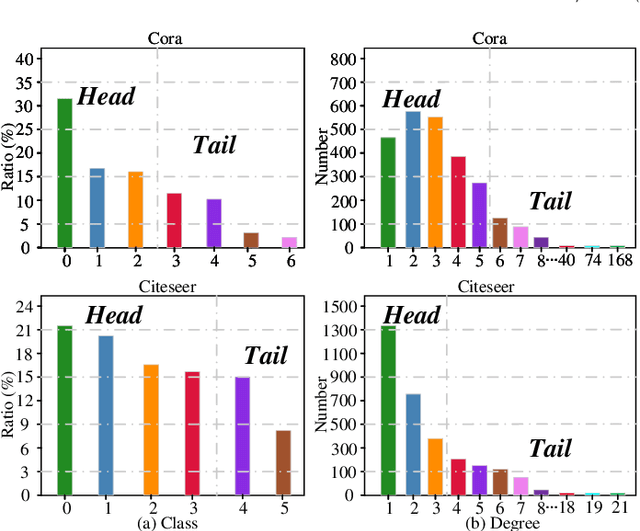

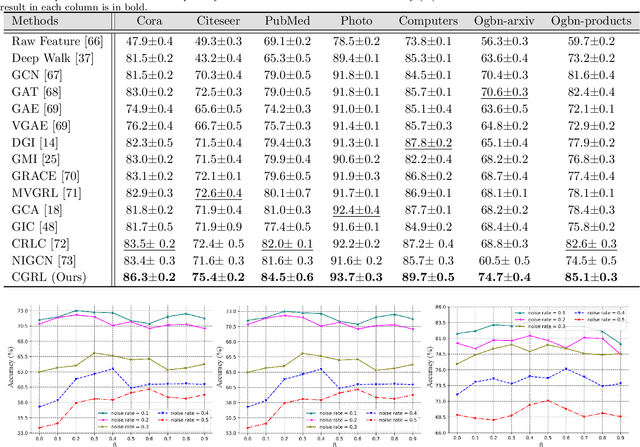

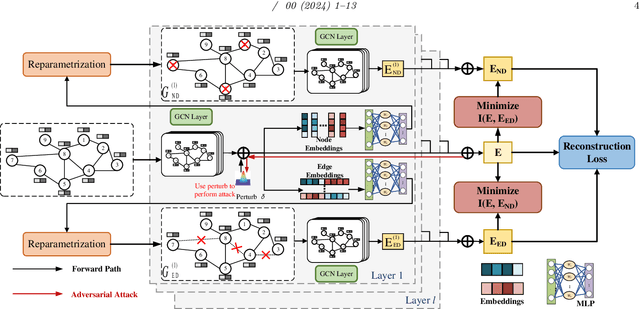

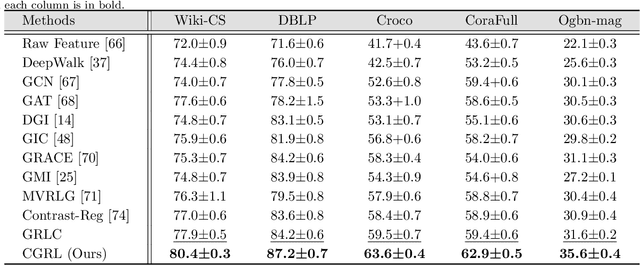

Abstract:Graph Neural Networks (GNNs) have received extensive research attention due to their powerful information aggregation capabilities. Despite the success of GNNs, most of them suffer from the popularity bias issue in a graph caused by a small number of popular categories. Additionally, real graph datasets always contain incorrect node labels, which hinders GNNs from learning effective node representations. Graph contrastive learning (GCL) has been shown to be effective in solving the above problems for node classification tasks. Most existing GCL methods are implemented by randomly removing edges and nodes to create multiple contrasting views, and then maximizing the mutual information (MI) between these contrasting views to improve the node feature representation. However, maximizing the mutual information between multiple contrasting views may lead the model to learn some redundant information irrelevant to the node classification task. To tackle this issue, we propose an effective Contrastive Graph Representation Learning with Adversarial Cross-view Reconstruction and Information Bottleneck (CGRL) for node classification, which can adaptively learn to mask the nodes and edges in the graph to obtain the optimal graph structure representation. Furthermore, we innovatively introduce the information bottleneck theory into GCLs to remove redundant information in multiple contrasting views while retaining as much information as possible about node classification. Moreover, we add noise perturbations to the original views and reconstruct the augmented views by constructing adversarial views to improve the robustness of node feature representation. Extensive experiments on real-world public datasets demonstrate that our method significantly outperforms existing state-of-the-art algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge