Gilad Gressel

GAVEL: Towards rule-based safety through activation monitoring

Jan 29, 2026

Abstract:Large language models (LLMs) are increasingly paired with activation-based monitoring to detect and prevent harmful behaviors that may not be apparent at the surface-text level. However, existing activation safety approaches, trained on broad misuse datasets, struggle with poor precision, limited flexibility, and lack of interpretability. This paper introduces a new paradigm: rule-based activation safety, inspired by rule-sharing practices in cybersecurity. We propose modeling activations as cognitive elements (CEs), fine-grained, interpretable factors such as "making a threat" and "payment processing", that can be composed to capture nuanced, domain-specific behaviors with higher precision. Building on this representation, we present a practical framework that defines predicate rules over CEs and detects violations in real time. This enables practitioners to configure and update safeguards without retraining models or detectors, while supporting transparency and auditability. Our results show that compositional rule-based activation safety improves precision, supports domain customization, and lays the groundwork for scalable, interpretable, and auditable AI governance. We will release GAVEL as an open-source framework and provide an accompanying automated rule creation tool.
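
The idea of predicate rules over CEs can be illustrated with a small sketch. This is not GAVEL's actual API, which the abstract does not describe: the `Rule` class, the `violated` helper, the 0.5 threshold, the rule name `threat_plus_payment`, and the score values are all hypothetical. The sketch assumes some upstream probe has already produced a score in [0, 1] for each CE.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Assumption: an activation probe yields one score in [0, 1] per cognitive element.
CEScores = Dict[str, float]


@dataclass(frozen=True)
class Rule:
    """A named boolean predicate over the set of active CEs (hypothetical type)."""
    name: str
    predicate: Callable[[Dict[str, bool]], bool]


def active_ces(scores: CEScores, threshold: float = 0.5) -> Dict[str, bool]:
    # Threshold each CE score into an on/off flag.
    return {ce: score >= threshold for ce, score in scores.items()}


def violated(rules: List[Rule], scores: CEScores, threshold: float = 0.5) -> List[str]:
    # Return the names of all rules whose predicate holds for the active CEs.
    active = active_ces(scores, threshold)
    return [rule.name for rule in rules if rule.predicate(active)]


# Illustrative rule: fires only when both CEs named in the abstract are active.
RULES = [
    Rule(
        "threat_plus_payment",
        lambda ce: ce.get("making a threat", False)
        and ce.get("payment processing", False),
    ),
]

if __name__ == "__main__":
    scores = {"making a threat": 0.91, "payment processing": 0.77}
    print(violated(RULES, scores))
```

Because the rules are plain data, adding or changing a rule in this sketch means editing the `RULES` list; nothing is retrained.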

Love, Lies, and Language Models: Investigating AI's Role in Romance-Baiting Scams

Dec 22, 2025

Abstract:Romance-baiting scams have become a major source of financial and emotional harm worldwide. These operations are run by organized crime syndicates that traffic thousands of people into forced labor, requiring them to build emotional intimacy with victims over weeks of text conversations before pressuring them into fraudulent cryptocurrency investments. Because the scams are inherently text-based, they raise urgent questions about the role of Large Language Models (LLMs) in both current and future automation. We investigate this intersection by interviewing 145 insiders and 5 scam victims, performing a blinded long-term conversation study comparing LLM scam agents to human operators, and executing an evaluation of commercial safety filters. Our findings show that LLMs are already widely deployed within scam organizations, with 87% of scam labor consisting of systematized conversational tasks readily susceptible to automation. In a week-long study, an LLM agent not only elicited greater trust from study participants (p=0.007) but also achieved higher compliance with requests than human operators (46% vs. 18%). Meanwhile, popular safety filters detected 0.0% of romance-baiting dialogues. Together, these results suggest that romance-baiting scams may be amenable to full-scale LLM automation, while existing defenses remain inadequate to prevent their expansion.

Are You Human? An Adversarial Benchmark to Expose LLMs

Oct 12, 2024

Abstract:Large Language Models (LLMs) have demonstrated an alarming ability to impersonate humans in conversation, raising concerns about their potential misuse in scams and deception. Humans have a right to know if they are conversing with an LLM. We evaluate text-based prompts designed as challenges to expose LLM impostors in real time. To this end, we compile and release an open-source benchmark dataset that includes 'implicit challenges' that exploit an LLM's instruction-following mechanism to cause role deviation, and 'explicit challenges' that test an LLM's ability to perform simple tasks typically easy for humans but difficult for LLMs. Our evaluation of 9 leading models from the LMSYS leaderboard revealed that explicit challenges successfully detected LLMs in 78.4% of cases, while implicit challenges were effective in 22.9% of instances. User studies validate the real-world applicability of our methods, with humans outperforming LLMs on explicit challenges (78% vs 22% success rate). Our framework unexpectedly revealed that many study participants were using LLMs to complete tasks, demonstrating its effectiveness in detecting both AI impostors and human misuse of AI tools. This work addresses the critical need for reliable, real-time LLM detection methods in high-stakes conversations.

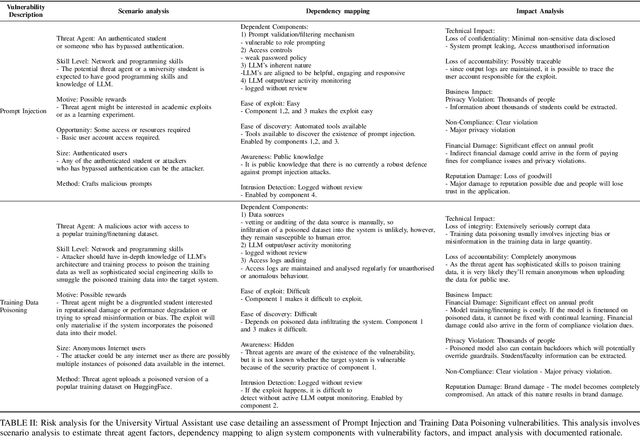

Mapping LLM Security Landscapes: A Comprehensive Stakeholder Risk Assessment Proposal

Mar 20, 2024

Abstract:The rapid integration of Large Language Models (LLMs) across diverse sectors has marked a transformative era, showcasing remarkable capabilities in text generation and problem-solving tasks. However, this technological advancement is accompanied by significant risks and vulnerabilities. Despite ongoing security enhancements, attackers persistently exploit these weaknesses, casting doubt on the overall trustworthiness of LLMs. Compounding the issue, organisations are deploying LLM-integrated systems without understanding the severity of potential consequences. Existing studies by OWASP and MITRE offer a general overview of threats and vulnerabilities but lack a method for directly and succinctly analysing the risks for security practitioners, developers, and key decision-makers who are working with this novel technology. To address this gap, we propose a risk assessment process using tools like the OWASP risk rating methodology, which is used for traditional systems. We conduct scenario analysis to identify potential threat agents and map the dependent system components against vulnerability factors. Through this analysis, we assess the likelihood of a cyberattack. Subsequently, we conduct a thorough impact analysis to derive a comprehensive threat matrix. We also map threats against three key stakeholder groups: developers engaged in model fine-tuning, application developers utilizing third-party APIs, and end users. The proposed threat matrix provides a holistic evaluation of LLM-related risks, enabling stakeholders to make informed decisions for effective mitigation strategies. Our outlined process serves as an actionable and comprehensive tool for security practitioners, offering insights for resource management and enhancing the overall system security.
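
For readers unfamiliar with the OWASP risk rating methodology mentioned above, the following sketch shows its general shape: factor scores on a 0 to 9 scale are averaged into a likelihood and an impact, each is bucketed into LOW, MEDIUM, or HIGH, and the two levels are combined through a severity matrix. The factor values in the example are made up for illustration and are not taken from the paper; the function names are hypothetical.

```python
from statistics import mean
from typing import Sequence


def level(score: float) -> str:
    """Bucket a 0-9 score into the OWASP likelihood/impact levels."""
    if score < 3:
        return "LOW"
    if score < 6:
        return "MEDIUM"
    return "HIGH"


# Overall severity, keyed by (likelihood level, impact level).
SEVERITY = {
    ("LOW", "LOW"): "Note",
    ("MEDIUM", "LOW"): "Low",
    ("HIGH", "LOW"): "Medium",
    ("LOW", "MEDIUM"): "Low",
    ("MEDIUM", "MEDIUM"): "Medium",
    ("HIGH", "MEDIUM"): "High",
    ("LOW", "HIGH"): "Medium",
    ("MEDIUM", "HIGH"): "High",
    ("HIGH", "HIGH"): "Critical",
}


def risk_severity(likelihood_factors: Sequence[float],
                  impact_factors: Sequence[float]) -> str:
    # Average each group of factor scores, bucket them, then look up severity.
    likelihood = level(mean(likelihood_factors))
    impact = level(mean(impact_factors))
    return SEVERITY[(likelihood, impact)]


if __name__ == "__main__":
    # Illustrative scores only (threat-agent/vulnerability vs. impact factors).
    print(risk_severity([6, 7, 5, 8], [4, 5, 3, 4]))
```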

Automatic Endoscopic Ultrasound Station Recognition with Limited Data

Sep 22, 2023

Abstract:Pancreatic cancer is a lethal form of cancer that significantly contributes to cancer-related deaths worldwide. Early detection is essential to improve patient prognosis and survival rates. Despite advances in medical imaging techniques, pancreatic cancer remains a challenging disease to detect. Endoscopic ultrasound (EUS) is the most effective diagnostic tool for detecting pancreatic cancer. However, it requires expert interpretation of complex ultrasound images to complete a reliable patient scan. To obtain complete imaging of the pancreas, practitioners must learn to guide the endoscope into multiple "EUS stations" (anatomical locations), which provide different views of the pancreas. This is a difficult skill to learn, involving over 225 proctored procedures with the support of an experienced doctor. We build an AI-assisted tool that utilizes deep learning techniques to identify these stations of the stomach in real time during EUS procedures. This computer-assisted diagnostic (CAD) will help train doctors more efficiently. Historically, the challenge faced in developing such a tool has been the amount of retrospective labeling required by trained clinicians. To solve this, we developed an open-source user-friendly labeling web app that streamlines the process of annotating stations during the EUS procedure with minimal effort from the clinicians. Our research shows that employing only 43 procedures with no hyperparameter fine-tuning obtained a balanced accuracy of 90%, comparable to the current state of the art. In addition, we employ Grad-CAM, a visualization technology that provides clinicians with interpretable and explainable visualizations.

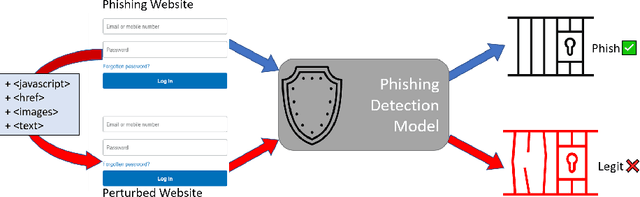

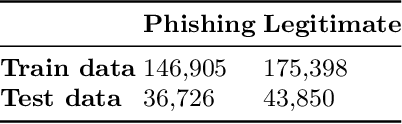

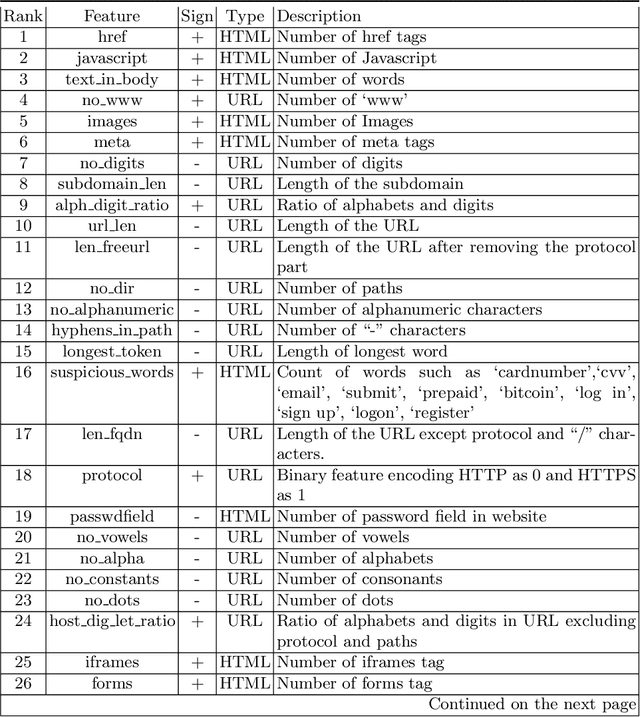

Feature Importance Guided Attack: A Model Agnostic Adversarial Attack

Jun 28, 2021

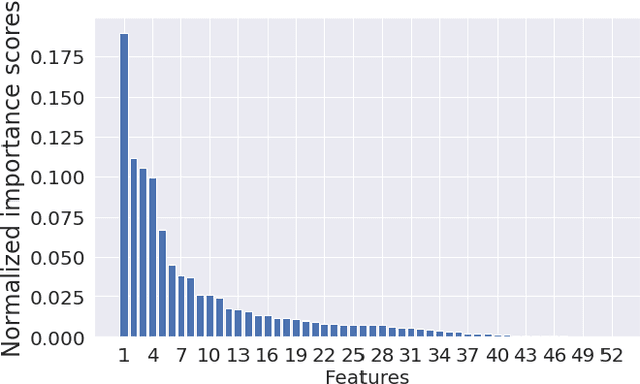

Abstract:Machine learning models are susceptible to adversarial attacks that dramatically reduce their performance. Reliable defenses against these attacks remain an unsolved challenge. In this work, we present a novel evasion attack: the 'Feature Importance Guided Attack' (FIGA), which generates adversarial evasion samples. FIGA is model agnostic: it assumes no prior knowledge of the defending model's learning algorithm, but does assume knowledge of the feature representation. FIGA leverages feature importance rankings; it perturbs the most important features of the input in the direction of the target class we wish to mimic. We demonstrate FIGA against eight phishing detection models. We keep the attack realistic by perturbing phishing website features that an adversary would have control over. Using FIGA, we are able to cause a reduction in the F1-score of a phishing detection model from 0.96 to 0.41 on average. Finally, we implement adversarial training as a defense against FIGA and show that while it is sometimes effective, it can be evaded by changing the parameters of FIGA.
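
The core step described in the abstract, perturbing the most important features toward the target class, can be sketched roughly as below. This is an illustration and not the authors' implementation: the function name `figa_like_perturb`, the additive step size `epsilon`, the use of per-class feature means to choose the direction, and the toy numbers are all assumptions. The importance ranking is taken as given; the abstract does not say how it is computed.

```python
from typing import List, Sequence


def column_means(rows: Sequence[Sequence[float]]) -> List[float]:
    # Mean of each feature (column) across the given samples.
    return [sum(col) / len(rows) for col in zip(*rows)]


def figa_like_perturb(
    x: Sequence[float],
    importance: Sequence[float],
    source_rows: Sequence[Sequence[float]],
    target_rows: Sequence[Sequence[float]],
    n_features: int = 2,
    epsilon: float = 0.1,
) -> List[float]:
    """Shift the n most important features of x toward the target class.

    Assumption: the direction for each feature is the sign of
    (target-class mean - source-class mean).
    """
    src = column_means(source_rows)
    tgt = column_means(target_rows)
    # Indices of the n highest-ranked features.
    top = sorted(range(len(x)), key=lambda i: importance[i], reverse=True)[:n_features]
    adv = list(x)
    for i in top:
        direction = (tgt[i] > src[i]) - (tgt[i] < src[i])  # -1, 0, or +1
        adv[i] += epsilon * direction
    return adv


if __name__ == "__main__":
    phishing = [[0.9, 0.2, 0.5], [0.7, 0.4, 0.5]]  # toy source-class samples
    benign = [[0.1, 0.8, 0.5], [0.3, 0.6, 0.5]]    # toy target-class samples
    importance = [0.6, 0.3, 0.1]                   # assumed ranking scores
    print(figa_like_perturb([0.8, 0.3, 0.5], importance, phishing, benign))
```

In this sketch, `n_features` and `epsilon` play the role of the attack parameters that the abstract says can be changed to evade adversarial training.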
