Gerard Bailly

Cued Speech Generation Leveraging a Pre-trained Audiovisual Text-to-Speech Model

Jan 08, 2025

Abstract:This paper presents a novel approach for the automatic generation of Cued Speech (ACSG), a visual communication system used by people with hearing impairment to better elicit the spoken language. We explore transfer learning strategies by leveraging a pre-trained audiovisual autoregressive text-to-speech model (AVTacotron2). This model is reprogrammed to infer Cued Speech (CS) hand and lip movements from text input. Experiments are conducted on two publicly available datasets, including one recorded specifically for this study. Performance is assessed using an automatic CS recognition system. With a decoding accuracy at the phonetic level reaching approximately 77%, the results demonstrate the effectiveness of our approach.

Style Transfer and Extraction for the Handwritten Letters Using Deep Learning

Dec 10, 2018

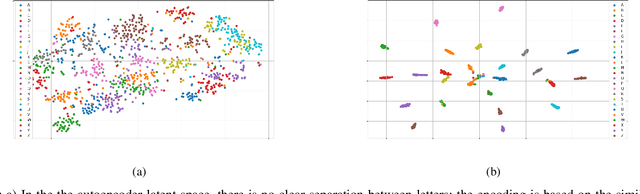

Abstract:How can we learn, transfer and extract handwriting styles using deep neural networks? This paper explores these questions using a deep conditioned autoencoder on the IRON-OFF handwriting data-set. We perform three experiments that systematically explore the quality of our style extraction procedure. First, We compare our model to handwriting benchmarks using multidimensional performance metrics. Second, we explore the quality of style transfer, i.e. how the model performs on new, unseen writers. In both experiments, we improve the metrics of state of the art methods by a large margin. Lastly, we analyze the latent space of our model, and we see that it separates consistently writing styles.

Handwriting styles: benchmarks and evaluation metrics

Sep 04, 2018

Abstract:Evaluating the style of handwriting generation is a challenging problem, since it is not well defined. It is a key component in order to develop in developing systems with more personalized experiences with humans. In this paper, we propose baseline benchmarks, in order to set anchors to estimate the relative quality of different handwriting style methods. This will be done using deep learning techniques, which have shown remarkable results in different machine learning tasks, learning classification, regression, and most relevant to our work, generating temporal sequences. We discuss the challenges associated with evaluating our methods, which is related to evaluation of generative models in general. We then propose evaluation metrics, which we find relevant to this problem, and we discuss how we evaluate the evaluation metrics. In this study, we use IRON-OFF dataset. To the best of our knowledge, there is no work done before in generating handwriting (either in terms of methodology or the performance metrics), our in exploring styles using this dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge