George T. Hall

Fast Perturbative Algorithm Configurators

Jul 07, 2020

Abstract: Recent work has shown that the ParamRLS and ParamILS algorithm configurators can tune some simple randomised search heuristics for standard benchmark functions in expected time linear in the size of the parameter space. In this paper we prove a linear lower bound on the expected time to optimise any parameter tuning problem for ParamRLS and ParamILS, as well as for larger classes of algorithm configurators. We propose a harmonic mutation operator for perturbative algorithm configurators that provably tunes single-parameter algorithms in polylogarithmic time for unimodal and approximately unimodal (i.e., non-smooth, rugged with an underlying gradient towards the optimum) parameter spaces. It is suitable as a general-purpose operator since, even on worst-case (e.g., deceptive) landscapes, it is slower than the default operators used by ParamRLS and ParamILS by at most a logarithmic factor. An experimental analysis confirms the superiority of the approach in practice for a number of configuration scenarios, including ones involving more than one parameter.
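To make the idea of a harmonic mutation operator concrete, the following is a minimal sketch, not the paper's implementation. It assumes a single integer parameter in $\{1, \dots, \phi\}$, a step size $d$ drawn with probability proportional to $1/d$, a uniformly random direction, and a deterministic cost function; the names `harmonic_step`, `harmonic_mutate`, `tune` and `phi` are hypothetical.

```python
import random


def harmonic_step(phi, rng=random):
    """Sample a step size d in {1, ..., phi - 1} with P(d) proportional to 1/d.

    Assumption: this is one plausible reading of a 'harmonic' operator.
    Requires phi >= 2.
    """
    sizes = range(1, phi)
    weights = [1.0 / d for d in sizes]
    return rng.choices(sizes, weights=weights, k=1)[0]


def harmonic_mutate(theta, phi, rng=random):
    """Move theta by a harmonic step in a random direction.

    If the move would leave {1, ..., phi}, the current value is kept.
    """
    d = harmonic_step(phi, rng)
    candidate = theta + rng.choice((-1, 1)) * d
    return candidate if 1 <= candidate <= phi else theta


def tune(cost, phi, steps, seed=0):
    """Hypothetical perturbative tuner: accept a candidate if it is not worse."""
    rng = random.Random(seed)
    theta = rng.randint(1, phi)
    for _ in range(steps):
        candidate = harmonic_mutate(theta, phi, rng)
        if cost(candidate) <= cost(theta):
            theta = candidate
    return theta


if __name__ == "__main__":
    # Unimodal toy landscape with its optimum at 37 (illustrative only).
    print(tune(lambda value: abs(value - 37), phi=100, steps=2000))
```

Large steps are rare but possible, so the tuner can cross most of the parameter space quickly, while the frequent small steps let it settle on the optimum once it is close. A real configurator would compare configurations through noisy runs of the target algorithm rather than an exact cost function.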

Analysis of the Performance of Algorithm Configurators for Search Heuristics with Global Mutation Operators

Apr 09, 2020

Abstract: Recently it has been proved that a simple algorithm configurator called ParamRLS can efficiently identify the optimal neighbourhood size to be used by stochastic local search to optimise two standard benchmark problem classes. In this paper we analyse the performance of algorithm configurators for tuning the more sophisticated global mutation operator used in standard evolutionary algorithms, which flips each of the $n$ bits independently with probability $\chi/n$, where the best value of $\chi$ has to be identified. For two standard benchmark problem classes, Ridge and LeadingOnes, we compare configurators that judge configurations by the best fitness found within the cutoff time $\kappa$ with configurators that judge them by the actual optimisation time. We rigorously prove that all algorithm configurators that use optimisation time as the performance metric require cutoff times that are at least as large as the expected optimisation time to identify the optimal configuration. Matters are considerably different if the fitness metric is used. To show this, we prove that the simple ParamRLS-F configurator can identify the optimal mutation rates even when using cutoff times that are considerably smaller than the expected optimisation time of the best parameter value for both problem classes.
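The global mutation operator and the two performance metrics described above can be sketched as follows. This is an illustration under assumptions: it uses a (1+1) EA as the target algorithm, which the abstract does not name explicitly, and the function names are hypothetical.

```python
import random


def leading_ones(x):
    """LeadingOnes: number of consecutive 1-bits at the start of x."""
    count = 0
    for bit in x:
        if bit != 1:
            break
        count += 1
    return count


def global_mutation(x, chi, rng):
    """Flip each of the n bits independently with probability chi / n."""
    p = chi / len(x)
    return [1 - bit if rng.random() < p else bit for bit in x]


def one_plus_one_ea(n, chi, kappa, seed=0):
    """Run a (1+1) EA on LeadingOnes for at most kappa iterations.

    Returns (best fitness at the cutoff, iteration at which the optimum
    was first reached, or None if it was not reached within kappa).
    """
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(n)]
    fx = leading_ones(x)
    if fx == n:
        return fx, 0
    for t in range(1, kappa + 1):
        y = global_mutation(x, chi, rng)
        fy = leading_ones(y)
        if fy >= fx:  # accept offspring that are not worse
            x, fx = y, fy
        if fx == n:
            return fx, t
    return fx, None


if __name__ == "__main__":
    # Short cutoff: the fitness value is still informative,
    # while the optimisation time is usually undefined (None).
    print(one_plus_one_ea(n=50, chi=1.0, kappa=200))
```

With a short cutoff the second return value is typically `None` for every $\chi$, so a time-based comparison cannot separate configurations, whereas the fitness reached by the cutoff still can. This is the intuition behind the contrast drawn in the abstract, not a substitute for its proofs.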

On the Impact of the Cutoff Time on the Performance of Algorithm Configurators

May 21, 2019

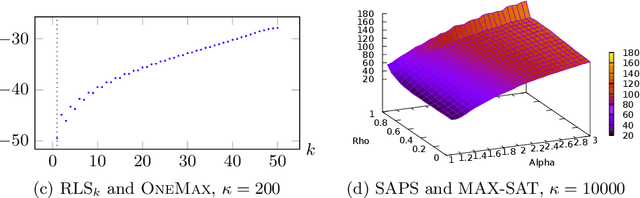

Abstract: Algorithm configurators are automated methods to optimise the parameters of an algorithm for a class of problems. We evaluate the performance of a simple random local search configurator (ParamRLS) for tuning the neighbourhood size $k$ of the RLS$_k$ algorithm. We measure performance as the expected number of configuration evaluations required to identify the optimal value for the parameter. We analyse the impact of the cutoff time $\kappa$ (the time spent evaluating a configuration for a problem instance) on the expected number of configuration evaluations required to find the optimal parameter value, where we compare configurations using either best found fitness values (ParamRLS-F) or optimisation times (ParamRLS-T). We consider tuning RLS$_k$ for a variant of the Ridge function class (Ridge*), where the performance of each parameter value does not change during the run, and for the OneMax function class, where longer runs favour smaller $k$. We rigorously prove that ParamRLS-F efficiently tunes RLS$_k$ for Ridge* for any $\kappa$, while ParamRLS-T requires at least quadratic $\kappa$. For OneMax, ParamRLS-F identifies $k=1$ as optimal with linear $\kappa$, while ParamRLS-T requires a $\kappa$ of at least $\Omega(n\log n)$. For smaller $\kappa$, ParamRLS-F identifies that $k>1$ performs better, while ParamRLS-T returns $k$ chosen uniformly at random.
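The difference between the two comparison rules can be illustrated with a small sketch. It assumes that RLS$_k$ flips exactly $k$ distinct bits chosen uniformly at random and accepts offspring that are not worse, and it simplifies both metrics (no tie-breaking rules, a single run per configuration). The function names and the `metric` argument are hypothetical, not taken from the paper.

```python
import random


def rls_k(n, k, kappa, rng):
    """Run RLS_k on OneMax for at most kappa iterations.

    Returns (fitness at the cutoff, iteration at which the optimum was
    first reached, or None if it was not reached within kappa).
    """
    x = [rng.randint(0, 1) for _ in range(n)]
    fx = sum(x)  # OneMax: number of 1-bits
    if fx == n:
        return fx, 0
    for t in range(1, kappa + 1):
        y = x[:]
        for i in rng.sample(range(n), k):  # flip exactly k distinct bits
            y[i] = 1 - y[i]
        fy = sum(y)
        if fy >= fx:
            x, fx = y, fy
        if fx == n:
            return fx, t
    return fx, None


def compare(k_a, k_b, n, kappa, metric, rng):
    """Return the winning neighbourhood size, or None on a tie.

    metric == "F": higher fitness at the cutoff wins (ParamRLS-F style).
    metric == "T": smaller optimisation time wins; runs that do not
    finish within kappa are all treated alike (ParamRLS-T style).
    """
    fit_a, time_a = rls_k(n, k_a, kappa, rng)
    fit_b, time_b = rls_k(n, k_b, kappa, rng)
    if metric == "F":
        score_a, score_b = fit_a, fit_b
    else:
        score_a = -(time_a if time_a is not None else kappa + 1)
        score_b = -(time_b if time_b is not None else kappa + 1)
    if score_a == score_b:
        return None
    return k_a if score_a > score_b else k_b


if __name__ == "__main__":
    rng = random.Random(1)
    print(compare(1, 3, n=50, kappa=30, metric="F", rng=rng))
    print(compare(1, 3, n=50, kappa=30, metric="T", rng=rng))
```

When neither run reaches the optimum within $\kappa$, the time-based rule yields a tie and carries no information about which $k$ is better, which matches the abstract's remark that ParamRLS-T returns an essentially random $k$ for small cutoff times. The fitness-based rule still ranks the two runs.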
