George Alex Koulieris

Large-Scale Multi-Character Interaction Synthesis

May 20, 2025Abstract:Generating large-scale multi-character interactions is a challenging and important task in character animation. Multi-character interactions involve not only natural interactive motions but also characters coordinated with each other for transition. For example, a dance scenario involves characters dancing with partners and also characters coordinated to new partners based on spatial and temporal observations. We term such transitions as coordinated interactions and decompose them into interaction synthesis and transition planning. Previous methods of single-character animation do not consider interactions that are critical for multiple characters. Deep-learning-based interaction synthesis usually focuses on two characters and does not consider transition planning. Optimization-based interaction synthesis relies on manually designing objective functions that may not generalize well. While crowd simulation involves more characters, their interactions are sparse and passive. We identify two challenges to multi-character interaction synthesis, including the lack of data and the planning of transitions among close and dense interactions. Existing datasets either do not have multiple characters or do not have close and dense interactions. The planning of transitions for multi-character close and dense interactions needs both spatial and temporal considerations. We propose a conditional generative pipeline comprising a coordinatable multi-character interaction space for interaction synthesis and a transition planning network for coordinations. Our experiments demonstrate the effectiveness of our proposed pipeline for multicharacter interaction synthesis and the applications facilitated by our method show the scalability and transferability.

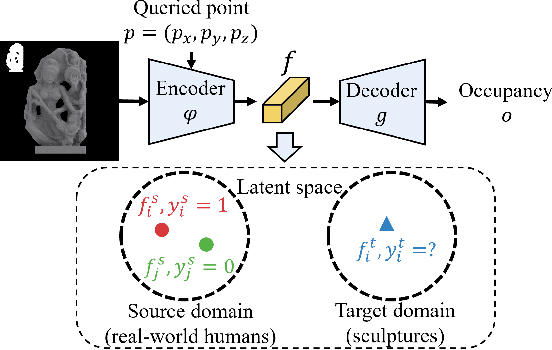

3D Reconstruction of Sculptures from Single Images via Unsupervised Domain Adaptation on Implicit Models

Oct 09, 2022

Abstract:Acquiring the virtual equivalent of exhibits, such as sculptures, in virtual reality (VR) museums, can be labour-intensive and sometimes infeasible. Deep learning based 3D reconstruction approaches allow us to recover 3D shapes from 2D observations, among which single-view-based approaches can reduce the need for human intervention and specialised equipment in acquiring 3D sculptures for VR museums. However, there exist two challenges when attempting to use the well-researched human reconstruction methods: limited data availability and domain shift. Considering sculptures are usually related to humans, we propose our unsupervised 3D domain adaptation method for adapting a single-view 3D implicit reconstruction model from the source (real-world humans) to the target (sculptures) domain. We have compared the generated shapes with other methods and conducted ablation studies as well as a user study to demonstrate the effectiveness of our adaptation method. We also deploy our results in a VR application.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge