Ge-Yang Ke

Optimizing Evaluation Metrics for Multi-Task Learning via the Alternating Direction Method of Multipliers

Oct 12, 2022

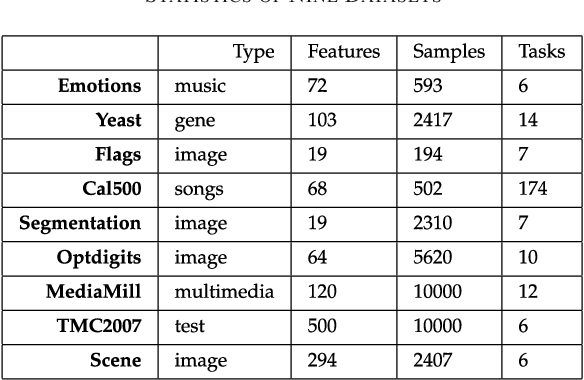

Abstract: Multi-task learning (MTL) aims to improve the generalization performance of multiple tasks by exploiting the factors they share. Various metrics (e.g., F-score, Area Under the ROC Curve) are used to evaluate the performance of MTL methods. Most existing MTL methods minimize either the misclassification error for classification or the mean squared error for regression. In this paper, we propose a method to directly optimize the evaluation metrics for a large family of MTL problems. The formulation of MTL that directly optimizes evaluation metrics combines two parts: (1) a regularizer defined on the weight matrix over all tasks, which captures the relatedness of these tasks; (2) a sum of multiple structured hinge losses, each a surrogate of some evaluation metric on one task. This formulation is challenging to optimize because both of its parts are non-smooth. To tackle this issue, we propose a novel optimization procedure based on the alternating direction method of multipliers (ADMM), in which we decompose the whole optimization problem into one sub-problem corresponding to the regularizer and another sub-problem corresponding to the structured hinge losses. For a large family of MTL problems, the first sub-problem has closed-form solutions. To solve the second sub-problem, we propose an efficient primal-dual algorithm via coordinate ascent. Extensive evaluation results demonstrate that, across a large family of MTL problems, the proposed method of directly optimizing evaluation metrics outperforms the corresponding baseline methods.
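To illustrate what a closed-form solution to the regularizer sub-problem can look like, here is a minimal sketch in Python. It assumes the regularizer is the ℓ2,1 norm (sum of row-wise Euclidean norms of the weight matrix), which is one common MTL choice; the abstract does not name a specific regularizer, so this choice is an assumption for illustration only. Under that assumption, the sub-problem of minimizing `threshold * ||W||_{2,1} + 0.5 * ||W - V||_F^2` over `W` is solved by shrinking each row of `V` toward zero. The function name `prox_l21`, the matrix `V`, and the parameter `threshold` (which would play the role of the regularization weight divided by the ADMM penalty parameter) are hypothetical names, not taken from the paper.

```python
import math
from typing import List

Matrix = List[List[float]]


def prox_l21(V: Matrix, threshold: float) -> Matrix:
    """Row-wise group soft-thresholding.

    Returns argmin_W threshold * ||W||_{2,1} + 0.5 * ||W - V||_F^2,
    assuming (for illustration) an l2,1-norm regularizer.
    """
    W: Matrix = []
    for row in V:
        norm = math.sqrt(sum(x * x for x in row))
        # Rows whose norm is at most the threshold are set to zero;
        # all other rows are scaled down by a common factor.
        scale = max(0.0, 1.0 - threshold / norm) if norm > 0.0 else 0.0
        W.append([scale * x for x in row])
    return W


# Toy input: one row with a large norm (5.0), one with a small norm (0.5).
V = [[3.0, 4.0], [0.3, 0.4]]
W = prox_l21(V, threshold=1.0)
```

With this toy input, the first row is scaled by 0.8 to roughly `[2.4, 3.2]`, while the second row, whose norm is below the threshold, is zeroed out. In an ADMM loop a step of this kind would alternate with the solver for the structured hinge losses, which is not sketched here.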