Gérard Dreyfus

Tongue contour extraction from ultrasound images based on deep neural network

May 19, 2016

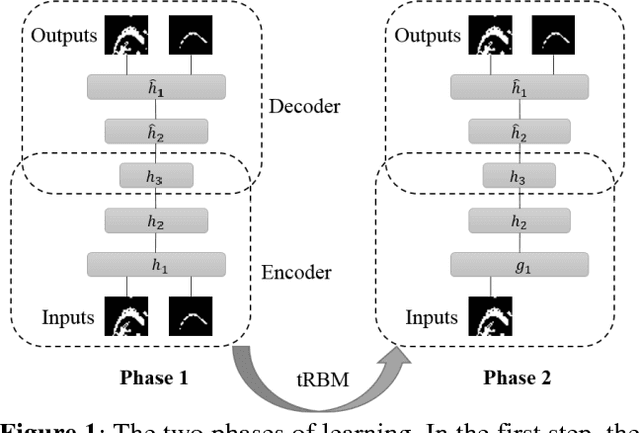

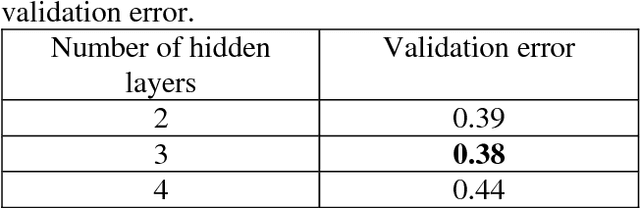

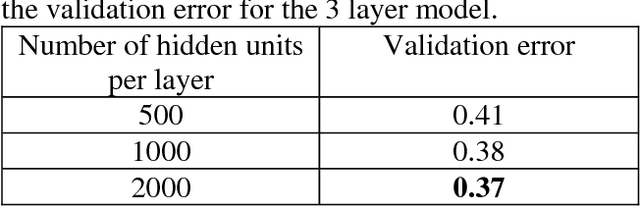

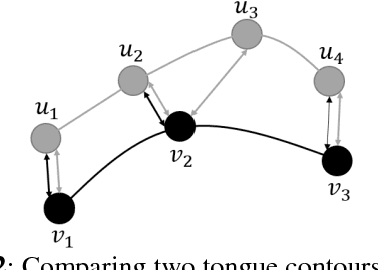

Abstract:Studying tongue motion during speech using ultrasound is a standard procedure, but automatic ultrasound image labelling remains a challenge, as standard tongue shape extraction methods typically require human intervention. This article presents a method based on deep neural networks to automatically extract tongue contour from ultrasound images on a speech dataset. We use a deep autoencoder trained to learn the relationship between an image and its related contour, so that the model is able to automatically reconstruct contours from the ultrasound image alone. In this paper, we use an automatic labelling algorithm instead of time-consuming hand-labelling during the training process, and estimate the performances of both automatic labelling and contour extraction as compared to hand-labelling. Observed results show quality scores comparable to the state of the art.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge