Fu Lele

Multi-View Clustering from the Perspective of Mutual Information

Feb 17, 2023

Abstract: Exploring the complementary information of multi-view data to improve clustering performance is a crucial issue in multi-view clustering. In this paper, we propose a novel model based on information theory, termed Informative Multi-View Clustering (IMVC), which extracts the common and view-specific information hidden in multi-view data and constructs a clustering-oriented comprehensive representation. More specifically, we concatenate the features of all views into a unified feature representation, then pass it through an encoder to retrieve the common representation across views. Simultaneously, the features of each view are sent to a separate encoder to produce a compact view-specific representation. We then constrain the mutual information between the common representation and the view-specific representations to be minimal, so that the two capture different levels of information. Further, the common representation and each view-specific representation are spliced to model the refined representation of that view, which is fed into a decoder to reconstruct the initial data by maximizing the mutual information between the two. To form a comprehensive representation, the common representation and all view-specific representations are concatenated. Furthermore, to better adapt the comprehensive representation to the clustering task, we maximize the mutual information between an instance and its k-nearest neighbors to enhance intra-cluster aggregation, thus inducing good overall separation of different clusters. Finally, we conduct extensive experiments on six benchmark datasets, and the experimental results indicate that the proposed IMVC outperforms other methods.
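The data flow described in this abstract can be illustrated with a small shape-level sketch. This is not the authors' implementation: the single-layer `tanh` encoders, the random weights, the dimensions, and the names `encode` and `build_representations` are all assumptions made for illustration, and the mutual-information objectives and the decoder are omitted entirely. It only shows how the common, view-specific, refined, and comprehensive representations relate to each other.

```python
import numpy as np

def encode(x, weight):
    """Toy encoder: one linear layer plus tanh (a stand-in for a deep network)."""
    return np.tanh(x @ weight)

def build_representations(views, common_dim=8, specific_dim=4, seed=0):
    """Hypothetical helper that mirrors the representation layout in the abstract."""
    rng = np.random.default_rng(seed)

    # Common branch: concatenate all views, then encode once.
    unified = np.concatenate(views, axis=1)
    w_common = rng.normal(scale=0.1, size=(unified.shape[1], common_dim))
    common = encode(unified, w_common)

    # View-specific branch: one encoder per view.
    specifics = []
    for x in views:
        w = rng.normal(scale=0.1, size=(x.shape[1], specific_dim))
        specifics.append(encode(x, w))

    # Refined representation of each view: common part spliced with that
    # view's specific part (this would be the decoder input).
    refined = [np.concatenate([common, s], axis=1) for s in specifics]

    # Comprehensive representation: common part plus all view-specific parts.
    comprehensive = np.concatenate([common] + specifics, axis=1)
    return common, specifics, refined, comprehensive

# Two synthetic views of the same 5 instances (10 and 6 features).
rng = np.random.default_rng(1)
views = [rng.normal(size=(5, 10)), rng.normal(size=(5, 6))]
common, specifics, refined, comprehensive = build_representations(views)
print(common.shape, refined[0].shape, comprehensive.shape)
```

With two views, the comprehensive representation has `common_dim + 2 * specific_dim` columns, while each refined representation has `common_dim + specific_dim`.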

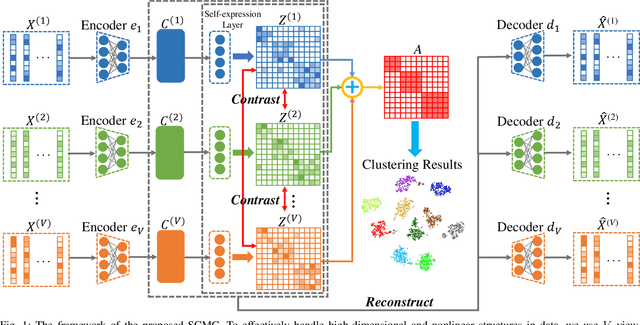

Subspace-Contrastive Multi-View Clustering

Oct 13, 2022

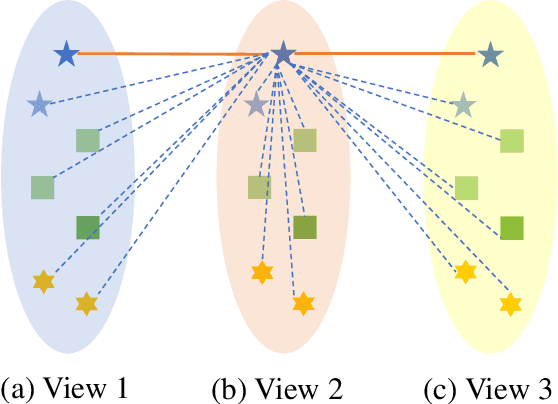

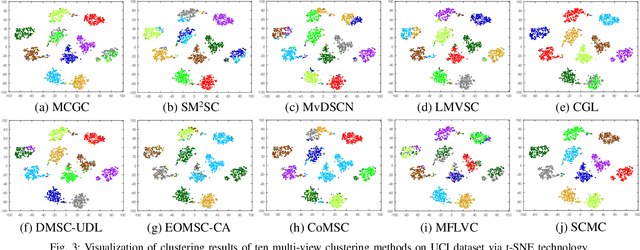

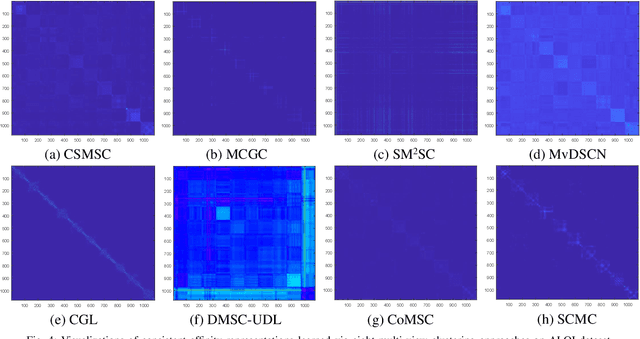

Abstract: Most multi-view clustering methods are limited by shallow models that lack a sound capability for perceiving nonlinear information, or fail to effectively exploit the complementary information hidden in different views. To tackle these issues, we propose a novel Subspace-Contrastive Multi-View Clustering (SCMC) approach. Specifically, SCMC utilizes view-specific auto-encoders to map the original multi-view data into compact features that capture its nonlinear structures. Considering the large semantic gap between data from different modalities, we employ subspace learning to unify the multi-view data into a joint semantic space; namely, the embedded compact features of each view are passed through a self-expression layer to learn that view's subspace representation. To enhance the discriminability and efficiently exploit the complementarity of the various subspace representations, we use a contrastive strategy that maximizes the similarity between positive pairs while pushing apart negative pairs. A weighted fusion scheme is then developed to learn an initial consistent affinity matrix. Furthermore, we employ graph regularization to encode the local geometric structure within the varying subspaces, further fine-tuning the affinities between instances. To demonstrate the effectiveness of the proposed model, we conduct a large number of comparative experiments on eight challenging datasets; the experimental results show that SCMC outperforms existing shallow and deep multi-view clustering methods.
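The contrastive step mentioned in this abstract can be sketched with a generic InfoNCE-style loss. The abstract does not give the exact loss, so everything here is an assumption: positive pairs are taken to be the same instance seen in two views, negatives are all other instances, similarity is cosine similarity, and the function name `cross_view_contrastive_loss` and the `temperature` parameter are hypothetical.

```python
import numpy as np

def cross_view_contrastive_loss(z_a, z_b, temperature=0.5):
    """InfoNCE-style loss (assumed form, not taken from the paper).

    Row i of z_a and row i of z_b form a positive pair; every other
    row of z_b acts as a negative for row i of z_a.
    """
    # L2-normalize rows so the dot product is a cosine similarity.
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)

    logits = (z_a @ z_b.T) / temperature
    # Subtract the row-wise max for a numerically stable log-softmax.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))

    # Positive pairs sit on the diagonal.
    return -np.mean(np.diag(log_prob))

# Synthetic representations of 6 instances in two views.
rng = np.random.default_rng(0)
z_view1 = rng.normal(size=(6, 16))
z_view2 = z_view1 + 0.05 * rng.normal(size=(6, 16))  # aligned second view

aligned = cross_view_contrastive_loss(z_view1, z_view2)
misaligned = cross_view_contrastive_loss(z_view1, z_view2[::-1])
print(aligned < misaligned)
```

Minimizing a loss of this kind raises the similarity of matching instances across views relative to non-matching ones, which is the behavior the abstract describes for positive and negative pairs.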
