Frederick B. Mancoff

Neuromorphic Hebbian learning with magnetic tunnel junction synapses

Aug 21, 2023

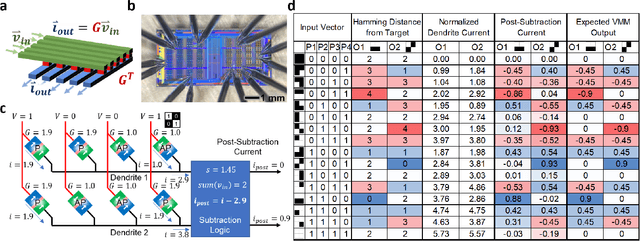

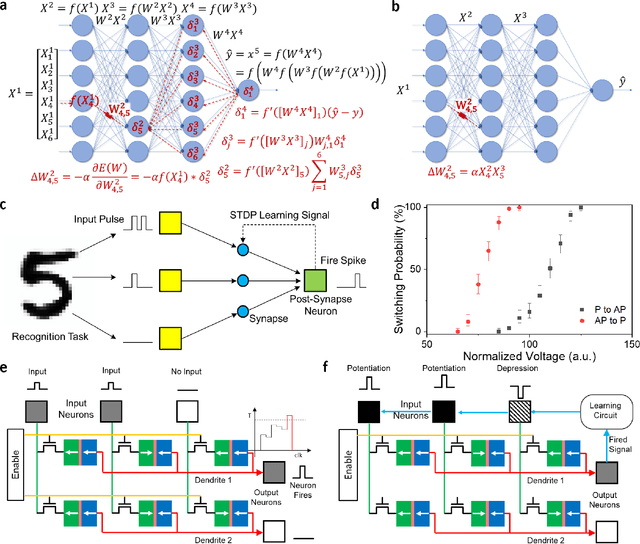

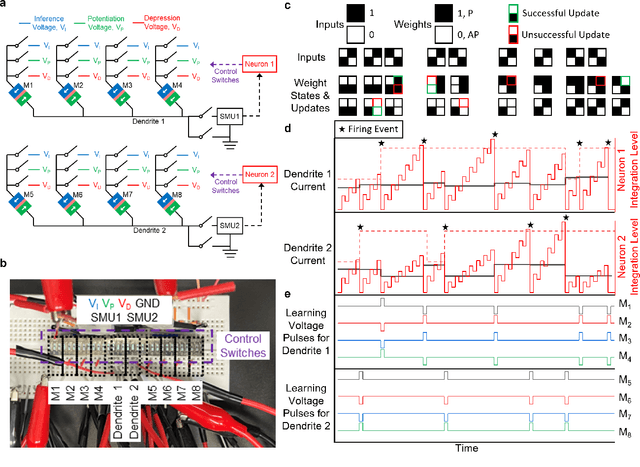

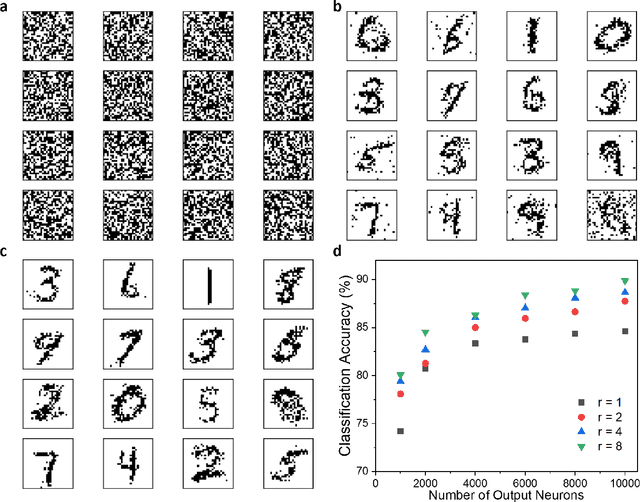

Abstract:Neuromorphic computing aims to mimic both the function and structure of biological neural networks to provide artificial intelligence with extreme efficiency. Conventional approaches store synaptic weights in non-volatile memory devices with analog resistance states, permitting in-memory computation of neural network operations while avoiding the costs associated with transferring synaptic weights from a memory array. However, the use of analog resistance states for storing weights in neuromorphic systems is impeded by stochastic writing, weights drifting over time through stochastic processes, and limited endurance that reduces the precision of synapse weights. Here we propose and experimentally demonstrate neuromorphic networks that provide high-accuracy inference thanks to the binary resistance states of magnetic tunnel junctions (MTJs), while leveraging the analog nature of their stochastic spin-transfer torque (STT) switching for unsupervised Hebbian learning. We performed the first experimental demonstration of a neuromorphic network directly implemented with MTJ synapses, for both inference and spike-timing-dependent plasticity learning. We also demonstrated through simulation that the proposed system for unsupervised Hebbian learning with stochastic STT-MTJ synapses can achieve competitive accuracies for MNIST handwritten digit recognition. By appropriately applying neuromorphic principles through hardware-aware design, the proposed STT-MTJ neuromorphic learning networks provide a pathway toward artificial intelligence hardware that learns autonomously with extreme efficiency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge