Francisco de Assis Boldt

Towards a Universal Vibration Analysis Dataset: A Framework for Transfer Learning in Predictive Maintenance and Structural Health Monitoring

Apr 15, 2025Abstract:ImageNet has become a reputable resource for transfer learning, allowing the development of efficient ML models with reduced training time and data requirements. However, vibration analysis in predictive maintenance, structural health monitoring, and fault diagnosis, lacks a comparable large-scale, annotated dataset to facilitate similar advancements. To address this, a dataset framework is proposed that begins with bearing vibration data as an initial step towards creating a universal dataset for vibration-based spectrogram analysis for all machinery. The initial framework includes a collection of bearing vibration signals from various publicly available datasets. To demonstrate the advantages of this framework, experiments were conducted using a deep learning architecture, showing improvements in model performance when pre-trained on bearing vibration data and fine-tuned on a smaller, domain-specific dataset. These findings highlight the potential to parallel the success of ImageNet in visual computing but for vibration analysis. For future work, this research will include a broader range of vibration signals from multiple types of machinery, emphasizing spectrogram-based representations of the data. Each sample will be labeled according to machinery type, operational status, and the presence or type of faults, ensuring its utility for supervised and unsupervised learning tasks. Additionally, a framework for data preprocessing, feature extraction, and model training specific to vibration data will be developed. This framework will standardize methodologies across the research community, allowing for collaboration and accelerating progress in predictive maintenance, structural health monitoring, and related fields. By mirroring the success of ImageNet in visual computing, this dataset has the potential to improve the development of intelligent systems in industrial applications.

Enhancing Brazilian Sign Language Recognition through Skeleton Image Representation

Apr 29, 2024

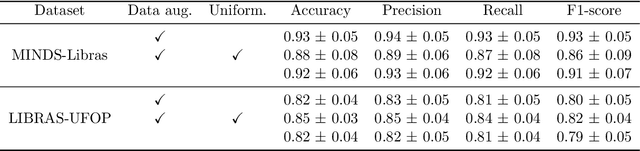

Abstract:Effective communication is paramount for the inclusion of deaf individuals in society. However, persistent communication barriers due to limited Sign Language (SL) knowledge hinder their full participation. In this context, Sign Language Recognition (SLR) systems have been developed to improve communication between signing and non-signing individuals. In particular, there is the problem of recognizing isolated signs (Isolated Sign Language Recognition, ISLR) of great relevance in the development of vision-based SL search engines, learning tools, and translation systems. This work proposes an ISLR approach where body, hands, and facial landmarks are extracted throughout time and encoded as 2-D images. These images are processed by a convolutional neural network, which maps the visual-temporal information into a sign label. Experimental results demonstrate that our method surpassed the state-of-the-art in terms of performance metrics on two widely recognized datasets in Brazilian Sign Language (LIBRAS), the primary focus of this study. In addition to being more accurate, our method is more time-efficient and easier to train due to its reliance on a simpler network architecture and solely RGB data as input.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge