Francisco J. Pulgar

AEkNN: An AutoEncoder kNN-based classifier with built-in dimensionality reduction

Mar 09, 2018

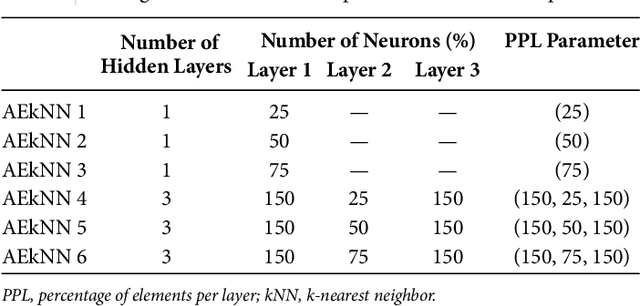

Abstract: High dimensionality, i.e. data having a large number of variables, tends to be a challenge for most machine learning tasks, including classification. A classifier usually builds a model representing how a set of inputs explains the outputs. The larger the set of inputs and/or outputs, the more complex that model becomes. There is a family of classification algorithms, known as lazy learning methods, which do not build a model. One of the best-known members of this family is the kNN algorithm. Its strategy relies on searching for a set of nearest neighbors, using the input variables as position vectors and computing the distances among them. These distances lose significance in high-dimensional spaces. Therefore kNN, like many other classifiers, tends to perform worse as the number of input variables grows. In this work AEkNN, a new kNN-based algorithm with built-in dimensionality reduction, is presented. Aiming to obtain a new representation of the data, with lower dimensionality but more informative features, AEkNN internally uses autoencoders. The distances computed from these new feature vectors should be more significant, thus providing a way to choose better neighbors. An experimental evaluation of the new proposal is conducted, analyzing several configurations and comparing them against the classical kNN algorithm. The conclusions obtained show that AEkNN offers better results in both predictive and runtime performance.
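The claim that distances lose significance in high-dimensional spaces can be illustrated with a small experiment. The following is a minimal sketch, not taken from the paper: it assumes points drawn uniformly at random from the unit hypercube, and the function name `relative_contrast` and its parameters are hypothetical. It measures how far the farthest point is from a query relative to the nearest one; as this ratio shrinks, the "nearest" neighbors become hard to tell apart from any other point.

```python
import math
import random


def relative_contrast(dim, n_points=200, seed=0):
    """Return (d_max - d_min) / d_min for Euclidean distances from one
    random query point to n_points random points in [0, 1]^dim.

    Assumption for illustration only: data is uniform random, which is
    not the data used in the paper.
    """
    rng = random.Random(seed)  # local generator, so results are repeatable
    query = [rng.random() for _ in range(dim)]
    distances = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        distances.append(math.dist(query, point))
    d_min, d_max = min(distances), max(distances)
    return (d_max - d_min) / d_min


if __name__ == "__main__":
    # The contrast is expected to drop sharply as the dimension grows.
    for dim in (2, 10, 100, 1000):
        print(f"dim={dim:5d}  relative contrast={relative_contrast(dim):.3f}")
```

Under these assumptions the contrast in two dimensions is large, while in a thousand dimensions the nearest and farthest points lie at almost the same distance. This is the effect that motivates reducing dimensionality before the neighbor search.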
