Felix Morales

Traditional and accelerated gradient descent for neural architecture search

Jul 02, 2020

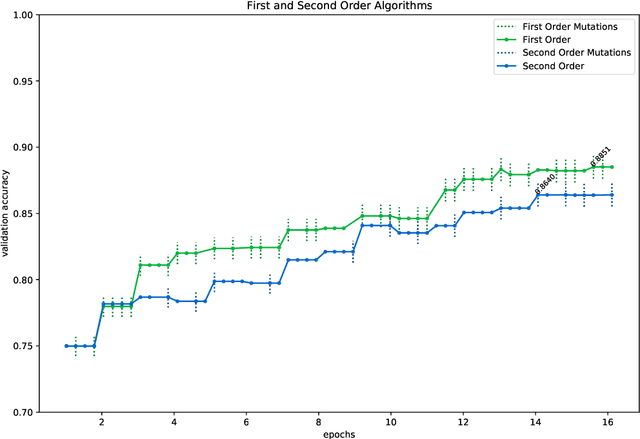

Abstract: We introduce two algorithms for neural architecture search, NASGD and NASAGD, building on theoretical work by two of the authors [4]. That work laid the conceptual basis for new notions of traditional and accelerated gradient descent for optimizing a function on a semi-discrete space, using ideas from optimal transport theory. Our methods use the network morphism framework introduced in [3] as a baseline. With the same computational resources and time, they can analyze forty times as many architectures as the hill climbing methods [3,11] while achieving comparable accuracy. For example, on CIFAR-10, NASGD designs and trains networks with an error rate of 4.06% in only 12 hours on a single GPU.
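To make the distinction between "traditional" and "accelerated" gradient descent concrete, here is a minimal sketch of the two update rules in the ordinary Euclidean setting. It is not the NASGD or NASAGD algorithm, which operate on a semi-discrete space of architectures and parameters. The function names, the quadratic test objective, the step size, and the Nesterov-style momentum schedule `(k - 1) / (k + 2)` are all assumptions chosen for illustration.

```python
# Illustrative only: plain vs. accelerated gradient descent on R^n.
# This is NOT the semi-discrete NASGD/NASAGD of the paper.

def gradient_descent(grad, x0, step, n_iters):
    """Traditional gradient descent: x <- x - step * grad(x)."""
    x = list(x0)
    for _ in range(n_iters):
        g = grad(x)
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x


def accelerated_gradient_descent(grad, x0, step, n_iters):
    """Nesterov-style acceleration: the gradient step is taken from an
    extrapolated point y, which adds momentum from the previous iterate."""
    x = list(x0)
    y = list(x0)
    for k in range(1, n_iters + 1):
        g = grad(y)
        x_next = [yi - step * gi for yi, gi in zip(y, g)]
        momentum = (k - 1) / (k + 2)  # assumed schedule, common for convex problems
        y = [xn + momentum * (xn - xo) for xn, xo in zip(x_next, x)]
        x = x_next
    return x


# Hypothetical ill-conditioned quadratic: f(x) = 0.5 * sum(c_i * x_i^2).
COEFFS = [1.0, 100.0]


def f(x):
    return 0.5 * sum(c * xi * xi for c, xi in zip(COEFFS, x))


def grad_f(x):
    return [c * xi for c, xi in zip(COEFFS, x)]


x0 = [1.0, 1.0]
step = 1.0 / max(COEFFS)  # 1 / L, with L the largest curvature
x_gd = gradient_descent(grad_f, x0, step, 200)
x_agd = accelerated_gradient_descent(grad_f, x0, step, 200)
print("f after GD: ", f(x_gd))
print("f after AGD:", f(x_agd))
```

On this toy problem the accelerated variant reaches a lower objective value within the same iteration budget, which is the kind of gap the accelerated method is designed to exploit. How the paper transfers these two schemes to the architecture search setting is described in [4] and is not reproduced here.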
