Felix Hamann

IRT2: Inductive Linking and Ranking in Knowledge Graphs of Varying Scale

Jan 02, 2023Abstract:We address the challenge of building domain-specific knowledge models for industrial use cases, where labelled data and taxonomic information is initially scarce. Our focus is on inductive link prediction models as a basis for practical tools that support knowledge engineers with exploring text collections and discovering and linking new (so-called open-world) entities to the knowledge graph. We argue that - though neural approaches to text mining have yielded impressive results in the past years - current benchmarks do not reflect the typical challenges encountered in the industrial wild properly. Therefore, our first contribution is an open benchmark coined IRT2 (inductive reasoning with text) that (1) covers knowledge graphs of varying sizes (including very small ones), (2) comes with incidental, low-quality text mentions, and (3) includes not only triple completion but also ranking, which is relevant for supporting experts with discovery tasks. We investigate two neural models for inductive link prediction, one based on end-to-end learning and one that learns from the knowledge graph and text data in separate steps. These models compete with a strong bag-of-words baseline. The results show a significant advance in performance for the neural approaches as soon as the available graph data decreases for linking. For ranking, the results are promising, and the neural approaches outperform the sparse retriever by a wide margin.

Neural Entity Linking on Technical Service Tickets

May 19, 2020

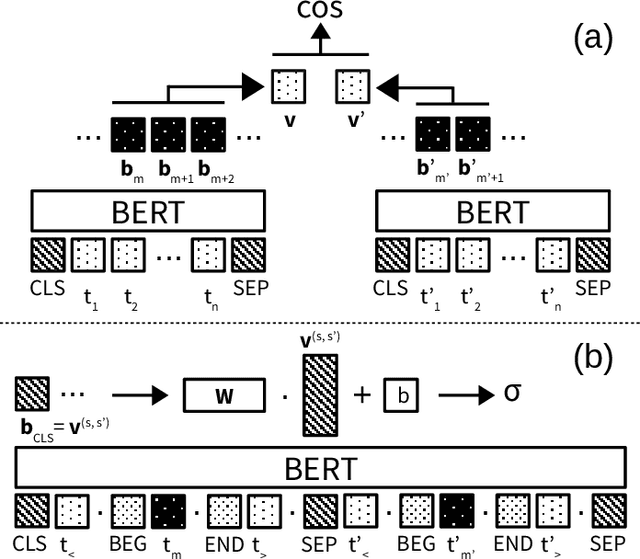

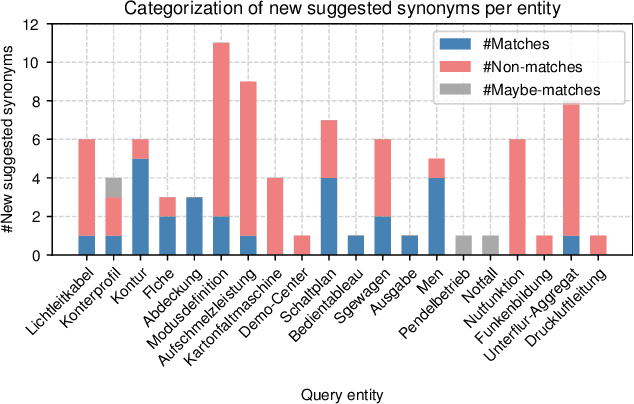

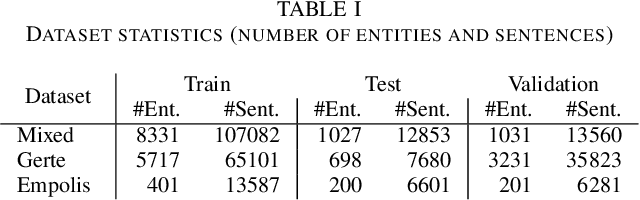

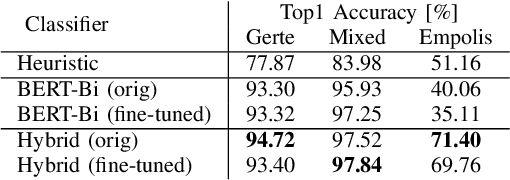

Abstract:Entity linking, the task of mapping textual mentions to known entities, has recently been tackled using contextualized neural networks. We address the question whether these results -- reported for large, high-quality datasets such as Wikipedia -- transfer to practical business use cases, where labels are scarce, text is low-quality, and terminology is highly domain-specific. Using an entity linking model based on BERT, a popular transformer network in natural language processing, we show that a neural approach outperforms and complements hand-coded heuristics, with improvements of about 20% top-1 accuracy. Also, the benefits of transfer learning on a large corpus are demonstrated, while fine-tuning proves difficult. Finally, we compare different BERT-based architectures and show that a simple sentence-wise encoding (Bi-Encoder) offers a fast yet efficient search in practice.

Hamming Sentence Embeddings for Information Retrieval

Aug 15, 2019

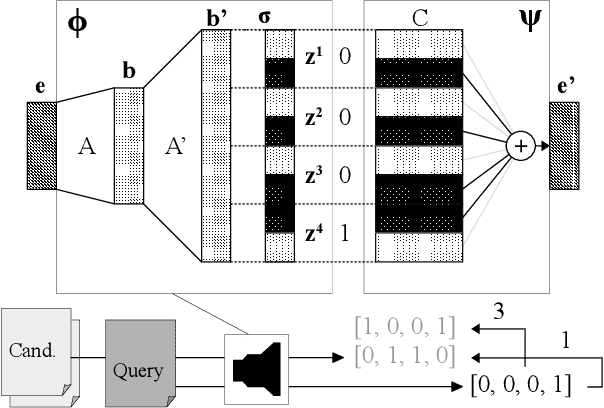

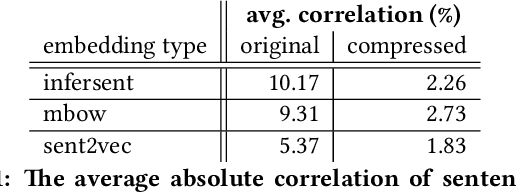

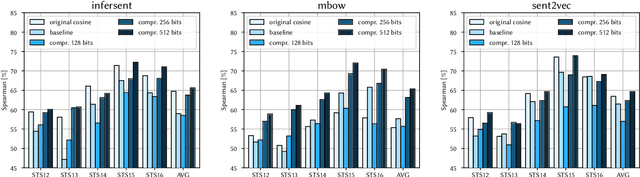

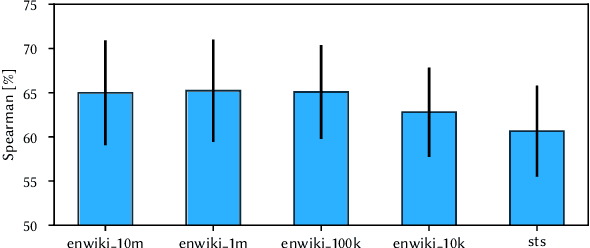

Abstract:In retrieval applications, binary hashes are known to offer significant improvements in terms of both memory and speed. We investigate the compression of sentence embeddings using a neural encoder-decoder architecture, which is trained by minimizing reconstruction error. Instead of employing the original real-valued embeddings, we use latent representations in Hamming space produced by the encoder for similarity calculations. In quantitative experiments on several benchmarks for semantic similarity tasks, we show that our compressed hamming embeddings yield a comparable performance to uncompressed embeddings (Sent2Vec, InferSent, Glove-BoW), at compression ratios of up to 256:1. We further demonstrate that our model strongly decorrelates input features, and that the compressor generalizes well when pre-trained on Wikipedia sentences. We publish the source code on Github and all experimental results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge