Fatima Zahrah

Embedding Privacy in Computational Social Science and Artificial Intelligence Research

Apr 17, 2024

Abstract: Privacy is a human right. It ensures that individuals are free to engage in discussions, participate in groups, and form relationships online or offline without fear of their data being inappropriately harvested, analyzed, or otherwise used to harm them. Preserving privacy has emerged as a critical factor in research, particularly in the computational social science (CSS), artificial intelligence (AI) and data science domains, given their reliance on individuals' data for novel insights. The increasing use of advanced computational models stands to exacerbate privacy concerns because, if inappropriately used, they can quickly infringe privacy rights and lead to adverse effects for individuals (especially vulnerable groups) and for society. We have already witnessed a host of privacy issues emerge with the advent of large language models (LLMs), such as ChatGPT, which further demonstrates the importance of embedding privacy from the start. This article contributes to the field by discussing the role of privacy and the primary issues that researchers working in CSS, AI, data science and related domains are likely to face. It then presents several key considerations for researchers to ensure participant privacy is best preserved in their research design, data collection and use, analysis, and dissemination of research results.

A Comparison of Online Hate on Reddit and 4chan: A Case Study of the 2020 US Election

Feb 02, 2022

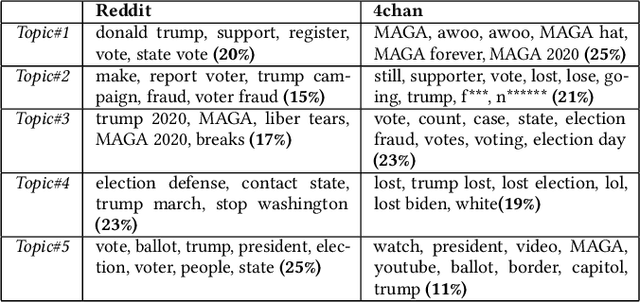

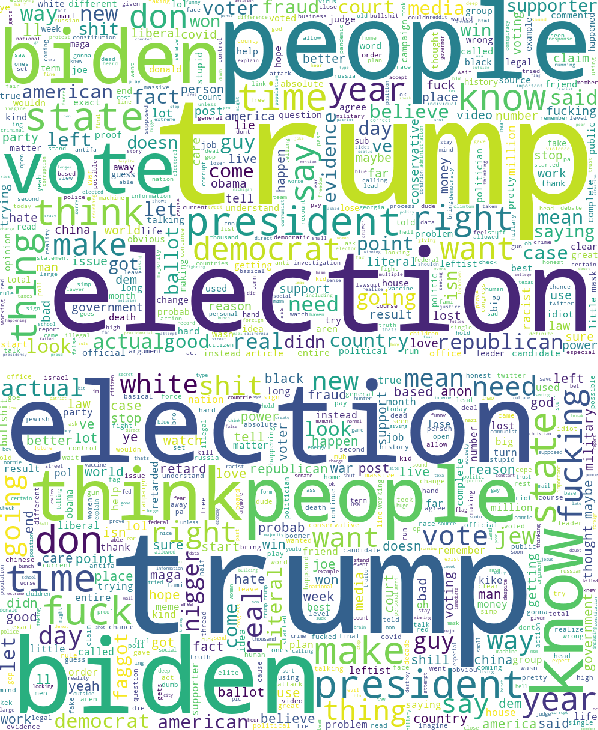

Abstract: The rapid integration of the Internet into our daily lives has led to many benefits but also to a number of new, widespread threats such as online hate, trolling, bullying, and generally aggressive behaviours. While research on online hate in particular has traditionally focused on a single platform, the reality is that such hate is a phenomenon that often makes use of multiple online networks. In this article, we seek to advance the discussion of online hate by harnessing a comparative approach, where we make use of various Natural Language Processing (NLP) techniques to computationally analyse hateful content from Reddit and 4chan relating to the 2020 US Presidential Election. Our findings show how content and posting activity can differ depending on the platform being used. Through this, we provide an initial comparison of the platform-specific behaviours of online hate, and of how different platforms can serve specific purposes. We further provide several avenues for future research utilising a cross-platform approach so as to gain a more comprehensive understanding of the global hate ecosystem.