Fangting Xie

PressTrack-HMR: Pressure-Based Top-Down Multi-Person Global Human Mesh Recovery

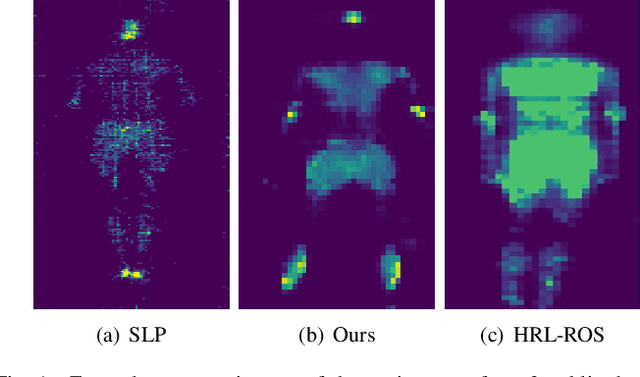

Nov 13, 2025

Abstract: Multi-person global human mesh recovery (HMR) is crucial for understanding crowd dynamics and interactions. Traditional vision-based HMR methods can face limitations in real-world scenarios due to mutual occlusions, insufficient lighting, and privacy concerns. Human-floor tactile interactions offer an occlusion-free and privacy-friendly alternative for capturing human motion. Existing research indicates that pressure signals acquired from tactile mats can effectively estimate human pose in single-person scenarios. However, when multiple individuals walk randomly on the mat at the same time, distinguishing the intermingled pressure signals generated by different persons and then acquiring each individual's temporal pressure data remains an open challenge for extending pressure-based HMR to multi-person situations. In this paper, we present PressTrack-HMR, a top-down pipeline that recovers multi-person global human meshes solely from pressure signals. The pipeline leverages a tracking-by-detection strategy to first identify and segment each individual's pressure signal from the raw pressure data, and then performs HMR on each extracted individual signal. Furthermore, we build MIP, a multi-person interaction pressure dataset that facilitates further research into pressure-based human motion analysis in multi-person scenarios. Experimental results demonstrate that our method excels in multi-person HMR from pressure data, achieving 89.2 mm MPJPE and 112.6 mm WA-MPJPE₁₀₀; these results showcase the potential of tactile mats for ubiquitous, privacy-preserving multi-person action recognition. Our dataset and code are available at https://github.com/Jiayue-Yuan/PressTrack-HMR.
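The abstract does not specify the detector or the association rule, so the following is only a minimal sketch of a generic tracking-by-detection loop on pressure frames, not PressTrack-HMR's actual method. It assumes each frame is a 2D grid of non-negative pressure values, treats every 4-connected region above a threshold as one detection (a real system would also have to group the separate footprints of one person), and links detections across frames by greedy nearest-centroid matching within a distance gate. The function names `detect_blobs` and `track` and the parameters `threshold` and `max_dist` are hypothetical.

```python
import math
from collections import deque

# Hypothetical helpers for illustration; not taken from the PressTrack-HMR code.

def detect_blobs(frame, threshold):
    """Return pressure-weighted centroids (row, col) of 4-connected regions above threshold.

    Assumes threshold >= 0, so every region has positive total pressure.
    """
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    centroids = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] <= threshold:
                continue
            # Breadth-first flood fill of one connected region.
            queue = deque([(r, c)])
            seen[r][c] = True
            mass = sum_r = sum_c = 0.0
            while queue:
                y, x = queue.popleft()
                p = frame[y][x]
                mass += p
                sum_r += p * y
                sum_c += p * x
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and frame[ny][nx] > threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            centroids.append((sum_r / mass, sum_c / mass))
    return centroids


def track(frames, threshold=0.0, max_dist=3.0):
    """Link per-frame detections into tracks: {track_id: [(frame_index, centroid), ...]}."""
    tracks = {}
    last_pos = {}  # most recent centroid of every track (tracks are never terminated here)
    next_id = 0
    for t, frame in enumerate(frames):
        dets = detect_blobs(frame, threshold)
        # Candidate (distance, track, detection) pairs inside the gate, closest first.
        pairs = sorted(
            (math.dist(pos, det), tid, j)
            for tid, pos in last_pos.items()
            for j, det in enumerate(dets)
            if math.dist(pos, det) <= max_dist
        )
        used_tracks, used_dets = set(), set()
        for _, tid, j in pairs:
            if tid in used_tracks or j in used_dets:
                continue
            used_tracks.add(tid)
            used_dets.add(j)
            tracks[tid].append((t, dets[j]))
            last_pos[tid] = dets[j]
        # Detections left unmatched start new tracks.
        for j, det in enumerate(dets):
            if j not in used_dets:
                tracks[next_id] = [(t, det)]
                last_pos[next_id] = det
                next_id += 1
    return tracks
```

Greedy matching keeps the sketch short; an optimal assignment (for example, the Hungarian algorithm) and dropping tracks of people who leave the mat would be natural refinements. Each resulting track is the kind of per-person temporal signal that a downstream single-person HMR model could consume.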

Contrastive Learning-based User Identification with Limited Data on Smart Textiles

Sep 06, 2024

Abstract: Pressure-sensitive smart textiles are widely applied in healthcare, sports monitoring, and smart homes. Combining multiple devices embedded with pressure-sensing arrays is expected to enable comprehensive scene coverage and multi-device integration. However, identity recognition, a fundamental function in this context, relies on extensive device-specific datasets because pressure distributions vary across devices. To address this challenge, we propose a novel user identification method based on contrastive learning. We design two parallel branches that support user identification on new and existing devices, respectively, and employ supervised contrastive learning in the feature space to promote domain unification. For a new device, extensive data collection is not required; instead, users can be identified from limited data consisting of only a few simple postures. Experiments on two 8-subject pressure datasets (BedPressure and ChrPressure) show that our method achieves user identification across 12 sitting scenarios using a dataset containing only 2 postures. Our average recognition accuracy reaches 79.05%, an improvement of 2.62% over the best baseline model.
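To make the training signal concrete, the sketch below computes the standard supervised contrastive (SupCon) loss of Khosla et al. (2020) on a small batch of embeddings, averaged over anchors: for each anchor, samples with the same user label are positives, and all other samples form the softmax denominator. The abstract does not give the paper's exact loss, branch architecture, or temperature, so the function name `supcon_loss` and the default `temperature=0.1` are assumptions made for illustration.

```python
import math

def _normalize(v):
    """Scale a vector to unit L2 norm so dot products are cosine similarities."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def supcon_loss(embeddings, labels, temperature=0.1):
    """Standard SupCon loss (positives averaged outside the log); hypothetical helper."""
    z = [_normalize(e) for e in embeddings]
    n = len(z)
    total, anchors = 0.0, 0
    for i in range(n):
        # Temperature-scaled similarity of anchor i to every other sample.
        sims = {a: sum(x * y for x, y in zip(z[i], z[a])) / temperature
                for a in range(n) if a != i}
        positives = [p for p in sims if labels[p] == labels[i]]
        if not positives:
            continue  # anchors without a same-label partner contribute nothing
        # Numerically stable log-sum-exp over all non-anchor samples.
        m = max(sims.values())
        log_denom = m + math.log(sum(math.exp(s - m) for s in sims.values()))
        # Mean negative log-probability of the positives for this anchor.
        total += -sum(sims[p] - log_denom for p in positives) / len(positives)
        anchors += 1
    return total / anchors if anchors else 0.0
```

The loss is small when same-user embeddings cluster and grows when they are scattered among other users; applied to samples from both new and existing devices, a loss of this kind could encourage a feature space in which identity, rather than device, dominates.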

MassNet: A Deep Learning Approach for Body Weight Extraction from A Single Pressure Image

Mar 17, 2023

Abstract: Body weight, as an essential physiological trait, is of considerable significance in many applications such as body management, rehabilitation, and drug dosing for patient-specific treatments. Previous work on body weight estimation is mainly vision-based, using 2D/3D, depth, or infrared images, and faces problems with illumination, occlusion, and especially privacy. The pressure mapping mattress is a non-invasive and privacy-preserving tool for obtaining the pressure distribution image over the bed surface, which strongly correlates with the body weight of the lying person. To extract body weight from this image, we propose a deep learning-based model that uses a dual-branch network to extract deep features and pose features, respectively. A contrastive learning module is combined with the deep-feature branch to help mine the factors shared across different postures of each subject. The two groups of features are then concatenated for body weight regression. To test the model's performance across different hardware and posture settings, we created a pressure image dataset of 10 subjects and 23 postures using a self-made pressure-sensing bedsheet. This dataset, released together with this paper, and an existing public dataset are used for validation. The results show that our model outperforms state-of-the-art algorithms on both datasets. Our research constitutes an important step toward fully automatic weight estimation in both clinical and at-home practice. Our dataset is available for research purposes at: https://github.com/USTCWzy/MassEstimation.
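The correlation between the pressure image and body weight has a simple physical core: on an ideally calibrated mat, with the whole body resting on it, the pressure integrated over the contact area equals the body's weight force. The sketch below is a naive single-feature baseline built on that idea, not MassNet: it sums a pressure image into one scalar and fits weight = k · feature by least squares through the origin. The function names `total_force` and `fit_scale` and the unit conventions are hypothetical.

```python
# Naive baseline for illustration only; not the MassNet model.

def total_force(pressure_image, cell_area):
    """Sum pressure over all sensor cells times the area of one cell.

    Units follow whatever calibration the mat uses (assumed here).
    """
    return sum(sum(row) for row in pressure_image) * cell_area

def fit_scale(features, weights):
    """Least-squares fit of weight = k * feature through the origin.

    Minimizing sum((y - k*x)^2) gives k = sum(x*y) / sum(x*x).
    """
    num = sum(x * y for x, y in zip(features, weights))
    den = sum(x * x for x in features)
    return num / den
```

Real pressure bedsheets can respond nonlinearly and vary with posture and contact pattern, which is why a learned model that also uses pose features can outperform a single global scale factor like this one.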
