Evzenie Coupkova

On the Rashomon ratio of infinite hypothesis sets

Apr 27, 2024

Abstract: Given a classification problem and a family of classifiers, the Rashomon ratio measures the proportion of classifiers that yield less than a given loss. Previous work has explored the advantage of a large Rashomon ratio in the case of a finite family of classifiers. Here we consider the more general case of an infinite family. We show that a large Rashomon ratio guarantees that choosing the classifier with the best empirical accuracy among a random subset of the family, which is likely to improve generalizability, will not increase the empirical loss too much. We quantify the Rashomon ratio in two examples involving infinite classifier families in order to illustrate situations in which it is large. In the first example, we estimate the Rashomon ratio of the classification of normally distributed classes using an affine classifier. In the second, we obtain a lower bound for the Rashomon ratio of a classification problem with a modified Gram matrix when the classifier family consists of two-layer ReLU neural networks. In general, we show that the Rashomon ratio can be estimated using a training dataset along with random samples from the classifier family and we provide guarantees that such an estimation is close to the true value of the Rashomon ratio.
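The sampling-based estimate mentioned at the end of the abstract can be illustrated with a minimal sketch. It is not the paper's procedure: it assumes the 0-1 loss, affine classifiers whose weights and bias are drawn from a standard normal distribution, and two synthetic Gaussian classes. The names `make_gaussian_classes`, `empirical_loss`, and `estimate_rashomon_ratio` are hypothetical and chosen only for this example.

```python
import random


def make_gaussian_classes(n_per_class, seed=0):
    """Synthetic 2-D data (an assumption): class +1 around (2, 2), class -1 around (-2, -2)."""
    rng = random.Random(seed)
    X, y = [], []
    for label, center in ((1, 2.0), (-1, -2.0)):
        for _ in range(n_per_class):
            X.append([rng.gauss(center, 1.0), rng.gauss(center, 1.0)])
            y.append(label)
    return X, y


def empirical_loss(w, b, X, y):
    """Fraction of training points misclassified by sign(w . x + b)."""
    errors = 0
    for x, label in zip(X, y):
        score = sum(wi * xi for wi, xi in zip(w, x)) + b
        pred = 1 if score >= 0 else -1
        if pred != label:
            errors += 1
    return errors / len(X)


def estimate_rashomon_ratio(X, y, theta, n_samples=2000, seed=0):
    """Monte Carlo estimate: share of sampled classifiers with empirical loss below theta.

    The sampling distribution over classifiers (standard normal weights and
    bias) is an assumption of this sketch, not something taken from the paper.
    """
    rng = random.Random(seed)
    dim = len(X[0])
    hits = 0
    for _ in range(n_samples):
        w = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        b = rng.gauss(0.0, 1.0)
        if empirical_loss(w, b, X, y) < theta:
            hits += 1
    return hits / n_samples


X, y = make_gaussian_classes(100)
print(estimate_rashomon_ratio(X, y, theta=0.1))
```

The estimate depends on the measure placed on the classifier family, so a different sampling distribution would generally give a different ratio for the same data and threshold.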

Asymptotic optimality and minimal complexity of classification by random projection

Aug 11, 2021

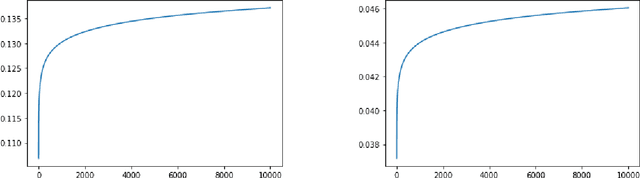

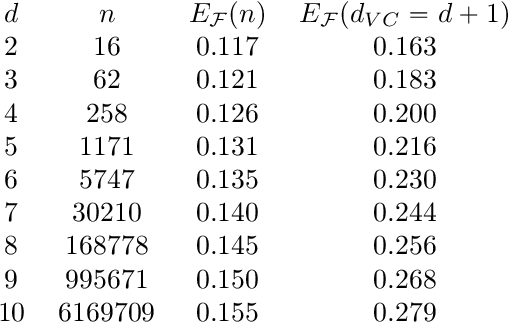

Abstract: The generalization error of a classifier is related to the complexity of the set of functions among which the classifier is chosen. Roughly speaking, the more complex the family, the greater the potential disparity between the training error and the population error of the classifier. In layman's terms, this principle is embodied by Occam's razor, which suggests favoring low-complexity hypotheses over complex ones. We study a family of low-complexity classifiers consisting of thresholding the one-dimensional feature obtained by projecting the data on a random line after embedding it into a higher-dimensional space parametrized by monomials of order up to k. More specifically, the extended data is projected n times and the best classifier among those n (based on its performance on training data) is chosen. We obtain a bound on the generalization error of these low-complexity classifiers. The bound is less than that of any classifier with a non-trivial VC dimension, and thus less than that of a linear classifier. We also show that, given full knowledge of the class conditional densities, the error of the classifiers would converge to the optimal (Bayes) error as k and n go to infinity; if only a training dataset is given, we show that the classifiers will perfectly classify all the training points as k and n go to infinity.
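The classifier family described in the abstract can be sketched as follows. This is only an illustration under stated assumptions: random directions are drawn with standard normal coordinates, the threshold on each projected line is chosen by exhaustive search over midpoints of the training projections, and the names `monomial_features`, `best_threshold`, `fit_random_projection`, and `predict` are hypothetical.

```python
import itertools
import random


def monomial_features(x, k):
    """All monomials of the coordinates of x with total degree 1..k."""
    feats = []
    for degree in range(1, k + 1):
        for combo in itertools.combinations_with_replacement(range(len(x)), degree):
            value = 1.0
            for i in combo:
                value *= x[i]
            feats.append(value)
    return feats


def best_threshold(projections, y):
    """Search thresholds and orientations on a line; return (error, threshold, sign)."""
    values = sorted(set(projections))
    # One candidate below all points, plus midpoints between neighbouring values.
    candidates = [values[0] - 1.0] + [(a + b) / 2 for a, b in zip(values, values[1:])]
    best = (2.0, candidates[0], 1)
    for t in candidates:
        for sign in (1, -1):
            errors = sum(1 for p, label in zip(projections, y)
                         if (sign if p > t else -sign) != label)
            err = errors / len(y)
            if err < best[0]:
                best = (err, t, sign)
    return best


def fit_random_projection(X, y, k, n, seed=0):
    """Try n random lines in monomial space and keep the one with lowest training error."""
    rng = random.Random(seed)
    Z = [monomial_features(x, k) for x in X]
    best_model = None
    for _ in range(n):
        direction = [rng.gauss(0.0, 1.0) for _ in range(len(Z[0]))]
        proj = [sum(d * z for d, z in zip(direction, row)) for row in Z]
        err, t, sign = best_threshold(proj, y)
        if best_model is None or err < best_model["error"]:
            best_model = {"direction": direction, "threshold": t,
                          "sign": sign, "error": err, "k": k}
    return best_model


def predict(model, x):
    """Classify x by thresholding its projection onto the selected line."""
    z = monomial_features(x, model["k"])
    p = sum(d * zi for d, zi in zip(model["direction"], z))
    return model["sign"] if p > model["threshold"] else -model["sign"]


# XOR-like toy data: not linearly separable, but the degree-2 monomial x1*x2 separates it.
X = [[1, 1], [-1, -1], [1, -1], [-1, 1], [2, 2], [-2, -2], [2, -2], [-2, 2]]
y = [1, 1, -1, -1, 1, 1, -1, -1]
model = fit_random_projection(X, y, k=2, n=200)
print(model["error"])
```

Because the selected classifier is the best of the n candidates on the training data, drawing more directions with the same seed can only keep or lower the reported training error.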
