Erik Hallin

End-to-End Data Quality-Driven Framework for Machine Learning in Production Environment

Dec 16, 2025

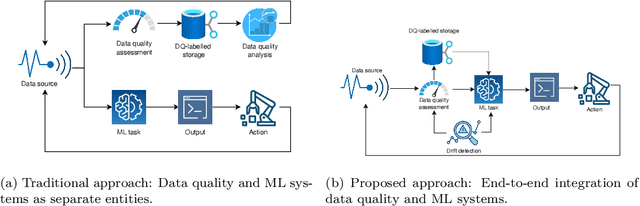

Abstract: This paper introduces a novel end-to-end framework that efficiently integrates data quality assessment with machine learning (ML) model operations in real-time production environments. While existing approaches treat data quality assessment and ML systems as isolated processes, our framework addresses the critical gap between theoretical methods and practical implementation by combining dynamic drift detection, adaptive data quality metrics, and MLOps into a cohesive, lightweight system. The key innovation lies in its operational efficiency, enabling real-time, quality-driven ML decision-making with minimal computational overhead. We validate the framework in a steel manufacturing company's Electroslag Remelting (ESR) vacuum pumping process, demonstrating a 12% improvement in model performance (R² = 94%) and a fourfold reduction in prediction latency. By exploring the impact of data quality acceptability thresholds, we provide actionable insights into balancing data quality standards and predictive performance in industrial applications. This framework represents a significant advancement in MLOps, offering a robust solution for time-sensitive, data-driven decision-making in dynamic industrial environments.

* 38 pages

Adaptive Data Quality Scoring Operations Framework using Drift-Aware Mechanism for Industrial Applications

Aug 13, 2024

Abstract: Within data-driven artificial intelligence (AI) systems for industrial applications, ensuring the reliability of the incoming data streams is an integral part of trustworthy decision-making. One approach to assessing data validity is data quality scoring, which assigns a score to each data point or stream based on various quality dimensions. However, certain dimensions exhibit dynamic qualities, which require adaptation on the basis of the system's current conditions. Existing methods often overlook this aspect, making them inefficient in dynamic production environments. In this paper, we introduce the Adaptive Data Quality Scoring Operations Framework, a novel framework developed to address the challenges posed by dynamic quality dimensions in industrial data streams. The framework integrates a dynamic change detector mechanism that actively monitors and adapts to changes in data quality, ensuring the relevance of quality scores. We evaluate the proposed framework's performance in a real-world industrial use case. The experimental results reveal high predictive performance and efficient processing time, highlighting its effectiveness in practical quality-driven AI applications.

* 17 pages

Quality Assurance in MLOps Setting: An Industrial Perspective

Nov 24, 2022

Abstract: Today, machine learning (ML) is widely used in industry to provide the core functionality of production systems. However, it is practically always used in production systems as part of a larger end-to-end software system that is made up of several other components in addition to the ML model. Due to production demand and time constraints, automated software engineering practices are highly applicable. The increased use of automated ML software engineering practices in industries such as manufacturing and utilities requires an automated Quality Assurance (QA) approach as an integral part of ML software. Here, QA helps reduce risk by offering an objective perspective on the software task. Although conventional software engineering has automated tools for QA data analysis for data-driven ML, the use of QA practices for ML in operation (MLOps) is lacking. This paper examines the QA challenges that arise in industrial MLOps and conceptualizes modular strategies to deal with data integrity and Data Quality (DQ). The paper is accompanied by real industrial use-cases from industrial partners. The paper also presents several challenges that may serve as a basis for future studies.
