Erik Buchmann

PRISMe: A Novel LLM-Powered Tool for Interactive Privacy Policy Assessment

Jan 27, 2025

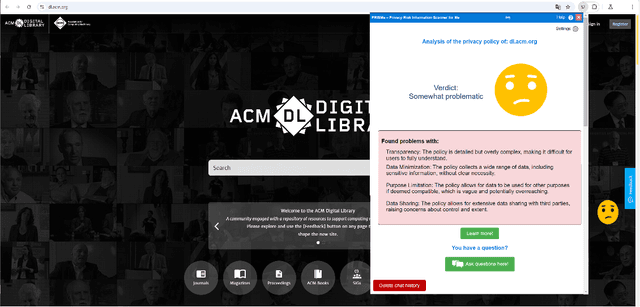

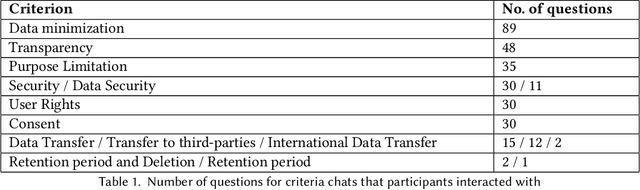

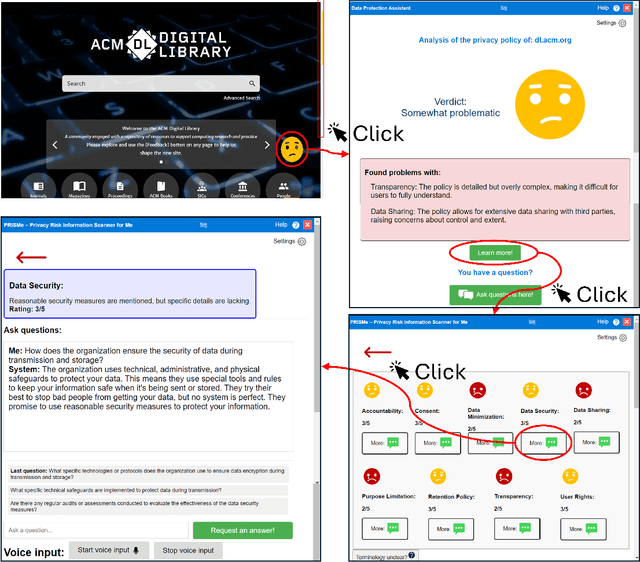

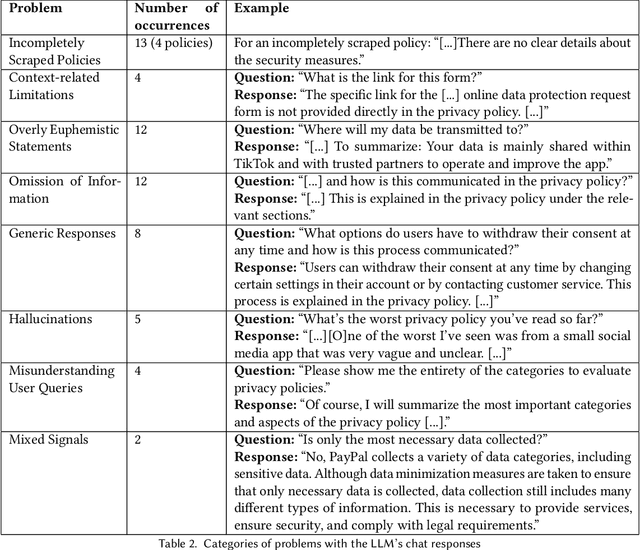

Abstract:Protecting online privacy requires users to engage with and comprehend website privacy policies, but many policies are difficult and tedious to read. We present PRISMe (Privacy Risk Information Scanner for Me), a novel Large Language Model (LLM)-driven privacy policy assessment tool, which helps users to understand the essence of a lengthy, complex privacy policy while browsing. The tool, a browser extension, integrates a dashboard and an LLM chat. One major contribution is the first rigorous evaluation of such a tool. In a mixed-methods user study (N=22), we evaluate PRISMe's efficiency, usability, understandability of the provided information, and impacts on awareness. While our tool improves privacy awareness by providing a comprehensible quick overview and a quality chat for in-depth discussion, users note issues with consistency and building trust in the tool. From our insights, we derive important design implications to guide future policy analysis tools.

Legally Binding but Unfair? Towards Assessing Fairness of Privacy Policies

Mar 12, 2024

Abstract:Privacy policies are expected to inform data subjects about their data protection rights. They should explain the data controller's data management practices, and make facts such as retention periods or data transfers to third parties transparent. Privacy policies only fulfill their purpose, if they are correctly perceived, interpreted, understood, and trusted by the data subject. Amongst others, this requires that a privacy policy is written in a fair way, e.g., it does not use polarizing terms, does not require a certain education, or does not assume a particular social background. In this work-in-progress paper, we outline our approach to assessing fairness in privacy policies. To this end, we identify from fundamental legal sources and fairness research, how the dimensions informational fairness, representational fairness and ethics/morality are related to privacy policies. We propose options to automatically assess policies in these fairness dimensions, based on text statistics, linguistic methods and artificial intelligence. Finally, we conduct initial experiments with German privacy policies to provide evidence that our approach is applicable. Our experiments indicate that there are indeed issues in all three dimensions of fairness. For example, our approach finds out if a policy discriminates against individuals with impaired reading skills or certain demographics, and identifies questionable ethics. This is important, as future privacy policies may be used in a corpus for legal artificial intelligence models.

Federated Learning on Transcriptomic Data: Model Quality and Performance Trade-Offs

Feb 22, 2024

Abstract:Machine learning on large-scale genomic or transcriptomic data is important for many novel health applications. For example, precision medicine tailors medical treatments to patients on the basis of individual biomarkers, cellular and molecular states, etc. However, the data required is sensitive, voluminous, heterogeneous, and typically distributed across locations where dedicated machine learning hardware is not available. Due to privacy and regulatory reasons, it is also problematic to aggregate all data at a trusted third party.Federated learning is a promising solution to this dilemma, because it enables decentralized, collaborative machine learning without exchanging raw data. In this paper, we perform comparative experiments with the federated learning frameworks TensorFlow Federated and Flower. Our test case is the training of disease prognosis and cell type classification models. We train the models with distributed transcriptomic data, considering both data heterogeneity and architectural heterogeneity. We measure model quality, robustness against privacy-enhancing noise, computational performance and resource overhead. Each of the federated learning frameworks has different strengths. However, our experiments confirm that both frameworks can readily build models on transcriptomic data, without transferring personal raw data to a third party with abundant computational resources.

Fairness Certification for Natural Language Processing and Large Language Models

Jan 03, 2024Abstract:Natural Language Processing (NLP) plays an important role in our daily lives, particularly due to the enormous progress of Large Language Models (LLM). However, NLP has many fairness-critical use cases, e.g., as an expert system in recruitment or as an LLM-based tutor in education. Since NLP is based on human language, potentially harmful biases can diffuse into NLP systems and produce unfair results, discriminate against minorities or generate legal issues. Hence, it is important to develop a fairness certification for NLP approaches. We follow a qualitative research approach towards a fairness certification for NLP. In particular, we have reviewed a large body of literature on algorithmic fairness, and we have conducted semi-structured expert interviews with a wide range of experts from that area. We have systematically devised six fairness criteria for NLP, which can be further refined into 18 sub-categories. Our criteria offer a foundation for operationalizing and testing processes to certify fairness, both from the perspective of the auditor and the audited organization.

A Privacy-Preserving Federated Learning Approach for Kernel methods

Jun 05, 2023Abstract:It is challenging to implement Kernel methods, if the data sources are distributed and cannot be joined at a trusted third party for privacy reasons. It is even more challenging, if the use case rules out privacy-preserving approaches that introduce noise. An example for such a use case is machine learning on clinical data. To realize exact privacy preserving computation of kernel methods, we propose FLAKE, a Federated Learning Approach for KErnel methods on horizontally distributed data. With FLAKE, the data sources mask their data so that a centralized instance can compute a Gram matrix without compromising privacy. The Gram matrix allows to calculate many kernel matrices, which can be used to train kernel-based machine learning algorithms such as Support Vector Machines. We prove that FLAKE prevents an adversary from learning the input data or the number of input features under a semi-honest threat model. Experiments on clinical and synthetic data confirm that FLAKE is outperforming the accuracy and efficiency of comparable methods. The time needed to mask the data and to compute the Gram matrix is several orders of magnitude less than the time a Support Vector Machine needs to be trained. Thus, FLAKE can be applied to many use cases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge