Elizabeth Foster

Deepwound: Automated Postoperative Wound Assessment and Surgical Site Surveillance through Convolutional Neural Networks

Jul 11, 2018

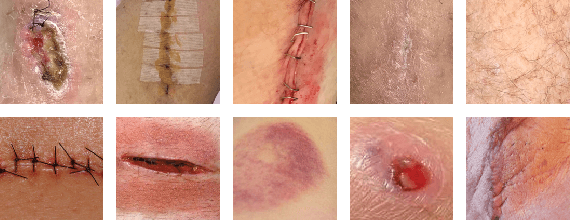

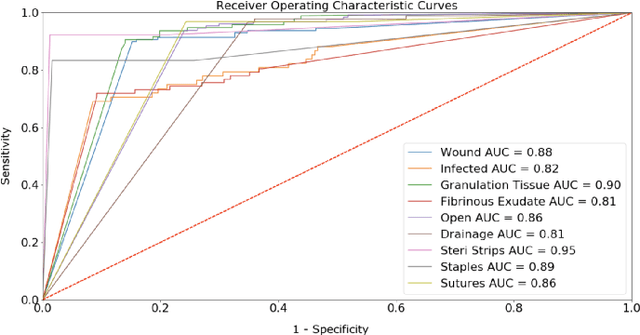

Abstract: Postoperative wound complications are a significant cause of expense for hospitals, doctors, and patients. Hence, an effective method to diagnose the onset of wound complications is strongly desired. Algorithmically classifying wound images is a difficult task due to the variability in the appearance of wound sites. Convolutional neural networks (CNNs), a subgroup of artificial neural networks that have shown great promise in analyzing visual imagery, can be leveraged to categorize surgical wounds. We present a multi-label CNN ensemble, Deepwound, trained to classify wound images using only image pixels and corresponding labels as inputs. Our final computational model can accurately identify the presence of nine labels: drainage, fibrinous exudate, granulation tissue, surgical site infection, open wound, staples, steri strips, and sutures. Our model achieves receiver operating characteristic (ROC) area under the curve (AUC) scores, sensitivity, specificity, and F1 scores superior to prior work in this area. Because smartphones are increasingly ubiquitous, they provide a means to deliver accessible wound care. Paired with deep neural networks, they can provide clinical insight to assist surgeons during postoperative care. We also present a mobile application frontend to Deepwound that helps patients track their wounds and surgical recovery from the comfort of their homes.
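To make the evaluation terms above concrete, the following is a minimal sketch of how a multi-label ensemble's outputs might be combined and scored for a single label. It is not the Deepwound implementation: the abstract does not say how the ensemble members are combined, so simple probability averaging is assumed here, and the 0.5 decision threshold and the function names `ensemble_average`, `binarize`, and `label_metrics` are all hypothetical.

```python
from typing import Dict, List, Sequence


def ensemble_average(model_probs: Sequence[Sequence[float]]) -> List[float]:
    """Average per-label probabilities across models for one image.

    model_probs[m][k] is model m's probability for label k.
    Averaging is an assumed combination rule, not one stated in the abstract.
    """
    n_models = len(model_probs)
    return [sum(per_label) / n_models for per_label in zip(*model_probs)]


def binarize(probs: Sequence[float], threshold: float = 0.5) -> List[int]:
    """Turn per-label probabilities into 0/1 decisions (threshold is assumed)."""
    return [1 if p >= threshold else 0 for p in probs]


def label_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Sensitivity, specificity, and F1 for one label across many images."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return {
        # Fraction of true positives that were detected.
        "sensitivity": tp / (tp + fn) if (tp + fn) else 0.0,
        # Fraction of true negatives that were correctly rejected.
        "specificity": tn / (tn + fp) if (tn + fp) else 0.0,
        # Harmonic mean of precision and recall.
        "f1": 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 0.0,
    }
```

In a multi-label setting, each label (for example, sutures or drainage) is scored independently with `label_metrics`, since one image can carry several labels at once.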
