Diego Palacios

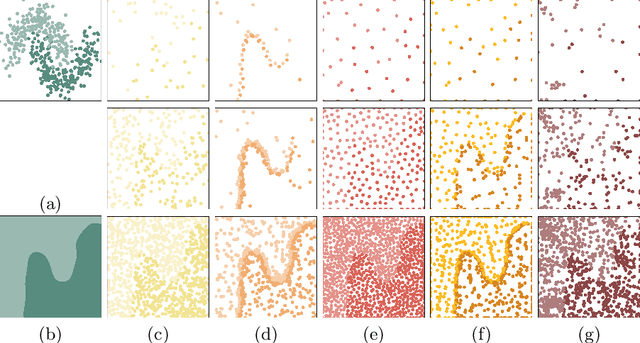

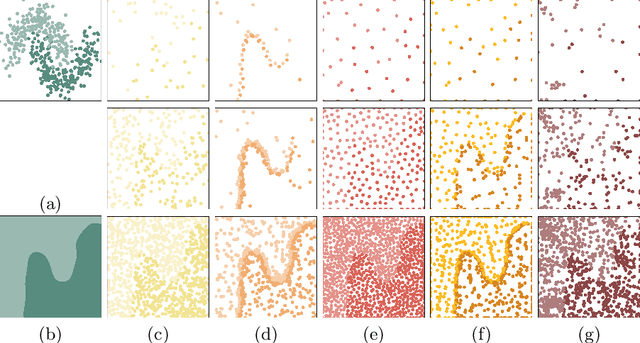

Sampling Unknown Decision Functions to Build Classifier Copies

Oct 01, 2019

Abstract: Copies have been proposed as a viable way to endow machine learning models with properties and features that adapt them to changing needs. A fundamental step of the copying process is generating an unlabelled set of points to explore the decision behavior of the targeted classifier throughout the input space. In this article we propose two sampling strategies to produce such sets. We validate them on six well-known problems and compare them with two standard methods. We evaluate our proposals in terms of both their accuracy and their computational cost.
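To make the copying step concrete, here is a minimal sketch of the general idea: draw unlabelled points, query the target classifier for labels, and fit a copy to the result. It is not either of the two strategies proposed in the article. Plain uniform sampling over a box is an assumption made for illustration, and `target_classifier`, `sample_uniform`, and `fit_copy` are hypothetical names, with a toy circular decision boundary standing in for the unknown decision function and a 1-nearest-neighbour rule standing in for the copy.

```python
import random


def target_classifier(x):
    """Hypothetical black box: label 1 inside the unit circle, else 0."""
    return 1 if x[0] ** 2 + x[1] ** 2 < 1.0 else 0


def sample_uniform(n, low, high, dim, rng):
    """Draw n unlabelled points uniformly from the box [low, high]^dim (assumed sampler)."""
    return [[rng.uniform(low, high) for _ in range(dim)] for _ in range(n)]


def fit_copy(points, labels):
    """Build a 1-nearest-neighbour copy from the labelled synthetic set."""
    def copy(x):
        # Index of the synthetic point closest to x in squared Euclidean distance.
        nearest = min(
            range(len(points)),
            key=lambda i: sum((a - b) ** 2 for a, b in zip(points[i], x)),
        )
        return labels[nearest]
    return copy


rng = random.Random(0)

# 1. Generate an unlabelled set of points covering the input space.
synthetic = sample_uniform(1000, -2.0, 2.0, 2, rng)

# 2. Label the points by querying the target classifier.
synthetic_labels = [target_classifier(p) for p in synthetic]

# 3. Fit the copy on the synthetic labelled set.
copy = fit_copy(synthetic, synthetic_labels)

# 4. Measure agreement between copy and target on fresh points.
fresh = sample_uniform(300, -2.0, 2.0, 2, rng)
fidelity = sum(copy(p) == target_classifier(p) for p in fresh) / len(fresh)
print(f"agreement with target: {fidelity:.3f}")
```

In this toy setting the only disagreements arise near the decision boundary, which is one reason the choice of sampling strategy matters: points far from the boundary add cost without adding much information.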
