Diego Aghi

Deep Semantic Segmentation at the Edge for Autonomous Navigation in Vineyard Rows

Jul 01, 2021

Abstract:Precision agriculture is a fast-growing field that aims at introducing affordable and effective automation into agricultural processes. Nowadays, algorithmic solutions for navigation in vineyards require expensive sensors and high computational workloads that preclude large-scale applicability of autonomous robotic platforms in real business case scenarios. From this perspective, our novel proposed control leverages the latest advancement in machine perception and edge AI techniques to achieve highly affordable and reliable navigation inside vineyard rows with low computational and power consumption. Indeed, using a custom-trained segmentation network and a low-range RGB-D camera, we are able to take advantage of the semantic information of the environment to produce smooth trajectories and stable control in different vineyards scenarios. Moreover, the segmentation maps generated by the control algorithm itself could be directly exploited as filters for a vegetative assessment of the crop status. Extensive experimentations and evaluations against real-world data and simulated environments demonstrated the effectiveness and intrinsic robustness of our methodology.

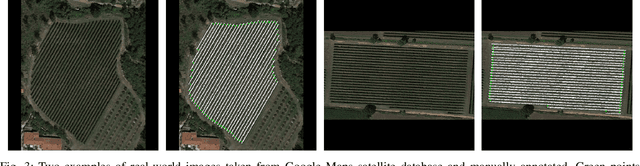

DeepWay: a Deep Learning Estimator for Unmanned Ground Vehicle Global Path Planning

Oct 30, 2020

Abstract:Agriculture 3.0 and 4.0 have gradually introduced service robotics and automation into several agricultural processes, mostly improving crops quality and seasonal yield. Row-based crops are the perfect settings to test and deploy smart machines capable of monitoring and manage the harvest. In this context, global path planning is essential either for ground or aerial vehicles, and it is the starting point for every type of mission plan. Nevertheless, little attention has been currently given to this problem by the research community and global path planning automation is still far to be solved. In order to generate a viable path for an autonomous machine, the presented research proposes a feature learning fully convolutional model capable of estimating waypoints given an occupancy grid map. In particular, we apply the proposed data-driven methodology to the specific case of row-based crops with the general objective to generate a global path able to cover the extension of the crop completely. Extensive experimentation with a custom made synthetic dataset and real satellite-derived images of different scenarios have proved the effectiveness of our methodology and demonstrated the feasibility of an end-to-end and completely autonomous global path planner.

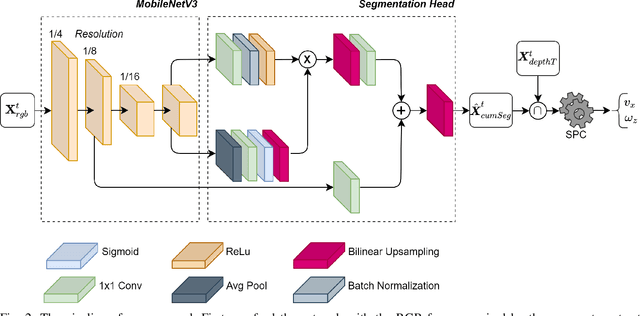

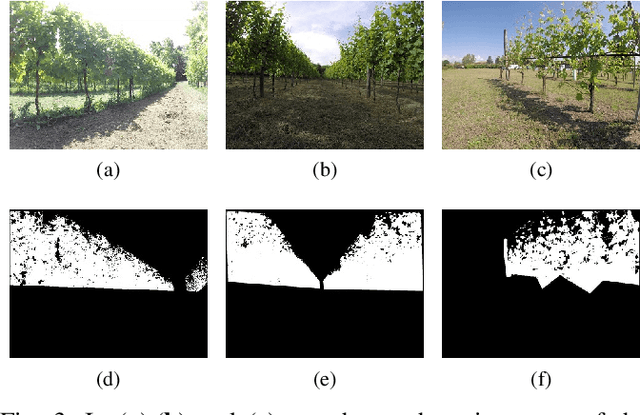

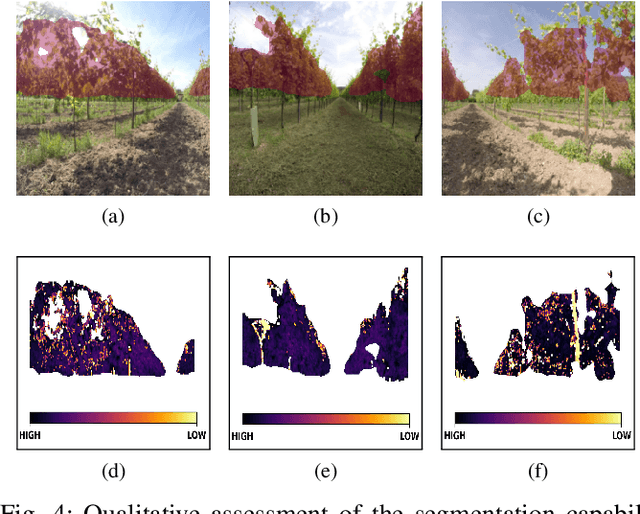

Local Motion Planner for Autonomous Navigation in Vineyards with a RGB-D Camera-Based Algorithm and Deep Learning Synergy

May 26, 2020

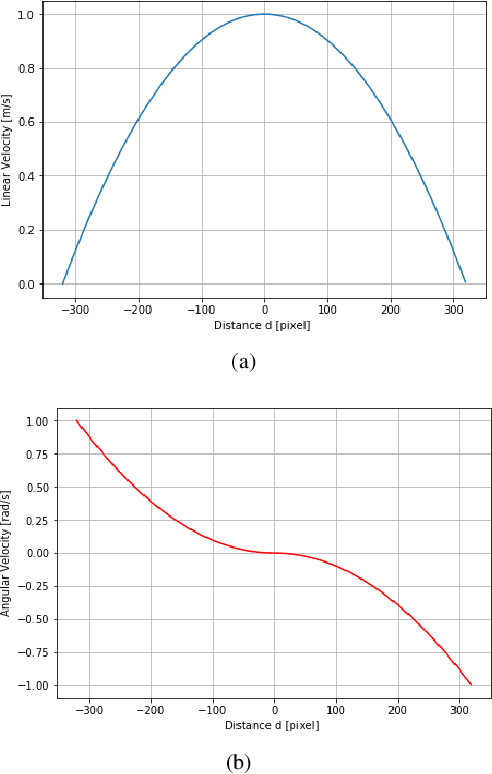

Abstract:With the advent of agriculture 3.0 and 4.0, researchers are increasingly focusing on the development of innovative smart farming and precision agriculture technologies by introducing automation and robotics into the agricultural processes. Autonomous agricultural field machines have been gaining significant attention from farmers and industries to reduce costs, human workload, and required resources. Nevertheless, achieving sufficient autonomous navigation capabilities requires the simultaneous cooperation of different processes; localization, mapping, and path planning are just some of the steps that aim at providing to the machine the right set of skills to operate in semi-structured and unstructured environments. In this context, this study presents a low-cost local motion planner for autonomous navigation in vineyards based only on an RGB-D camera, low range hardware, and a dual layer control algorithm. The first algorithm exploits the disparity map and its depth representation to generate a proportional control for the robotic platform. Concurrently, a second back-up algorithm, based on representations learning and resilient to illumination variations, can take control of the machine in case of a momentaneous failure of the first block. Moreover, due to the double nature of the system, after initial training of the deep learning model with an initial dataset, the strict synergy between the two algorithms opens the possibility of exploiting new automatically labeled data, coming from the field, to extend the existing model knowledge. The machine learning algorithm has been trained and tested, using transfer learning, with acquired images during different field surveys in the North region of Italy and then optimized for on-device inference with model pruning and quantization. Finally, the overall system has been validated with a customized robot platform in the relevant environment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge