Dexter Neo

VORD: Visual Ordinal Calibration for Mitigating Object Hallucinations in Large Vision-Language Models

Dec 20, 2024

Abstract: Large Vision-Language Models (LVLMs) have made remarkable developments along with the recent surge of large language models. Despite their advancements, LVLMs have a tendency to generate plausible yet inaccurate or inconsistent information based on the provided source content. This phenomenon, also known as "hallucinations", can have serious downstream implications during the deployment of LVLMs. To address this, we present VORD, a simple and effective method that alleviates hallucinations by calibrating token predictions based on ordinal relationships between modified image pairs. VORD is presented in two forms: 1) a minimalist training-free variant which eliminates implausible tokens from modified image pairs, and 2) a trainable objective function that penalizes unlikely tokens. Our experiments demonstrate that VORD delivers better calibration and effectively mitigates object hallucinations on a wide range of LVLM benchmarks.

FER-C: Benchmarking Out-of-Distribution Soft Calibration for Facial Expression Recognition

Dec 16, 2023

Abstract: We present a soft benchmark for calibrating facial expression recognition (FER). While prior works have focused on identifying affective states, we find that FER models are uncalibrated. This is particularly true when out-of-distribution (OOD) shifts further exacerbate the ambiguity of facial expressions. While most OOD benchmarks provide hard labels, we argue that the ground-truth labels for evaluating FER models should be soft in order to better reflect the ambiguity behind facial behaviours. Our framework proposes soft labels that closely approximate the average information loss based on different types of OOD shifts. Finally, we show the benefits of calibration on five state-of-the-art FER algorithms tested on our benchmark.

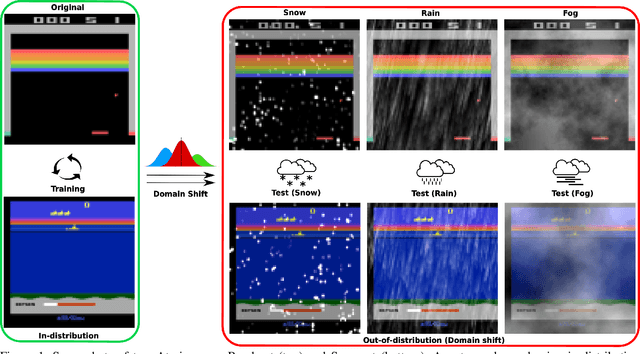

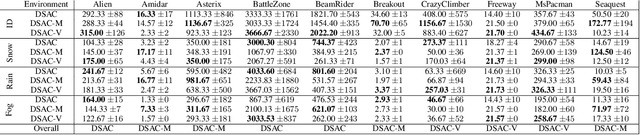

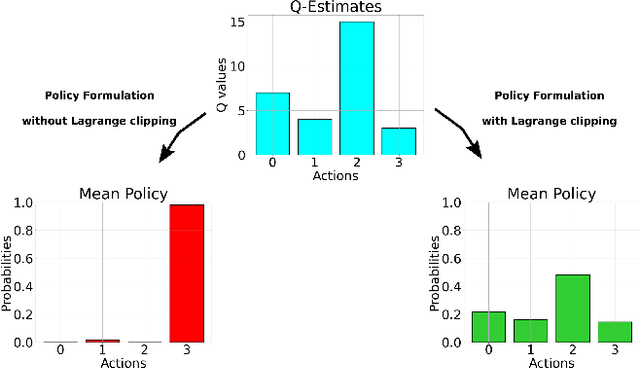

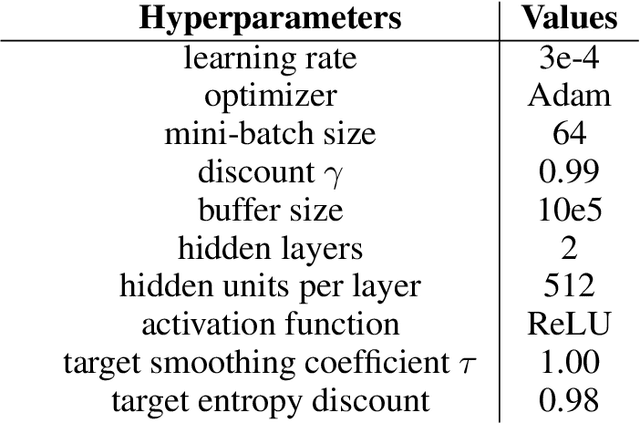

DSAC-C: Constrained Maximum Entropy for Robust Discrete Soft-Actor Critic

Oct 26, 2023

Abstract: We present a novel extension to the family of Soft Actor-Critic (SAC) algorithms. We argue that based on the Maximum Entropy Principle, discrete SAC can be further improved via additional statistical constraints derived from a surrogate critic policy. Furthermore, our findings suggest that these constraints provide added robustness against potential domain shifts, which is essential for the safe deployment of reinforcement learning agents in the real world. We provide theoretical analysis and show empirical results on low-data regimes for both in-distribution and out-of-distribution variants of Atari 2600 games.

MaxEnt Loss: Constrained Maximum Entropy for Calibration under Out-of-Distribution Shift

Oct 26, 2023

Abstract: We present a new loss function that addresses the out-of-distribution (OOD) calibration problem. While many objective functions have been proposed to effectively calibrate models in-distribution, our findings show that they do not always fare well OOD. Based on the Principle of Maximum Entropy, we incorporate helpful statistical constraints observed during training, delivering better model calibration without sacrificing accuracy. We provide theoretical analysis and show empirically that our method works well in practice, achieving state-of-the-art calibration on both synthetic and real-world benchmarks.

Morphset: Augmenting categorical emotion datasets with dimensional affect labels using face morphing

Mar 04, 2021

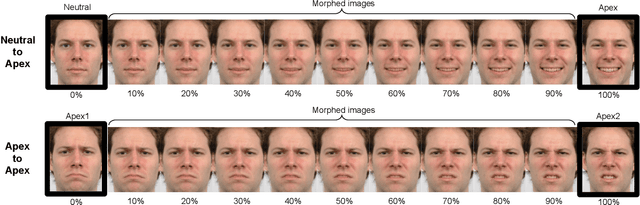

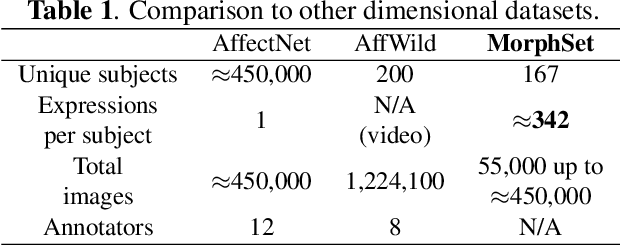

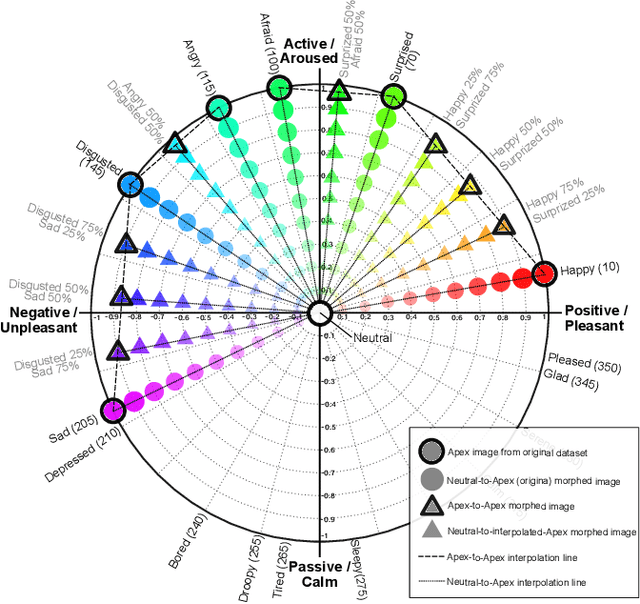

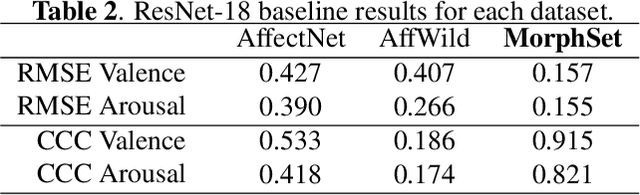

Abstract: Emotion recognition and understanding is a vital component in human-machine interaction. Dimensional models of affect, such as those using valence and arousal, have advantages over traditional categorical ones due to the complexity of emotional states in humans. However, dimensional emotion annotations are difficult and expensive to collect, and are therefore still limited in the affective computing community. To address these issues, we propose a method to generate synthetic images from existing categorical emotion datasets using face morphing, with full control over the resulting sample distribution as well as dimensional labels in the circumplex space, while achieving augmentation factors of at least 20x.
