Daniel Williamson

University of Exeter

stCEG: An R Package for Modelling Events over Spatial Areas Using Chain Event Graphs

Jul 09, 2025

Abstract: stCEG is an R package which allows a user to fully specify a Chain Event Graph (CEG) model from data and to produce interactive plots. It includes functions for the user to visualise spatial variables they wish to include in the model. There is also a web-based graphical user interface (GUI) provided, increasing ease of use for those without knowledge of R. We demonstrate stCEG using a dataset of homicides in London, which is included in the package. stCEG is the first software package for CEGs that allows for full model customisation.

On the meaning of uncertainty for ethical AI: philosophy and practice

Sep 11, 2023

Abstract: Whether and how data scientists, statisticians and modellers should be accountable for the AI systems they develop remains a controversial and highly debated topic, especially given the complexity of AI systems and the difficulties in comparing and synthesising competing claims arising from their deployment for data analysis. This paper proposes to address this issue by decreasing the opacity and heightening the accountability of decision making using AI systems, through the explicit acknowledgement of the statistical foundations that underpin their development and the ways in which these dictate how their results should be interpreted and acted upon by users. In turn, this enhances (1) the responsiveness of the models to feedback, (2) the quality and meaning of uncertainty on their outputs and (3) their transparency to evaluation. To exemplify this approach, we extend Posterior Belief Assessment to offer a route to belief ownership from complex and competing AI structures. We argue that this is a significant way to bring ethical considerations into mathematical reasoning, and to implement ethical AI in statistical practice. We demonstrate these ideas within the context of competing models used to advise the UK government on the spread of the Omicron variant of COVID-19 during December 2021.

Linked Deep Gaussian Process Emulation for Model Networks

Jun 02, 2023

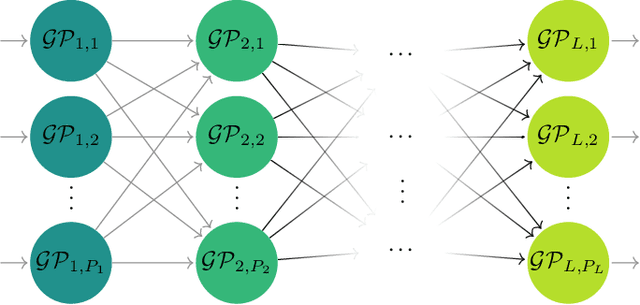

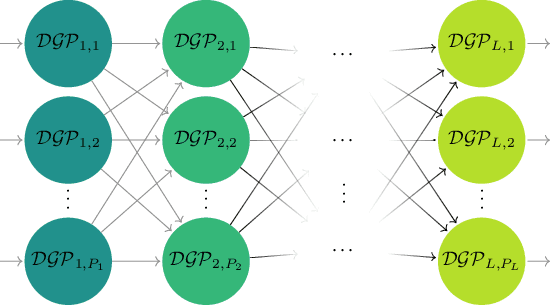

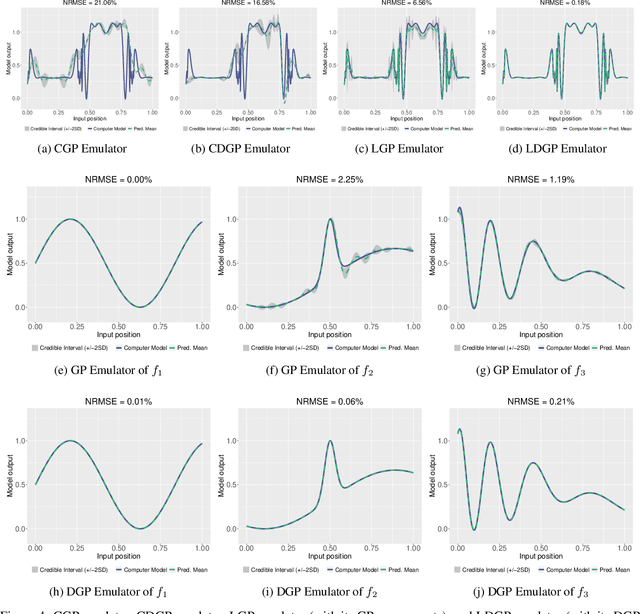

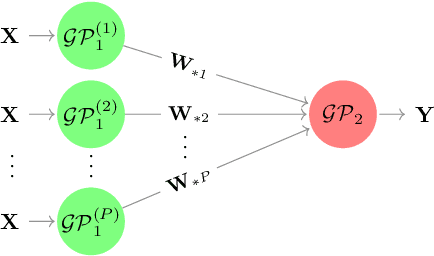

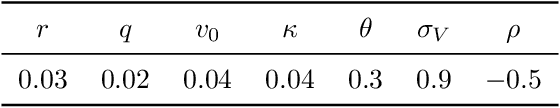

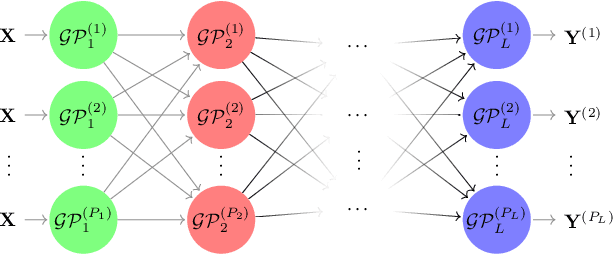

Abstract: Modern scientific problems are often multi-disciplinary and require integration of computer models from different disciplines, each with distinct functional complexities, programming environments, and computation times. Linked Gaussian process (LGP) emulation tackles this challenge through a divide-and-conquer strategy that integrates Gaussian process emulators of the individual computer models in a network. However, the required stationarity of the component Gaussian process emulators within the LGP framework limits its applicability in many real-world applications. In this work, we conceptualize a network of computer models as a deep Gaussian process with partial exposure of its hidden layers. We develop a method for inference for these partially exposed deep networks that retains a key strength of the LGP framework, whereby each model can be emulated separately using a DGP and then linked together. We show in both synthetic and empirical examples that our linked deep Gaussian process emulators exhibit significantly better predictive performance than standard LGP emulators in terms of accuracy and uncertainty quantification. They also outperform single DGPs fitted to the network as a whole because they are able to integrate information from the partially exposed hidden layers. Our methods are implemented in an R package $\texttt{dgpsi}$ that is freely available on CRAN.

Deep Gaussian Process Emulation using Stochastic Imputation

Jul 04, 2021

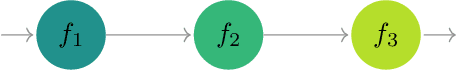

Abstract: We propose a novel deep Gaussian process (DGP) inference method for computer model emulation using stochastic imputation. By stochastically imputing the latent layers, the approach transforms the DGP into the linked GP, a state-of-the-art surrogate model formed by linking a system of feed-forward coupled GPs. This transformation yields a simple yet efficient DGP training procedure that only involves optimizations of conventional stationary GPs. In addition, the analytically tractable mean and variance of the linked GP allow one to implement predictions from DGP emulators in a fast and accurate manner. We demonstrate the method in a series of synthetic examples and real-world applications, and show that it is a competitive candidate for efficient DGP surrogate modeling in comparison to the variational inference and the fully-Bayesian approach. A $\texttt{Python}$ package $\texttt{dgpsi}$ implementing the method is also produced and available at https://github.com/mingdeyu/DGP.
