
Daniel Salnikov

Lifted Coefficient of Determination: Fast model-free prediction intervals and likelihood-free model comparison

Oct 11, 2024

Figures and Tables:

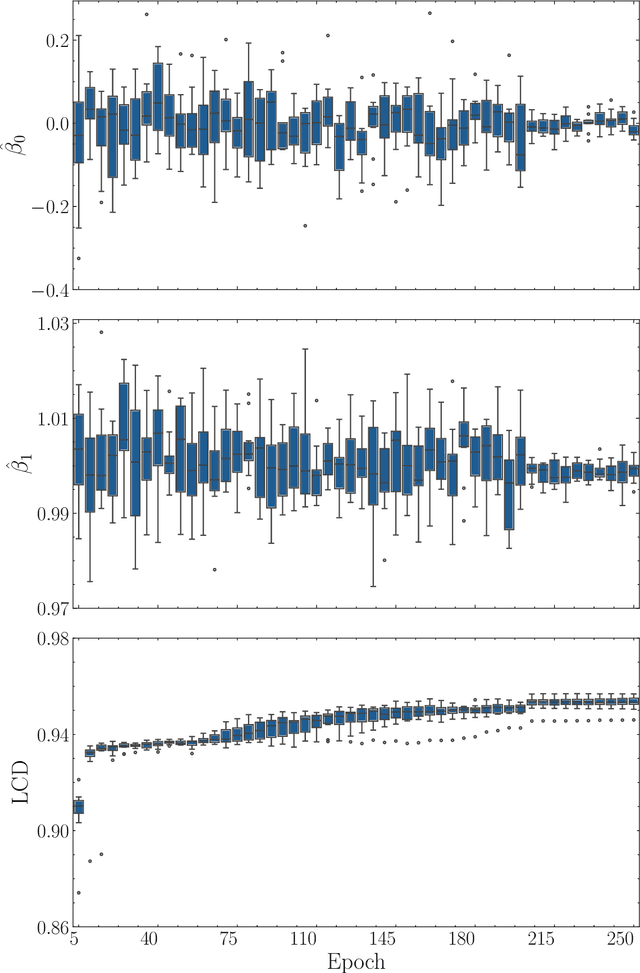

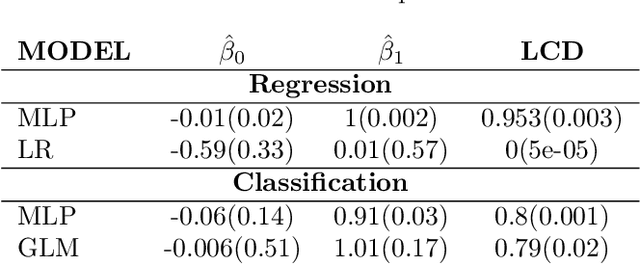

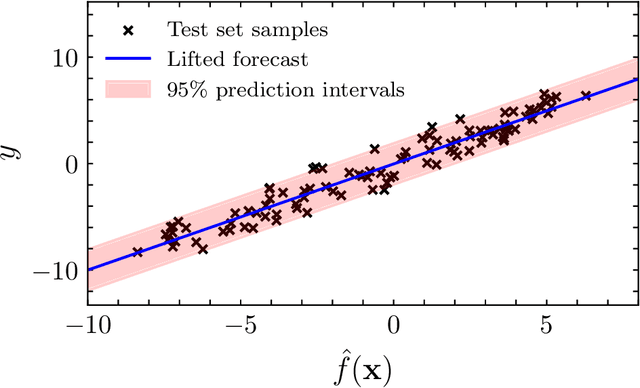

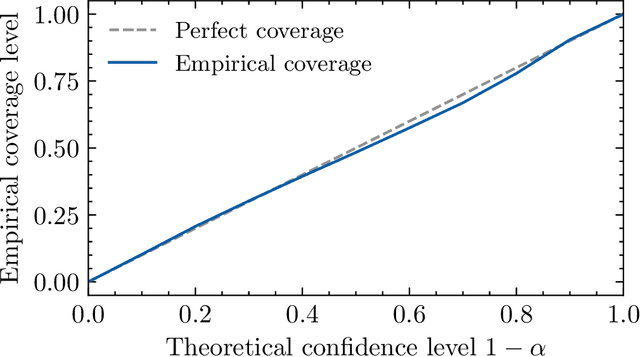

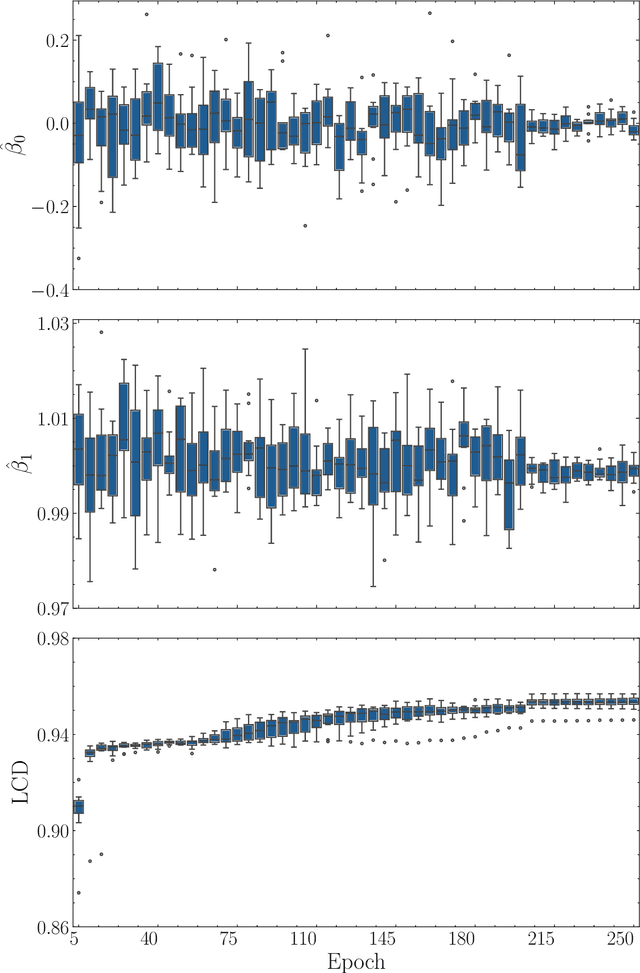

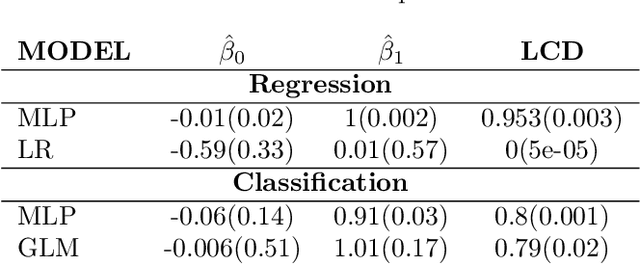

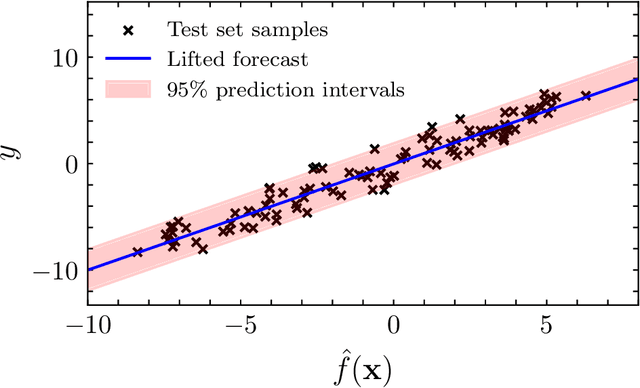

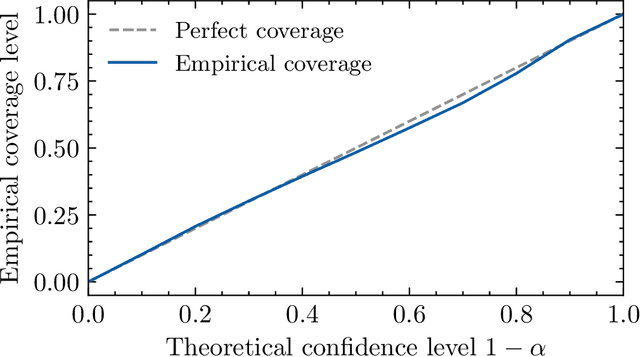

Abstract: We propose the *lifted linear model* and derive model-free prediction intervals that become tighter as the correlation between predictions and observations increases. These intervals motivate the *Lifted Coefficient of Determination*, a model comparison criterion for arbitrary loss functions in prediction-based settings, e.g., regression, classification, or counts. We extend the prediction intervals to more general error distributions and propose a fast model-free outlier detection algorithm for regression. Finally, we illustrate the framework via numerical experiments.

* 14 pages, 5 figures
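The abstract does not define the lifted linear model, so the sketch below is *not* the paper's method. It is only a generic illustration of the stated property that intervals tighten as the correlation between predictions and observations grows. It assumes a simple linear recalibration of observations on a model's predictions with roughly Gaussian residuals, and it ignores parameter-estimation uncertainty (no leverage term, a normal quantile instead of a t quantile). The helper names `squared_correlation` and `recalibrated_interval` are hypothetical.

```python
import math
from statistics import NormalDist


def _moments(preds, obs):
    """Return n, means, and centered sums of squares/cross-products."""
    n = len(preds)
    if n != len(obs) or n < 3:
        raise ValueError("need at least 3 paired predictions and observations")
    mp = sum(preds) / n
    mo = sum(obs) / n
    sxy = sum((p - mp) * (o - mo) for p, o in zip(preds, obs))
    sxx = sum((p - mp) ** 2 for p in preds)
    syy = sum((o - mo) ** 2 for o in obs)
    if sxx == 0 or syy == 0:
        raise ValueError("predictions and observations must both vary")
    return n, mp, mo, sxy, sxx, syy


def squared_correlation(preds, obs):
    """Squared Pearson correlation between predictions and observations."""
    _, _, _, sxy, sxx, syy = _moments(preds, obs)
    return sxy * sxy / (sxx * syy)


def recalibrated_interval(preds, obs, new_pred, level=0.95):
    """Hypothetical helper (assumption, not from the paper): regress obs on
    preds by least squares, then return a Gaussian-style interval."""
    n, mp, mo, sxy, sxx, syy = _moments(preds, obs)
    slope = sxy / sxx
    intercept = mo - slope * mp
    r2 = sxy * sxy / (sxx * syy)
    # The fit's residual sum of squares equals syy * (1 - r^2), so the
    # residual spread, and hence the interval width, shrinks as r^2 -> 1.
    resid_sd = math.sqrt(max(0.0, syy * (1.0 - r2)) / (n - 2))
    z = NormalDist().inv_cdf(0.5 + level / 2)
    center = intercept + slope * new_pred
    return center - z * resid_sd, center + z * resid_sd
```

For example, with the same predictions `[1, 2, 3, 4, 5]`, observations that track them closely (`[1.1, 1.9, 3.05, 4.0, 4.95]`) yield a narrower interval at a new prediction than loosely related observations (`[2, 1, 4, 3, 5]`, squared correlation 0.64). The paper's model-free construction may differ substantially from this parametric shortcut.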
