Cihui Pan

EDM: Efficient Deep Feature Matching

Mar 07, 2025

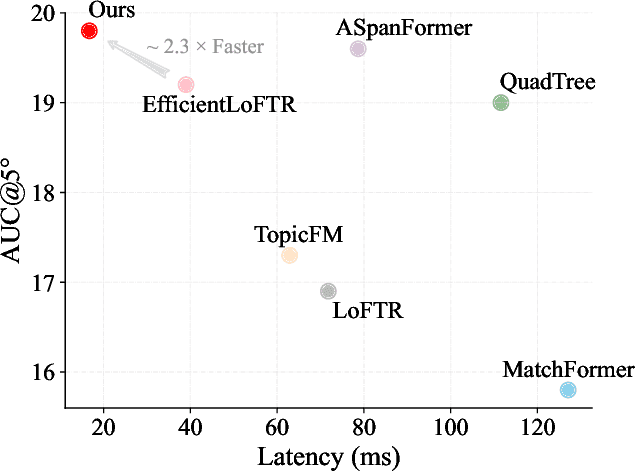

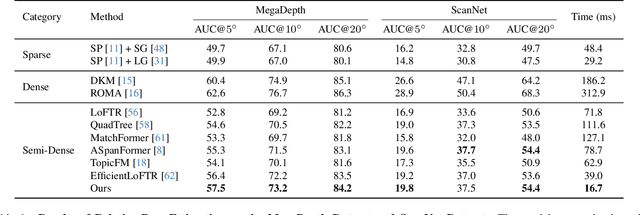

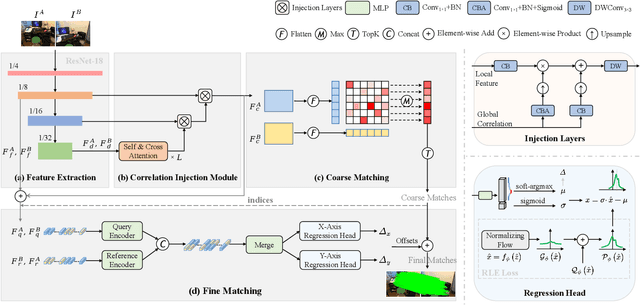

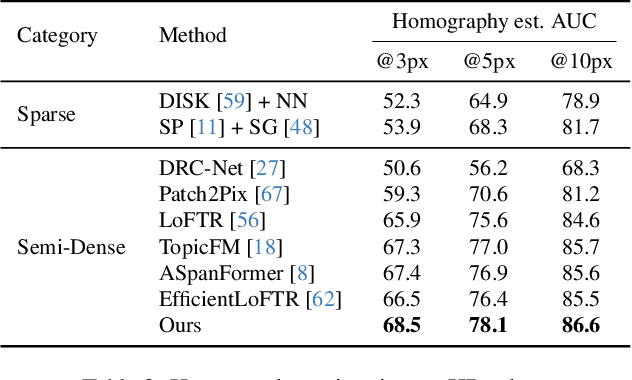

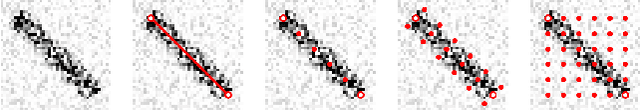

Abstract: Recent feature matching methods have achieved remarkable performance but give little consideration to efficiency. In this paper, we revisit the mainstream detector-free matching pipeline and improve every stage with both accuracy and efficiency in mind. We propose an Efficient Deep feature Matching network, EDM. We first adopt a deeper CNN with fewer dimensions to extract multi-level features. We then present a Correlation Injection Module that performs feature transformation on high-level deep features and progressively injects feature correlations from global to local for efficient multi-scale feature aggregation, improving both speed and performance. In the refinement stage, a novel lightweight bidirectional axis-based regression head is designed to directly predict subpixel-level correspondences from latent features, avoiding the significant computational cost of explicitly locating keypoints on high-resolution local feature heatmaps. Moreover, effective selection strategies are introduced to enhance matching accuracy. Extensive experiments show that EDM achieves competitive matching accuracy on various benchmarks and exhibits excellent efficiency, offering valuable best practices for real-world applications. The code is available at https://github.com/chicleee/EDM.
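The efficiency argument for axis-based regression is easier to see with a small sketch. Rather than building a full 2D heatmap over an H×W local window and locating its peak, one can predict one 1D distribution along x and one along y and take the expectation of each (an axis-wise soft-argmax), which costs O(W + H) instead of O(W·H). The code below is a minimal, hypothetical illustration of that generic idea under the assumption that the head outputs per-axis logits; it is not EDM's actual regression head, and the `axis_soft_argmax` function and its `origin` parameter are invented for illustration.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a 1D sequence of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def axis_soft_argmax(
    x_logits: Sequence[float],
    y_logits: Sequence[float],
    origin: Tuple[float, float] = (0.0, 0.0),
) -> Tuple[float, float]:
    """Hypothetical axis-wise soft-argmax.

    Each axis gets its own 1D probability distribution over bin indices;
    the expected index along each axis is a subpixel coordinate inside the
    local window. `origin` (an assumed parameter) shifts the result from
    window coordinates back to full-image coordinates.
    """
    px = softmax(x_logits)
    py = softmax(y_logits)
    x = sum(i * p for i, p in enumerate(px))  # expected column index
    y = sum(j * p for j, p in enumerate(py))  # expected row index
    return x + origin[0], y + origin[1]
```

For example, `axis_soft_argmax([0.0, 5.0, 5.0, 0.0], [10.0, 0.0, 0.0])` places x halfway between bins 1 and 2 (1.5) and y very close to bin 0, a subpixel location recovered without ever forming the 4×3 joint heatmap.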

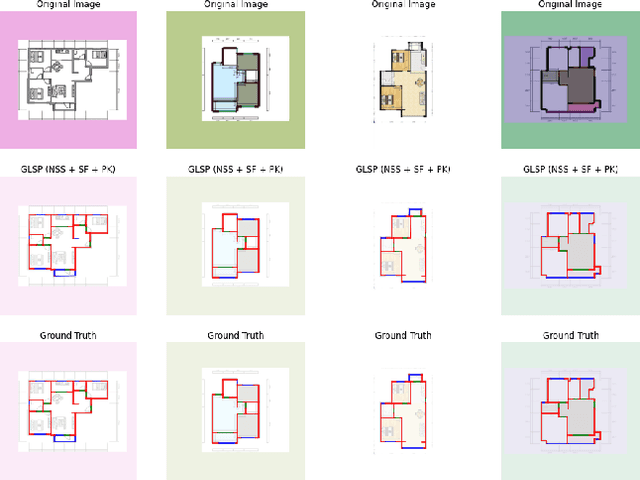

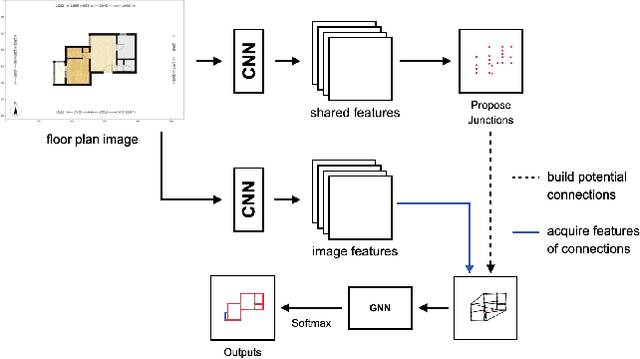

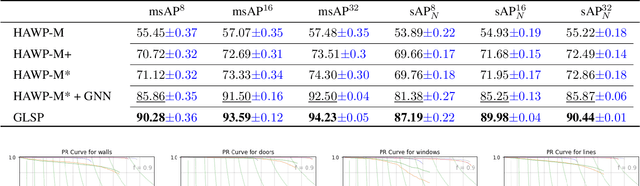

Parsing Line Segments of Floor Plan Images Using Graph Neural Networks

Mar 07, 2023

Abstract: In this paper, we present a GNN-based Line Segment Parser (GLSP), which uses a junction heatmap to predict the endpoints of line segments and graph neural networks to extract the line segments and their categories. Unlike previous floor plan recognition methods, which rely on semantic segmentation, our method outputs vectorized line segments directly and requires fewer post-processing steps before practical use. Our experiments show that the method outperforms state-of-the-art line segment detection models on multi-class line segment detection in floor plan images. In this paper, we use our own floor plan dataset, Large-scale Residential Floor Plan data (LRFP), which contains a total of 271,035 floor plan images. The labels for each image contain scale information, the categories and outlines of rooms, and the endpoint positions of line segments such as doors, windows, and walls. Our augmentation method makes the dataset adaptable to the drawing styles of as many countries and regions as possible.
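The first stage described above, turning a junction heatmap into discrete endpoints that a graph network can then connect, can be sketched with generic local-maximum extraction (non-maximum suppression over a small neighborhood). The sketch below is an assumption-laden illustration, not GLSP's implementation: the threshold, the neighborhood `radius`, and the idea of enumerating every junction pair as a candidate edge for a downstream GNN to classify are all hypothetical choices, and `extract_junctions` / `candidate_edges` are invented names.

```python
from itertools import combinations
from typing import List, Sequence, Tuple


def is_local_max(heatmap: Sequence[Sequence[float]], r: int, c: int, radius: int) -> bool:
    """True if no neighbor within `radius` (clipped at borders) is strictly larger."""
    h, w = len(heatmap), len(heatmap[0])
    v = heatmap[r][c]
    for rr in range(max(0, r - radius), min(h, r + radius + 1)):
        for cc in range(max(0, c - radius), min(w, c + radius + 1)):
            if heatmap[rr][cc] > v:
                return False
    return True


def extract_junctions(
    heatmap: Sequence[Sequence[float]], threshold: float = 0.5, radius: int = 1
) -> List[Tuple[int, int]]:
    """Hypothetical peak extraction: keep (row, col) cells that pass the
    confidence threshold and are local maxima. Adjacent cells with exactly
    equal scores are both kept; a real pipeline might deduplicate them."""
    peaks = []
    for r, row in enumerate(heatmap):
        for c, v in enumerate(row):
            if v >= threshold and is_local_max(heatmap, r, c, radius):
                peaks.append((r, c))
    return peaks


def candidate_edges(junctions: Sequence[Tuple[int, int]]) -> List[Tuple[int, int]]:
    """Assumed graph construction: every unordered pair of junctions is a
    candidate line segment (an edge whose class a GNN would then predict)."""
    return list(combinations(range(len(junctions)), 2))
```

Enumerating all pairs grows quadratically with the number of junctions; practical systems typically prune candidates (for example by distance or orientation) before classification, but that pruning is not described in the abstract.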

Multi-view Inverse Rendering for Large-scale Real-world Indoor Scenes

Nov 22, 2022

Abstract: We present a multi-view inverse rendering method for large-scale real-world indoor scenes that reconstructs global illumination and physically reasonable SVBRDFs. Unlike previous representations, which simplify the global illumination of large scenes as multiple environment maps, we propose a compact representation called Texture-based Lighting (TBL). It consists of 3D meshes and HDR textures, and efficiently models direct and infinite-bounce indirect lighting across the entire large scene. Based on TBL, we further propose a hybrid lighting representation with precomputed irradiance, which significantly improves efficiency and alleviates rendering noise in material optimization. To physically disentangle the ambiguity between materials, we propose a three-stage material optimization strategy based on semantic segmentation and room segmentation priors. Extensive experiments show that the proposed method outperforms state-of-the-art methods quantitatively and qualitatively, and enables physically reasonable mixed-reality applications such as material editing, editable novel view synthesis, and relighting. The project page is at https://lzleejean.github.io/IRTex.
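Why precomputed irradiance speeds up material optimization can be shown with the standard Lambertian case. Irradiance at a surface point is E = ∫ L_i(ω) cos θ dω over the hemisphere, and the outgoing diffuse radiance is (albedo / π) · E. If E is estimated once and cached, each optimization step that changes only the albedo needs just a multiply instead of a fresh noisy Monte Carlo integral. The sketch below assumes a purely diffuse surface in a local frame whose normal is +z and uses cosine-weighted hemisphere sampling (for which the estimator reduces to π times the mean sampled radiance); it does not reproduce the paper's hybrid TBL representation or its SVBRDF model, and all function names are hypothetical.

```python
import math
import random
from typing import Callable, Tuple

Vec3 = Tuple[float, float, float]


def sample_cosine_hemisphere(rng: random.Random) -> Vec3:
    """Cosine-weighted direction on the +z hemisphere (pdf = cos(theta) / pi)."""
    u1, u2 = rng.random(), rng.random()
    r = math.sqrt(u1)
    phi = 2.0 * math.pi * u2
    z = math.sqrt(max(0.0, 1.0 - u1))  # cos(theta)
    return (r * math.cos(phi), r * math.sin(phi), z)


def estimate_irradiance(
    radiance_fn: Callable[[Vec3], float], n_samples: int = 1024, seed: int = 0
) -> float:
    """Monte Carlo estimate of E = integral of L_i(w) cos(theta) dw.

    With cosine-weighted samples, L_i * cos / pdf = pi * L_i, so the
    estimator is pi times the average sampled radiance.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        total += radiance_fn(sample_cosine_hemisphere(rng))
    return math.pi * total / n_samples


def diffuse_radiance(albedo: float, irradiance: float) -> float:
    """Lambertian outgoing radiance from a cached irradiance value."""
    return albedo / math.pi * irradiance
```

Under constant incoming radiance of 1, the estimate is exactly π, so an albedo of 0.5 yields outgoing radiance 0.5. In an optimization loop, `estimate_irradiance` would run once per surface point (or texel) while `diffuse_radiance` runs at every step.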
