Christian Tetzlaff

Adding numbers with spiking neural circuits on neuromorphic hardware

Mar 13, 2025Abstract:Progress in neuromorphic computing requires efficient implementation of standard computational problems, like adding numbers. Here we implement one sequential and two parallel binary adders in the Lava software framework, and deploy them to the neuromorphic chip Loihi 2. We describe the time complexity, neuron and synaptic resources, as well as constraints on the bit width of the numbers that can be added with the current implementations. Further, we measure the time required for the addition operation on-chip. Importantly, we encounter trade-offs in terms of time complexity and required chip resources for the three considered adders. While sequential adders have linear time complexity $\bf\mathcal{O}(n)$ and require a linearly increasing number of neurons and synapses with number of bits $n$, the parallel adders have constant time complexity $\bf\mathcal{O}(1)$ and also require a linearly increasing number of neurons, but nonlinearly increasing synaptic resources (scaling with $\bf n^2$ or $\bf n \sqrt{n}$). This trade-off between compute time and chip resources may inform decisions in application development, and the implementations we provide may serve as a building block for further progress towards efficient neuromorphic algorithms.

Robust Computation with Intrinsic Heterogeneity

Dec 06, 2024Abstract:Intrinsic within-type neuronal heterogeneity is a ubiquitous feature of biological systems, with well-documented computational advantages. Recent works in machine learning have incorporated such diversities by optimizing neuronal parameters alongside synaptic connections and demonstrated state-of-the-art performance across common benchmarks. However, this performance gain comes at the cost of significantly higher computational costs, imposed by a larger parameter space. Furthermore, it is unclear how the neuronal parameters, constrained by the biophysics of their surroundings, are globally orchestrated to minimize top-down errors. To address these challenges, we postulate that neurons are intrinsically diverse, and investigate the computational capabilities of such heterogeneous neuronal parameters. Our results show that intrinsic heterogeneity, viewed as a fixed quenched disorder, often substantially improves performance across hundreds of temporal tasks. Notably, smaller but heterogeneous networks outperform larger homogeneous networks, despite consuming less data. We elucidate the underlying mechanisms driving this performance boost and illustrate its applicability to both rate and spiking dynamics. Moreover, our findings demonstrate that heterogeneous networks are highly resilient to severe alterations in their recurrent synaptic hyperparameters, and even recurrent connections removal does not compromise performance. The remarkable effectiveness of heterogeneous networks with small sizes and relaxed connectivity is particularly relevant for the neuromorphic community, which faces challenges due to device-to-device variability. Furthermore, understanding the mechanism of robust computation with heterogeneity also benefits neuroscientists and machine learners.

Plastic Arbor: a modern simulation framework for synaptic plasticity $\unicode{x2013}$ from single synapses to networks of morphological neurons

Nov 25, 2024

Abstract:Arbor is a software library designed for efficient simulation of large-scale networks of biological neurons with detailed morphological structures. It combines customizable neuronal and synaptic mechanisms with high-performance computing, supporting multi-core CPU and GPU systems. In humans and other animals, synaptic plasticity processes play a vital role in cognitive functions, including learning and memory. Recent studies have shown that intracellular molecular processes in dendrites significantly influence single-neuron dynamics. However, for understanding how the complex interplay between dendrites and synaptic processes influences network dynamics, computational modeling is required. To enable the modeling of large-scale networks of morphologically detailed neurons with diverse plasticity processes, we have extended the Arbor library to the Plastic Arbor framework, supporting simulations of a large variety of spike-driven plasticity paradigms. To showcase the features of the new framework, we present examples of computational models, beginning with single-synapse dynamics, progressing to multi-synapse rules, and finally scaling up to large recurrent networks. While cross-validating our implementations by comparison with other simulators, we show that Arbor allows simulating plastic networks of multi-compartment neurons at nearly no additional cost in runtime compared to point-neuron simulations. Using the new framework, we have already been able to investigate the impact of dendritic structures on network dynamics across a timescale of several hours, showing a relation between the length of dendritic trees and the ability of the network to efficiently store information. By our extension of Arbor, we aim to provide a valuable tool that will support future studies on the impact of synaptic plasticity, especially, in conjunction with neuronal morphology, in large networks.

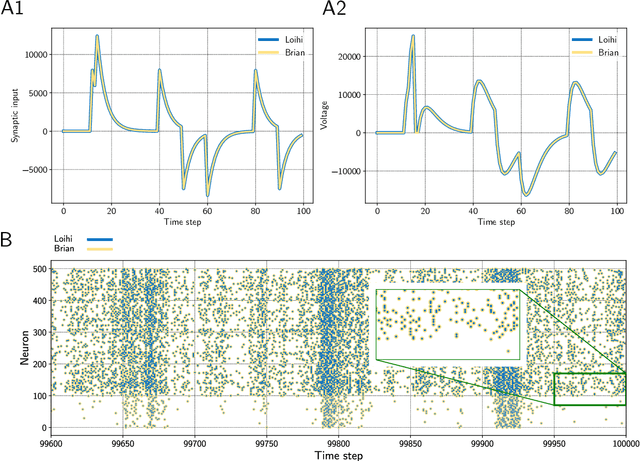

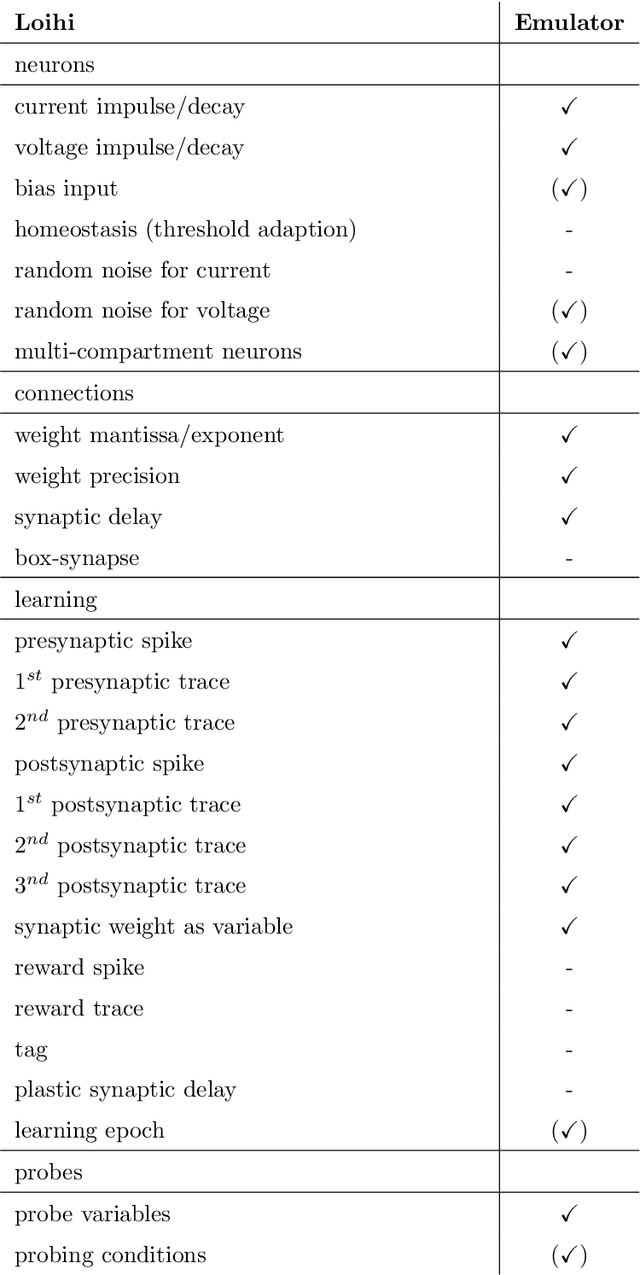

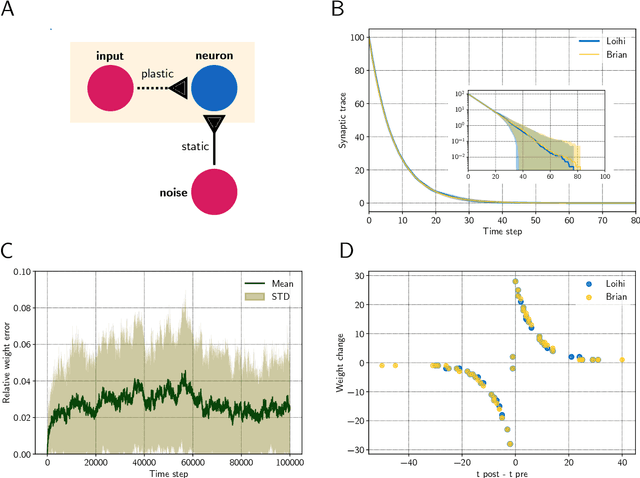

Brian2Loihi: An emulator for the neuromorphic chip Loihi using the spiking neural network simulator Brian

Sep 25, 2021

Abstract:Developing intelligent neuromorphic solutions remains a challenging endeavour. It requires a solid conceptual understanding of the hardware's fundamental building blocks. Beyond this, accessible and user-friendly prototyping is crucial to speed up the design pipeline. We developed an open source Loihi emulator based on the neural network simulator Brian that can easily be incorporated into existing simulation workflows. We demonstrate errorless Loihi emulation in software for a single neuron and for a recurrently connected spiking neural network. On-chip learning is also reviewed and implemented, with reasonable discrepancy due to stochastic rounding. This work provides a coherent presentation of Loihi's computational unit and introduces a new, easy-to-use Loihi prototyping package with the aim to help streamline conceptualisation and deployment of new algorithms.

Robust robotic control on the neuromorphic research chip Loihi

Aug 26, 2020

Abstract:Neuromorphic hardware has several promising advantages compared to von Neumann architectures and is highly interesting for robot control. However, despite the high speed and energy efficiency of neuromorphic computing, algorithms utilizing this hardware in control scenarios are still missing. One problem is the transition from fast spiking activity on the hardware, which acts on a timescale of a few milliseconds, to a control-relevant timescale on the order of hundreds of milliseconds. Another problem is to enable the execution of complex trajectories, requiring the spiking activity to contain sufficient variability, while at the same time, for reliable performance, network dynamics require adequate robustness against noise. In this study we exploit a recently developed biologically-inspired spiking neural network model, the so-called anisotropic network, as the basis for a neuromorphic algorithm for robotic control. For this, we identified and transferred the core principles of the anisotropic network to neuromorphic hardware using Intel's neuromorphic research chip Loihi and validated the system on trajectories from a motor-control task performed by a robot arm. We show that the anisotropic network on Loihi reliably encodes sequential patterns of neural activity, each representing a robotic action, and that the patterns allow the generation of multidimensional trajectories on control-relevant timescales. Taken together, our study presents a new algorithm that allows the control of complex robotic movements using state of the art neuromorphic hardware.

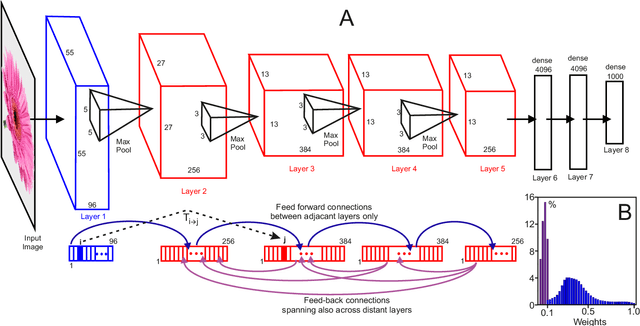

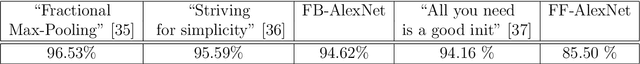

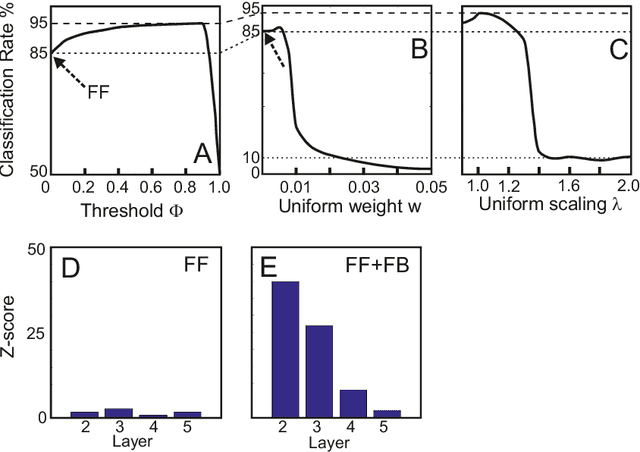

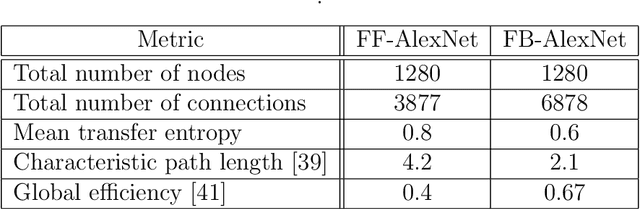

Transfer entropy-based feedback improves performance in artificial neural networks

Jun 22, 2017

Abstract:The structure of the majority of modern deep neural networks is characterized by uni- directional feed-forward connectivity across a very large number of layers. By contrast, the architecture of the cortex of vertebrates contains fewer hierarchical levels but many recurrent and feedback connections. Here we show that a small, few-layer artificial neural network that employs feedback will reach top level performance on a standard benchmark task, otherwise only obtained by large feed-forward structures. To achieve this we use feed-forward transfer entropy between neurons to structure feedback connectivity. Transfer entropy can here intuitively be understood as a measure for the relevance of certain pathways in the network, which are then amplified by feedback. Feedback may therefore be key for high network performance in small brain-like architectures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge