Christian David Marton

Efficient and robust multi-task learning in the brain with modular task primitives

May 28, 2021

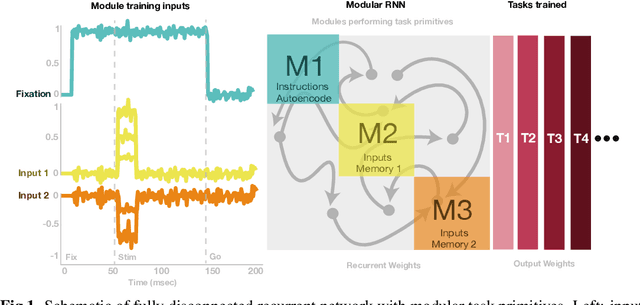

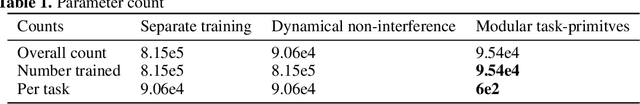

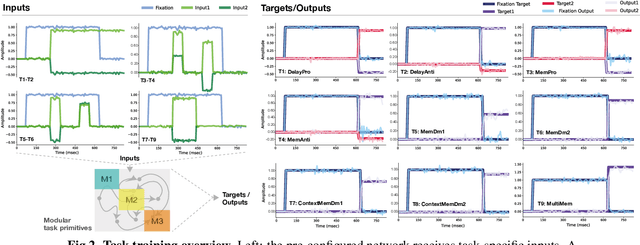

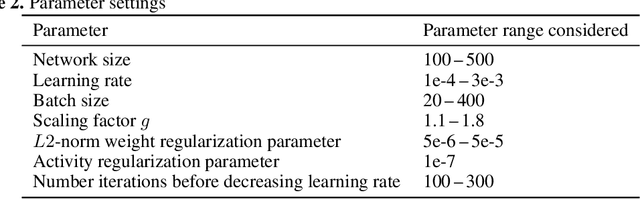

Abstract:In a real-world setting biological agents do not have infinite resources to learn new things. It is thus useful to recycle previously acquired knowledge in a way that allows for faster, less resource-intensive acquisition of multiple new skills. Neural networks in the brain are likely not entirely re-trained with new tasks, but how they leverage existing computations to learn new tasks is not well understood. In this work, we study this question in artificial neural networks trained on commonly used neuroscience paradigms. Building on recent work from the multi-task learning literature, we propose two ingredients: (1) network modularity, and (2) learning task primitives. Together, these ingredients form inductive biases we call structural and functional, respectively. Using a corpus of nine different tasks, we show that a modular network endowed with task primitives allows for learning multiple tasks well while keeping parameter counts, and updates, low. We also show that the skills acquired with our approach are more robust to a broad range of perturbations compared to those acquired with other multi-task learning strategies. This work offers a new perspective on achieving efficient multi-task learning in the brain, and makes predictions for novel neuroscience experiments in which targeted perturbations are employed to explore solution spaces.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge