ChengWei Hu

Cycle Contrastive Adversarial Learning for Unsupervised image Deraining

Jul 16, 2024

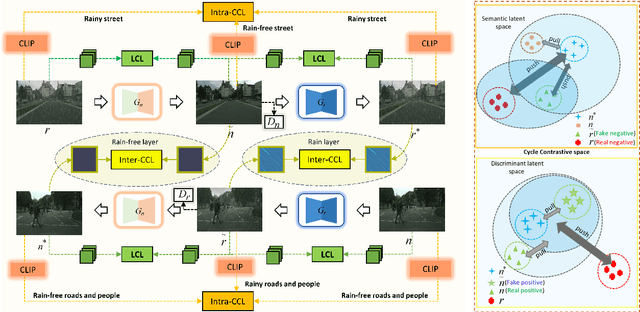

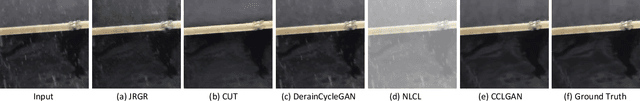

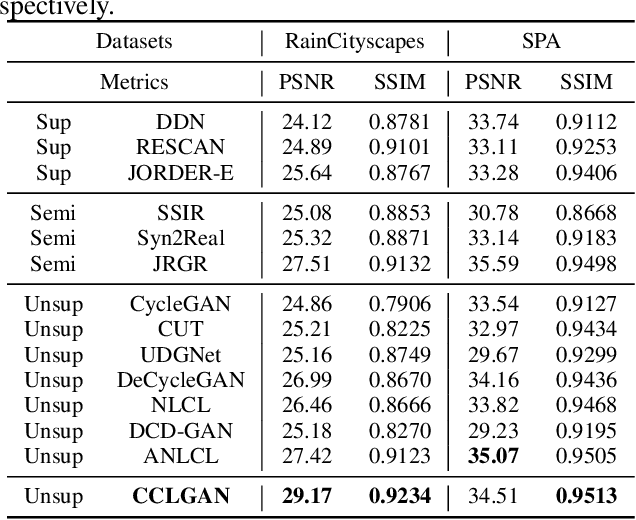

Abstract:To tackle the difficulties in fitting paired real-world data for single image deraining (SID), recent unsupervised methods have achieved notable success. However, these methods often struggle to generate high-quality, rain-free images due to a lack of attention to semantic representation and image content, resulting in ineffective separation of content from the rain layer. In this paper, we propose a novel cycle contrastive generative adversarial network for unsupervised SID, called CCLGAN. This framework combines cycle contrastive learning (CCL) and location contrastive learning (LCL). CCL improves image reconstruction and rain-layer removal by bringing similar features closer and pushing dissimilar features apart in both semantic and discriminative spaces. At the same time, LCL preserves content information by constraining mutual information at the same location across different exemplars. CCLGAN shows superior performance, as extensive experiments demonstrate the benefits of CCLGAN and the effectiveness of its components.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge