Cheng-Yang Chang

Andy

Learnable Mixed-precision and Dimension Reduction Co-design for Low-storage Activation

Jul 19, 2022

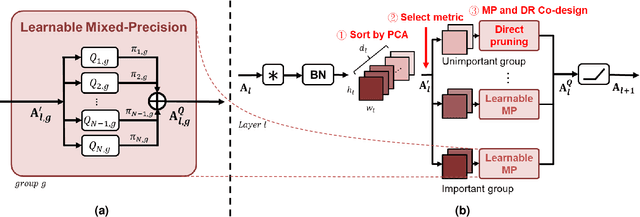

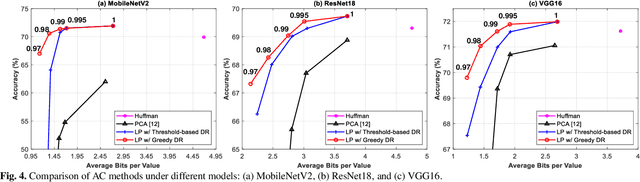

Abstract:Recently, deep convolutional neural networks (CNNs) have achieved many eye-catching results. However, deploying CNNs on resource-constrained edge devices is constrained by limited memory bandwidth for transmitting large intermediated data during inference, i.e., activation. Existing research utilizes mixed-precision and dimension reduction to reduce computational complexity but pays less attention to its application for activation compression. To further exploit the redundancy in activation, we propose a learnable mixed-precision and dimension reduction co-design system, which separates channels into groups and allocates specific compression policies according to their importance. In addition, the proposed dynamic searching technique enlarges search space and finds out the optimal bit-width allocation automatically. Our experimental results show that the proposed methods improve 3.54%/1.27% in accuracy and save 0.18/2.02 bits per value over existing mixed-precision methods on ResNet18 and MobileNetv2, respectively.

Compression-aware Projection with Greedy Dimension Reduction for Convolutional Neural Network Activations

Oct 17, 2021

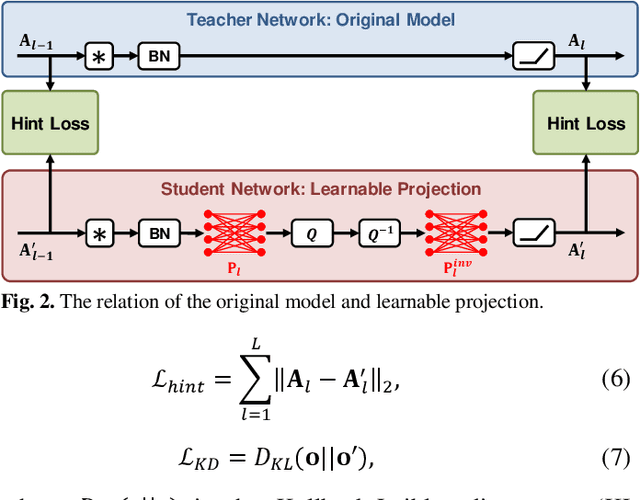

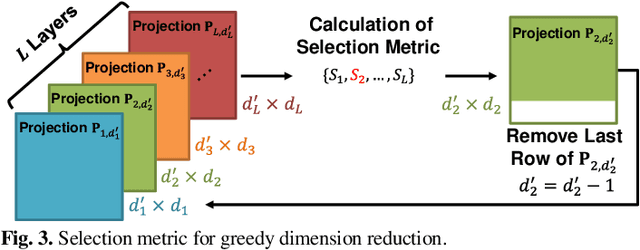

Abstract:Convolutional neural networks (CNNs) achieve remarkable performance in a wide range of fields. However, intensive memory access of activations introduces considerable energy consumption, impeding deployment of CNNs on resourceconstrained edge devices. Existing works in activation compression propose to transform feature maps for higher compressibility, thus enabling dimension reduction. Nevertheless, in the case of aggressive dimension reduction, these methods lead to severe accuracy drop. To improve the trade-off between classification accuracy and compression ratio, we propose a compression-aware projection system, which employs a learnable projection to compensate for the reconstruction loss. In addition, a greedy selection metric is introduced to optimize the layer-wise compression ratio allocation by considering both accuracy and #bits reduction simultaneously. Our test results show that the proposed methods effectively reduce 2.91x~5.97x memory access with negligible accuracy drop on MobileNetV2/ResNet18/VGG16.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge