Chaehyeon Lee

End-to-End Verifiable Decentralized Federated Learning

Apr 19, 2024

Abstract:Verifiable decentralized federated learning (FL) systems combining blockchains and zero-knowledge proofs (ZKP) make the computational integrity of local learning and global aggregation verifiable across workers. However, they are not end-to-end: data can still be corrupted prior to the learning. In this paper, we propose a verifiable decentralized FL system for end-to-end integrity and authenticity of data and computation extending verifiability to the data source. Addressing an inherent conflict of confidentiality and transparency, we introduce a two-step proving and verification (2PV) method that we apply to central system procedures: a registration workflow that enables non-disclosing verification of device certificates and a learning workflow that extends existing blockchain and ZKP-based FL systems through non-disclosing data authenticity proofs. Our evaluation on a prototypical implementation demonstrates the technical feasibility with only marginal overheads to state-of-the-art solutions.

Fair Comparison between Efficient Attentions

Jun 01, 2022

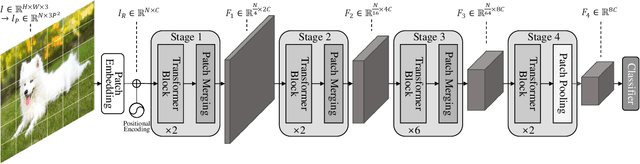

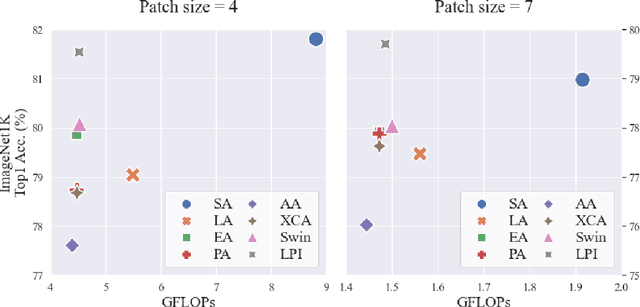

Abstract:Transformers have been successfully used in various fields and are becoming the standard tools in computer vision. However, self-attention, a core component of transformers, has a quadratic complexity problem, which limits the use of transformers in various vision tasks that require dense prediction. Many studies aiming at solving this problem have been reported proposed. However, no comparative study of these methods using the same scale has been reported due to different model configurations, training schemes, and new methods. In our paper, we validate these efficient attention models on the ImageNet1K classification task by changing only the attention operation and examining which efficient attention is better.

Training Domain-invariant Object Detector Faster with Feature Replay and Slow Learner

May 31, 2021

Abstract:In deep learning-based object detection on remote sensing domain, nuisance factors, which affect observed variables while not affecting predictor variables, often matters because they cause domain changes. Previously, nuisance disentangled feature transformation (NDFT) was proposed to build domain-invariant feature extractor with with knowledge of nuisance factors. However, NDFT requires enormous time in a training phase, so it has been impractical. In this paper, we introduce our proposed method, A-NDFT, which is an improvement to NDFT. A-NDFT utilizes two acceleration techniques, feature replay and slow learner. Consequently, on a large-scale UAVDT benchmark, it is shown that our framework can reduce the training time of NDFT from 31 hours to 3 hours while still maintaining the performance. The code will be made publicly available online.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge