Caixing Wang

Optimal Kernel Quantile Learning with Random Features

Aug 24, 2024Abstract:The random feature (RF) approach is a well-established and efficient tool for scalable kernel methods, but existing literature has primarily focused on kernel ridge regression with random features (KRR-RF), which has limitations in handling heterogeneous data with heavy-tailed noises. This paper presents a generalization study of kernel quantile regression with random features (KQR-RF), which accounts for the non-smoothness of the check loss in KQR-RF by introducing a refined error decomposition and establishing a novel connection between KQR-RF and KRR-RF. Our study establishes the capacity-dependent learning rates for KQR-RF under mild conditions on the number of RFs, which are minimax optimal up to some logarithmic factors. Importantly, our theoretical results, utilizing a data-dependent sampling strategy, can be extended to cover the agnostic setting where the target quantile function may not precisely align with the assumed kernel space. By slightly modifying our assumptions, the capacity-dependent error analysis can also be applied to cases with Lipschitz continuous losses, enabling broader applications in the machine learning community. To validate our theoretical findings, simulated experiments and a real data application are conducted.

Distributed High-Dimensional Quantile Regression: Estimation Efficiency and Support Recovery

May 13, 2024

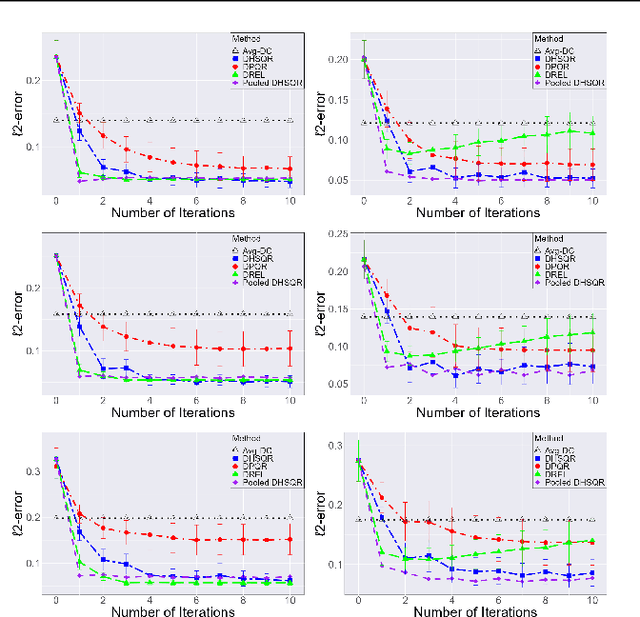

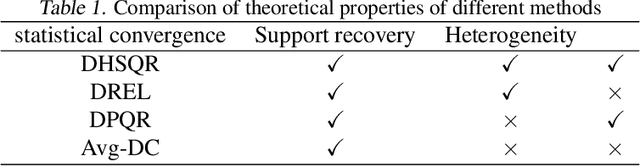

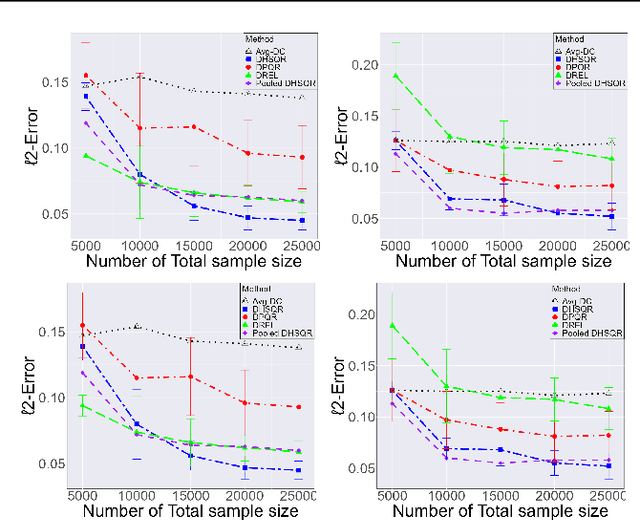

Abstract:In this paper, we focus on distributed estimation and support recovery for high-dimensional linear quantile regression. Quantile regression is a popular alternative tool to the least squares regression for robustness against outliers and data heterogeneity. However, the non-smoothness of the check loss function poses big challenges to both computation and theory in the distributed setting. To tackle these problems, we transform the original quantile regression into the least-squares optimization. By applying a double-smoothing approach, we extend a previous Newton-type distributed approach without the restrictive independent assumption between the error term and covariates. An efficient algorithm is developed, which enjoys high computation and communication efficiency. Theoretically, the proposed distributed estimator achieves a near-oracle convergence rate and high support recovery accuracy after a constant number of iterations. Extensive experiments on synthetic examples and a real data application further demonstrate the effectiveness of the proposed method.

Minimax Optimal Transfer Learning for Kernel-based Nonparametric Regression

Oct 21, 2023

Abstract:In recent years, transfer learning has garnered significant attention in the machine learning community. Its ability to leverage knowledge from related studies to improve generalization performance in a target study has made it highly appealing. This paper focuses on investigating the transfer learning problem within the context of nonparametric regression over a reproducing kernel Hilbert space. The aim is to bridge the gap between practical effectiveness and theoretical guarantees. We specifically consider two scenarios: one where the transferable sources are known and another where they are unknown. For the known transferable source case, we propose a two-step kernel-based estimator by solely using kernel ridge regression. For the unknown case, we develop a novel method based on an efficient aggregation algorithm, which can automatically detect and alleviate the effects of negative sources. This paper provides the statistical properties of the desired estimators and establishes the minimax optimal rate. Through extensive numerical experiments on synthetic data and real examples, we validate our theoretical findings and demonstrate the effectiveness of our proposed method.

Towards a Unified Analysis of Kernel-based Methods Under Covariate Shift

Oct 19, 2023Abstract:Covariate shift occurs prevalently in practice, where the input distributions of the source and target data are substantially different. Despite its practical importance in various learning problems, most of the existing methods only focus on some specific learning tasks and are not well validated theoretically and numerically. To tackle this problem, we propose a unified analysis of general nonparametric methods in a reproducing kernel Hilbert space (RKHS) under covariate shift. Our theoretical results are established for a general loss belonging to a rich loss function family, which includes many commonly used methods as special cases, such as mean regression, quantile regression, likelihood-based classification, and margin-based classification. Two types of covariate shift problems are the focus of this paper and the sharp convergence rates are established for a general loss function to provide a unified theoretical analysis, which concurs with the optimal results in literature where the squared loss is used. Extensive numerical studies on synthetic and real examples confirm our theoretical findings and further illustrate the effectiveness of our proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge