Bryar Shareef

NULLBUS: Multimodal Mixed-Supervision for Breast Ultrasound Segmentation via Nullable Global-Local Prompts

Dec 23, 2025Abstract:Breast ultrasound (BUS) segmentation provides lesion boundaries essential for computer-aided diagnosis and treatment planning. While promptable methods can improve segmentation performance and tumor delineation when text or spatial prompts are available, many public BUS datasets lack reliable metadata or reports, constraining training to small multimodal subsets and reducing robustness. We propose NullBUS, a multimodal mixed-supervision framework that learns from images with and without prompts in a single model. To handle missing text, we introduce nullable prompts, implemented as learnable null embeddings with presence masks, enabling fallback to image-only evidence when metadata are absent and the use of text when present. Evaluated on a unified pool of three public BUS datasets, NullBUS achieves a mean IoU of 0.8568 and a mean Dice of 0.9103, demonstrating state-of-the-art performance under mixed prompt availability.

XBusNet: Text-Guided Breast Ultrasound Segmentation via Multimodal Vision-Language Learning

Sep 08, 2025Abstract:Background: Precise breast ultrasound (BUS) segmentation supports reliable measurement, quantitative analysis, and downstream classification, yet remains difficult for small or low-contrast lesions with fuzzy margins and speckle noise. Text prompts can add clinical context, but directly applying weakly localized text-image cues (e.g., CAM/CLIP-derived signals) tends to produce coarse, blob-like responses that smear boundaries unless additional mechanisms recover fine edges. Methods: We propose XBusNet, a novel dual-prompt, dual-branch multimodal model that combines image features with clinically grounded text. A global pathway based on a CLIP Vision Transformer encodes whole-image semantics conditioned on lesion size and location, while a local U-Net pathway emphasizes precise boundaries and is modulated by prompts that describe shape, margin, and Breast Imaging Reporting and Data System (BI-RADS) terms. Prompts are assembled automatically from structured metadata, requiring no manual clicks. We evaluate on the Breast Lesions USG (BLU) dataset using five-fold cross-validation. Primary metrics are Dice and Intersection over Union (IoU); we also conduct size-stratified analyses and ablations to assess the roles of the global and local paths and the text-driven modulation. Results: XBusNet achieves state-of-the-art performance on BLU, with mean Dice of 0.8765 and IoU of 0.8149, outperforming six strong baselines. Small lesions show the largest gains, with fewer missed regions and fewer spurious activations. Ablation studies show complementary contributions of global context, local boundary modeling, and prompt-based modulation. Conclusions: A dual-prompt, dual-branch multimodal design that merges global semantics with local precision yields accurate BUS segmentation masks and improves robustness for small, low-contrast lesions.

Breast Ultrasound Tumor Classification Using a Hybrid Multitask CNN-Transformer Network

Aug 04, 2023

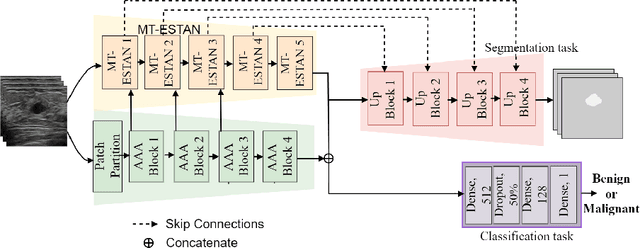

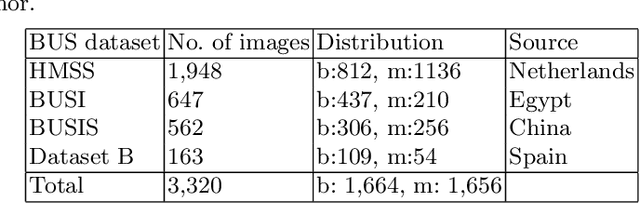

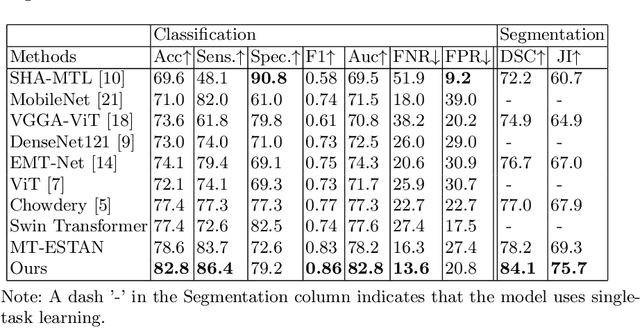

Abstract:Capturing global contextual information plays a critical role in breast ultrasound (BUS) image classification. Although convolutional neural networks (CNNs) have demonstrated reliable performance in tumor classification, they have inherent limitations for modeling global and long-range dependencies due to the localized nature of convolution operations. Vision Transformers have an improved capability of capturing global contextual information but may distort the local image patterns due to the tokenization operations. In this study, we proposed a hybrid multitask deep neural network called Hybrid-MT-ESTAN, designed to perform BUS tumor classification and segmentation using a hybrid architecture composed of CNNs and Swin Transformer components. The proposed approach was compared to nine BUS classification methods and evaluated using seven quantitative metrics on a dataset of 3,320 BUS images. The results indicate that Hybrid-MT-ESTAN achieved the highest accuracy, sensitivity, and F1 score of 82.7%, 86.4%, and 86.0%, respectively.

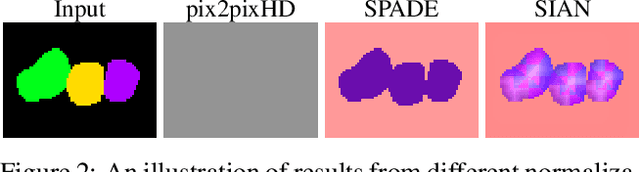

SIAN: Style-Guided Instance-Adaptive Normalization for Multi-Organ Histopathology Image Synthesis

Sep 02, 2022

Abstract:Existing deep networks for histopathology image synthesis cannot generate accurate boundaries for clustered nuclei and cannot output image styles that align with different organs. To address these issues, we propose a style-guided instance-adaptive normalization (SIAN) to synthesize realistic color distributions and textures for different organs. SIAN contains four phases, semantization, stylization, instantiation, and modulation. The four phases work together and are integrated into a generative network to embed image semantics, style, and instance-level boundaries. Experimental results demonstrate the effectiveness of all components in SIAN, and show that the proposed method outperforms the state-of-the-art conditional GANs for histopathology image synthesis using the Frechet Inception Distance (FID), structural similarity Index (SSIM), detection quality(DQ), segmentation quality(SQ), and panoptic quality(PQ). Furthermore, the performance of a segmentation network could be significantly improved by incorporating synthetic images generated using SIAN.

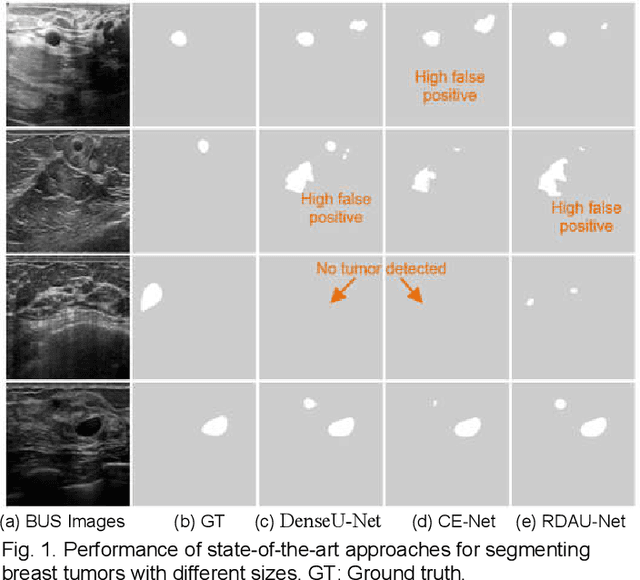

ESTAN: Enhanced Small Tumor-Aware Network for Breast Ultrasound Image Segmentation

Sep 27, 2020

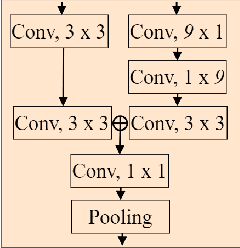

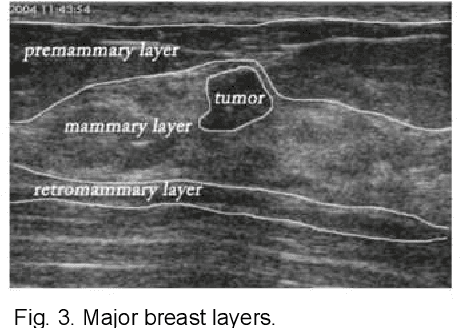

Abstract:Breast tumor segmentation is a critical task in computer-aided diagnosis (CAD) systems for breast cancer detection because accurate tumor size, shape and location are important for further tumor quantification and classification. However, segmenting small tumors in ultrasound images is challenging, due to the speckle noise, varying tumor shapes and sizes among patients, and the existence of tumor-like image regions. Recently, deep learning-based approaches have achieved great success for biomedical image analysis, but current state-of-the-art approaches achieve poor performance for segmenting small breast tumors. In this paper, we propose a novel deep neural network architecture, namely Enhanced Small Tumor-Aware Network (ESTAN), to accurately and robustly segment breast tumors. ESTAN introduces two encoders to extract and fuse image context information at different scales and utilizes row-column-wise kernels in the encoder to adapt to breast anatomy. We validate the proposed approach and compare it to nine state-of-the-art approaches on three public breast ultrasound datasets using seven quantitative metrics. The results demonstrate that the proposed approach achieves the best overall performance and outperforms all other approaches on small tumor segmentation.

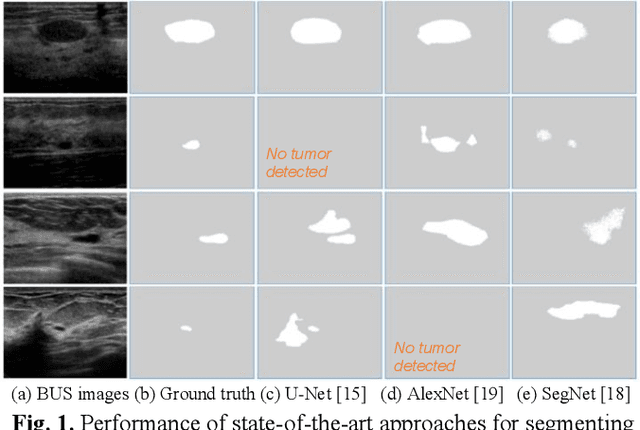

Stan: Small tumor-aware network for breast ultrasound image segmentation

Feb 03, 2020

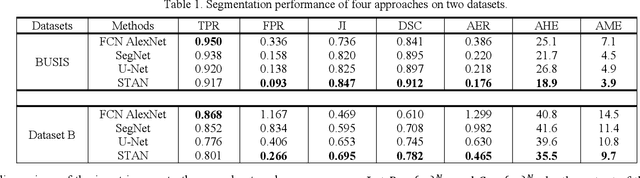

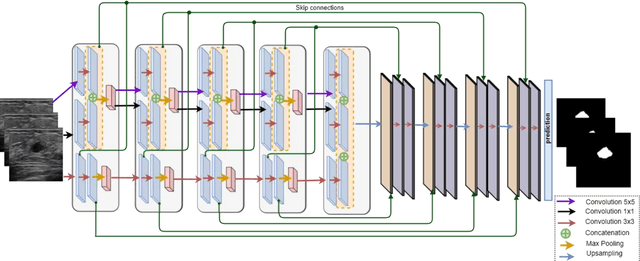

Abstract:Breast tumor segmentation provides accurate tumor boundary, and serves as a key step toward further cancer quantification. Although deep learning-based approaches have been proposed and achieved promising results, existing approaches have difficulty in detecting small breast tumors. The capacity to detecting small tumors is particularly important in finding early stage cancers using computer-aided diagnosis (CAD) systems. In this paper, we propose a novel deep learning architecture called Small Tumor-Aware Network (STAN), to improve the performance of segmenting tumors with different size. The new architecture integrates both rich context information and high-resolution image features. We validate the proposed approach using seven quantitative metrics on two public breast ultrasound datasets. The proposed approach outperformed the state-of-the-art approaches in segmenting small breast tumors. Index

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge