Bobbili Veerendra Raj Kumar

Class adaptive threshold and negative class guided noisy annotation robust Facial Expression Recognition

May 03, 2023

Abstract:The hindering problem in facial expression recognition (FER) is the presence of inaccurate annotations referred to as noisy annotations in the datasets. These noisy annotations are present in the datasets inherently because the labeling is subjective to the annotator, clarity of the image, etc. Recent works use sample selection methods to solve this noisy annotation problem in FER. In our work, we use a dynamic adaptive threshold to separate confident samples from non-confident ones so that our learning won't be hampered due to non-confident samples. Instead of discarding the non-confident samples, we impose consistency in the negative classes of those non-confident samples to guide the model to learn better in the positive class. Since FER datasets usually come with 7 or 8 classes, we can correctly guess a negative class by 85% probability even by choosing randomly. By learning "which class a sample doesn't belong to", the model can learn "which class it belongs to" in a better manner. We demonstrate proposed framework's effectiveness using quantitative as well as qualitative results. Our method performs better than the baseline by a margin of 4% to 28% on RAFDB and 3.3% to 31.4% on FERPlus for various levels of synthetic noisy labels in the aforementioned datasets.

ABAW : Facial Expression Recognition in the wild

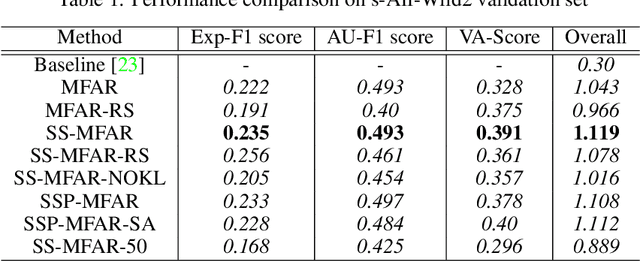

Mar 17, 2023Abstract:The fifth Affective Behavior Analysis in-the-wild (ABAW) competition has multiple challenges such as Valence-Arousal Estimation Challenge, Expression Classification Challenge, Action Unit Detection Challenge, Emotional Reaction Intensity Estimation Challenge. In this paper we have dealt only expression classification challenge using multiple approaches such as fully supervised, semi-supervised and noisy label approach. Our approach using noise aware model has performed better than baseline model by 10.46% and semi supervised model has performed better than baseline model by 9.38% and the fully supervised model has performed better than the baseline by 9.34%

Dynamic Adaptive Threshold based Learning for Noisy Annotations Robust Facial Expression Recognition

Aug 22, 2022

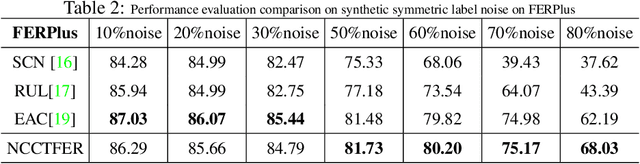

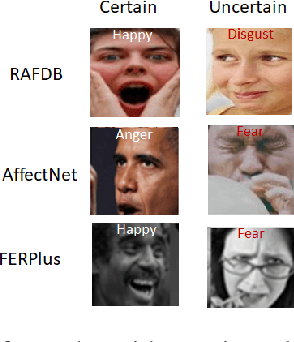

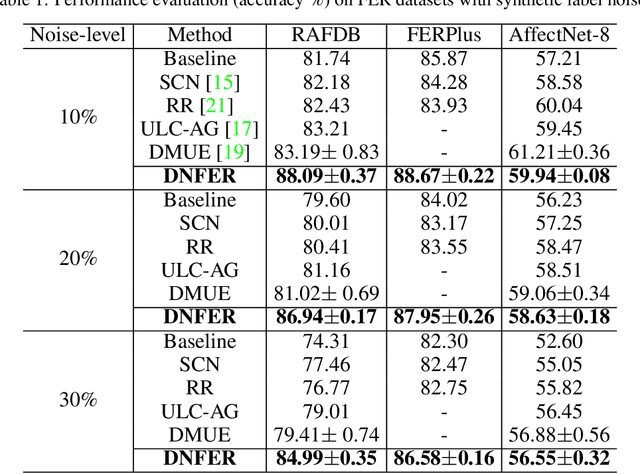

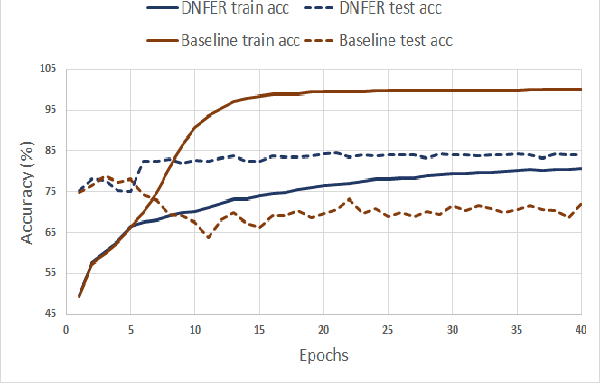

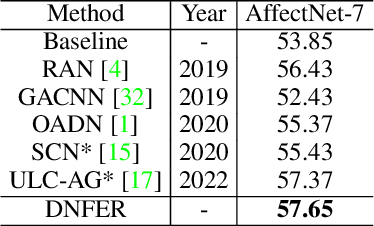

Abstract:The real-world facial expression recognition (FER) datasets suffer from noisy annotations due to crowd-sourcing, ambiguity in expressions, the subjectivity of annotators and inter-class similarity. However, the recent deep networks have strong capacity to memorize the noisy annotations leading to corrupted feature embedding and poor generalization. To handle noisy annotations, we propose a dynamic FER learning framework (DNFER) in which clean samples are selected based on dynamic class specific threshold during training. Specifically, DNFER is based on supervised training using selected clean samples and unsupervised consistent training using all the samples. During training, the mean posterior class probabilities of each mini-batch is used as dynamic class-specific threshold to select the clean samples for supervised training. This threshold is independent of noise rate and does not need any clean data unlike other methods. In addition, to learn from all samples, the posterior distributions between weakly-augmented image and strongly-augmented image are aligned using an unsupervised consistency loss. We demonstrate the robustness of DNFER on both synthetic as well as on real noisy annotated FER datasets like RAFDB, FERPlus, SFEW and AffectNet.

SS-MFAR : Semi-supervised Multi-task Facial Affect Recognition

Jul 19, 2022

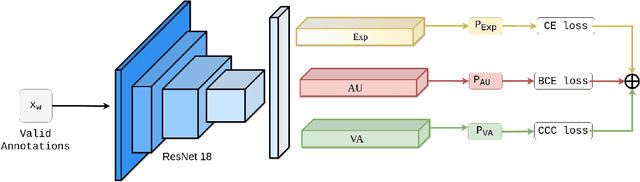

Abstract:Automatic affect recognition has applications in many areas such as education, gaming, software development, automotives, medical care, etc. but it is non trivial task to achieve appreciable performance on in-the-wild data sets. In-the-wild data sets though represent real-world scenarios better than synthetic data sets, the former ones suffer from the problem of incomplete labels. Inspired by semi-supervised learning, in this paper, we introduce our submission to the Multi-Task-Learning Challenge at the 4th Affective Behavior Analysis in-the-wild (ABAW) 2022 Competition. The three tasks that are considered in this challenge are valence-arousal(VA) estimation, classification of expressions into 6 basic (anger, disgust, fear, happiness, sadness, surprise), neutral, and the 'other' category and 12 action units(AU) numbered AU-\{1,2,4,6,7,10,12,15,23,24,25,26\}. Our method Semi-supervised Multi-task Facial Affect Recognition titled \textbf{SS-MFAR} uses a deep residual network with task specific classifiers for each of the tasks along with adaptive thresholds for each expression class and semi-supervised learning for the incomplete labels. Source code is available at https://github.com/1980x/ABAW2022DMACS.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge