Bahador Rashidi

Mutual Information Analysis in Multimodal Learning Systems

May 21, 2024

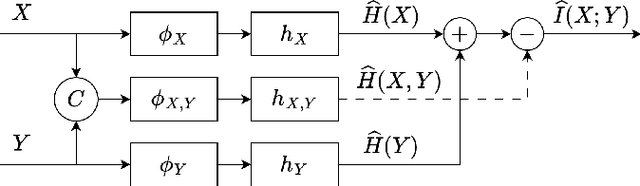

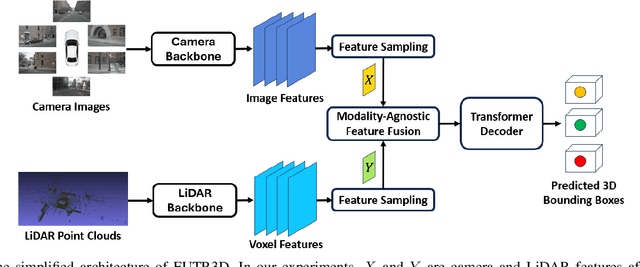

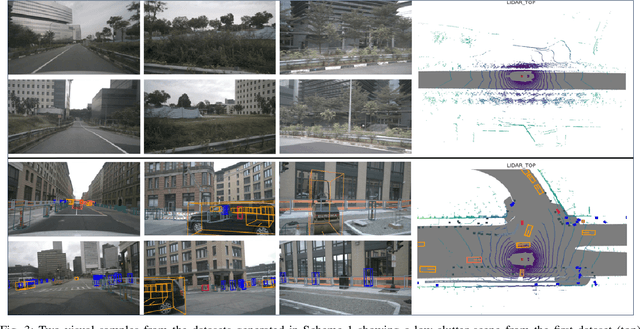

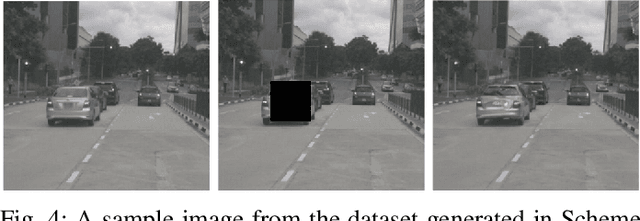

Abstract: In recent years, applications of multimodal signal processing and analysis have grown significantly, driven largely by the wider availability of multimodal datasets and rapid progress in multimodal learning systems. Well-known examples include autonomous vehicles, audiovisual generative systems, and vision-language systems. Such systems integrate multiple signal modalities, such as text, speech, images, video, and LiDAR, to perform various tasks. A key issue in understanding such systems is the relationship between the modalities and how that relationship affects task performance. In this paper, we employ the concept of mutual information (MI) to gain insight into this issue. Taking advantage of recent progress in entropy modeling and estimation, we develop a system called InfoMeter to estimate MI between modalities in a multimodal learning system. We then apply InfoMeter to analyze a multimodal 3D object detection system on a large-scale dataset for autonomous driving. Our experiments on this system suggest that lower MI between modalities is beneficial for detection accuracy. This insight may help guide the development of future multimodal learning systems.
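For readers unfamiliar with the quantity, MI can be written in terms of entropies as I(X;Y) = H(X) + H(Y) - H(X,Y). The sketch below illustrates that identity on a small discrete joint distribution. It is only an illustration of the definition, not InfoMeter itself: the abstract does not describe how InfoMeter estimates the entropy terms, and the function names `entropy_bits` and `mutual_information_bits` are hypothetical.

```python
import math


def entropy_bits(probs):
    """Shannon entropy in bits of a discrete distribution (zero entries are skipped)."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def mutual_information_bits(joint):
    """MI in bits from a joint table, using I(X;Y) = H(X) + H(Y) - H(X,Y).

    `joint` is a list of rows of non-negative weights; it is normalized here
    so that the entries sum to 1.
    """
    total = sum(sum(row) for row in joint)
    joint = [[v / total for v in row] for row in joint]
    px = [sum(row) for row in joint]            # marginal of X (row sums)
    py = [sum(col) for col in zip(*joint)]      # marginal of Y (column sums)
    pxy = [v for row in joint for v in row]     # flattened joint distribution
    return entropy_bits(px) + entropy_bits(py) - entropy_bits(pxy)


# Two toy "modalities": statistically independent vs. exact copies of each other.
independent = [[0.25, 0.25], [0.25, 0.25]]
identical = [[0.5, 0.0], [0.0, 0.5]]

mi_independent = mutual_information_bits(independent)
mi_identical = mutual_information_bits(identical)
```

In this toy setting, independent variables share no information, while identical binary variables share one full bit. Real modalities such as images and LiDAR are high-dimensional and continuous, so the entropy terms have to be estimated rather than read off a table.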
