Babatunji Omoniwa

Density-Aware Reinforcement Learning to Optimise Energy Efficiency in UAV-Assisted Networks

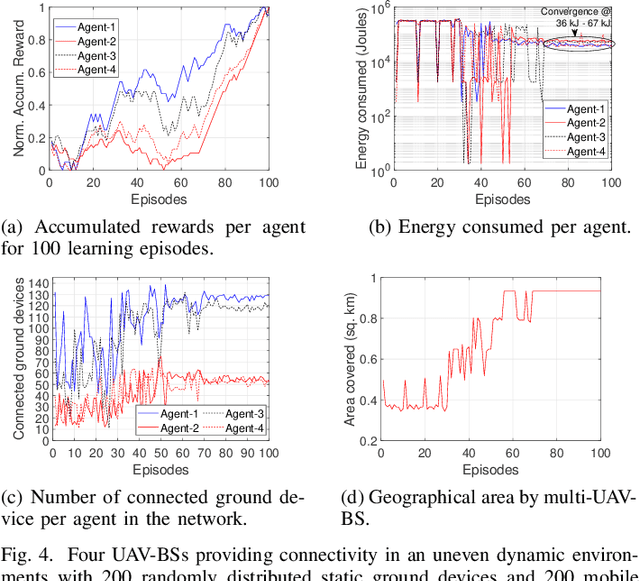

Jun 14, 2023

Abstract: Unmanned aerial vehicles (UAVs) serving as aerial base stations can be deployed to provide wireless connectivity to mobile users, such as vehicles. However, the density of vehicles on roads often varies spatially and temporally, primarily due to mobility and traffic situations in a geographical area, making it difficult to provide ubiquitous service. Moreover, as energy-constrained UAVs hover in the sky while serving mobile users, they may face interference from nearby UAV cells or other access points sharing the same frequency band, which impacts the system's energy efficiency (EE). Recent multi-agent reinforcement learning (MARL) approaches applied to optimise user coverage worked well with reasonably even densities but might not perform as well with uneven user distributions, i.e., in urban road networks with an uneven concentration of vehicles. In this work, we propose a density-aware communication-enabled multi-agent decentralised double deep Q-network (DACEMAD-DDQN) approach that maximises the total system's EE by jointly optimising the trajectory of each UAV, the number of connected users, and the UAVs' energy consumption while keeping track of dense and uneven user distributions. Our approach outperforms state-of-the-art MARL approaches in terms of EE by as much as 65% to 85%.

Optimising Energy Efficiency in UAV-Assisted Networks using Deep Reinforcement Learning

Apr 04, 2022

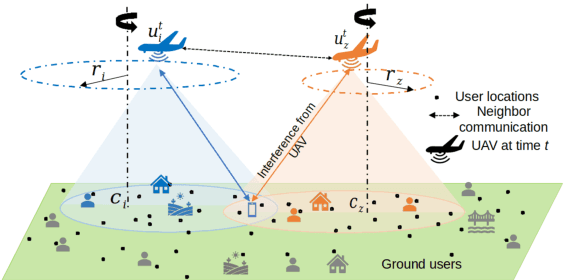

Abstract: In this letter, we study the energy efficiency (EE) optimisation of unmanned aerial vehicles (UAVs) providing wireless coverage to static and mobile ground users. Recent multi-agent reinforcement learning approaches optimise the system's EE using a 2D trajectory design, neglecting interference from nearby UAV cells. We aim to maximise the system's EE by jointly optimising each UAV's 3D trajectory, number of connected users, and the energy consumed, while accounting for interference. Thus, we propose a cooperative Multi-Agent Decentralised Double Deep Q-Network (MAD-DDQN) approach. Our approach outperforms existing baselines in terms of EE by as much as 55% to 80%.
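The abstract names a double deep Q-network but gives no implementation details. As a rough illustration only, the sketch below shows the standard double DQN target computation, in which the online network selects the next action and the target network evaluates it. The function name `double_dqn_target`, its parameters, and the example numbers are hypothetical and are not taken from the paper.

```python
from typing import Sequence


def double_dqn_target(
    reward: float,
    next_q_online: Sequence[float],
    next_q_target: Sequence[float],
    gamma: float = 0.99,
    done: bool = False,
) -> float:
    """Standard double DQN target for one transition (illustrative sketch).

    next_q_online: Q-values of the next state from the online network.
    next_q_target: Q-values of the next state from the target network.
    """
    if done:
        # No bootstrapping from a terminal state.
        return reward
    # The online network picks the action ...
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    # ... and the target network scores that action.
    return reward + gamma * next_q_target[best_action]


# Hypothetical numbers: the online network prefers action 1,
# so the target network's value for action 1 (0.4) is used.
target = double_dqn_target(1.0, [0.2, 0.9, 0.5], [0.3, 0.4, 0.8], gamma=0.5)
terminal_target = double_dqn_target(1.0, [0.2, 0.9, 0.5], [0.3, 0.4, 0.8], done=True)
```

Decoupling action selection from action evaluation in this way is the usual motivation for double DQN, since it tends to reduce the overestimation that a single max over one network can introduce.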

Multi-Agent Deep Reinforcement Learning For Optimising Energy Efficiency of Fixed-Wing UAV Cellular Access Points

Nov 03, 2021

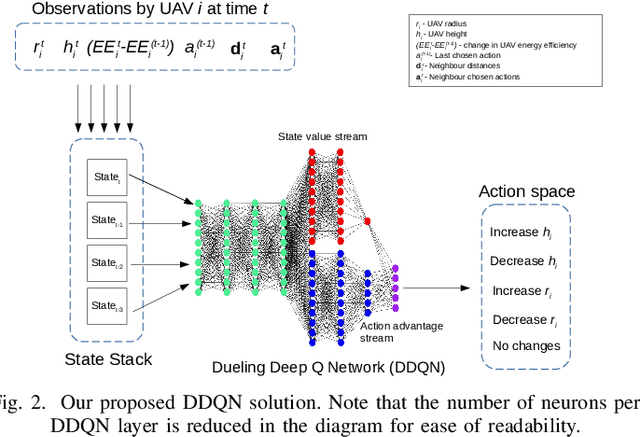

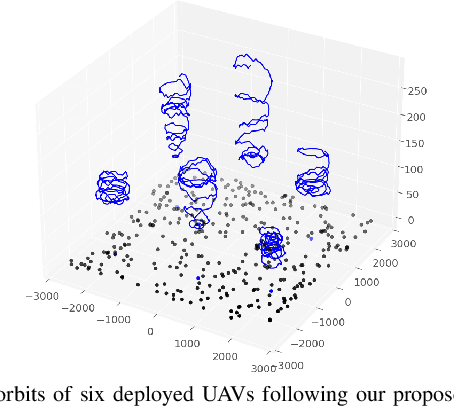

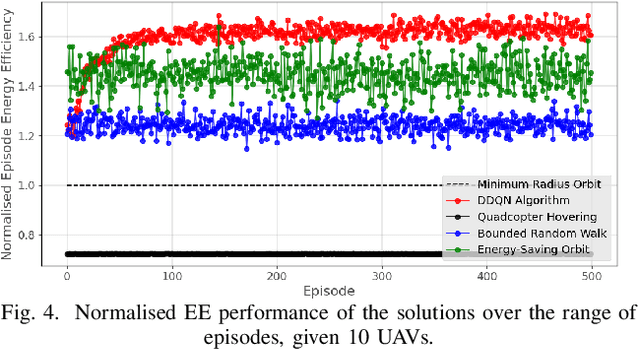

Abstract: Unmanned Aerial Vehicles (UAVs) promise to become an intrinsic part of next generation communications, as they can be deployed to provide wireless connectivity to ground users to supplement existing terrestrial networks. The majority of the existing research into the use of UAV access points for cellular coverage considers rotary-wing UAV designs (i.e. quadcopters). However, we expect fixed-wing UAVs to be more appropriate for connectivity purposes in scenarios where long flight times are necessary (such as for rural coverage), as fixed-wing UAVs rely on a more energy-efficient form of flight when compared to the rotary-wing design. As fixed-wing UAVs are typically incapable of hovering in place, their deployment optimisation involves optimising their individual flight trajectories in a way that allows them to deliver high quality service to the ground users in an energy-efficient manner. In this paper, we propose a multi-agent deep reinforcement learning approach to optimise the energy efficiency of fixed-wing UAV cellular access points while still allowing them to deliver high-quality service to users on the ground. In our decentralized approach, each UAV is equipped with a Dueling Deep Q-Network (DDQN) agent which can adjust the 3D trajectory of the UAV over a series of timesteps. By coordinating with their neighbours, the UAVs adjust their individual flight trajectories in a manner that optimises the total system energy efficiency. We benchmark the performance of our approach against a series of heuristic trajectory planning strategies, and demonstrate that our method can improve the system energy efficiency by as much as 70%.
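The abstract mentions a Dueling Deep Q-Network agent without describing its architecture. As a minimal sketch under that caveat, the snippet below shows the commonly used dueling aggregation step, where a scalar state value and per-action advantages are combined into Q-values by subtracting the mean advantage. The function name `dueling_q_values` and the inputs are hypothetical; in a real agent these quantities would come from two network heads.

```python
from typing import List, Sequence


def dueling_q_values(state_value: float, advantages: Sequence[float]) -> List[float]:
    """Combine V(s) and A(s, a) into Q(s, a) using mean-advantage centring."""
    mean_advantage = sum(advantages) / len(advantages)
    # Q(s, a) = V(s) + A(s, a) - mean over actions of A(s, a)
    return [state_value + a - mean_advantage for a in advantages]


# Hypothetical head outputs for a state with three candidate actions.
q_values = dueling_q_values(1.0, [0.0, 1.0, 2.0])
greedy_action = max(range(len(q_values)), key=lambda a: q_values[a])
```

Centring the advantages keeps the value and advantage streams identifiable, which is why this form is typically preferred over a plain sum.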

Energy-aware placement optimization of UAV base stations via decentralized multi-agent Q-learning

Jun 01, 2021

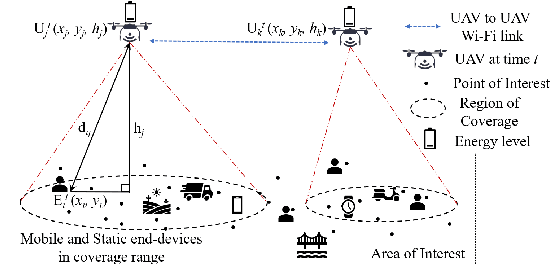

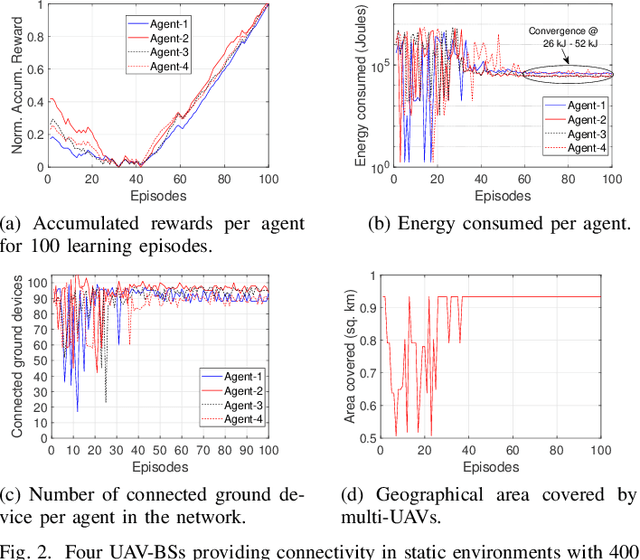

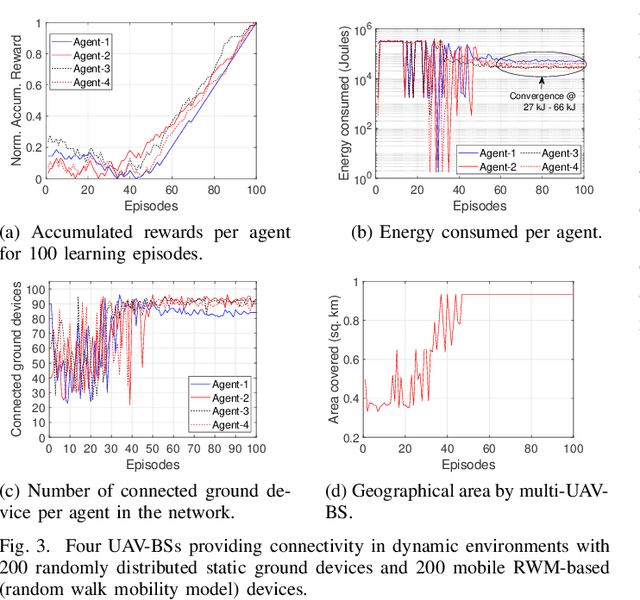

Abstract: Unmanned aerial vehicles serving as aerial base stations (UAV-BSs) can be deployed to provide wireless connectivity to ground devices in events of increased network demand, points-of-failure in existing infrastructure, or disasters. However, it is challenging to conserve the energy of UAVs during prolonged coverage tasks, considering their limited on-board battery capacity. Reinforcement learning (RL)-based approaches have previously been used to improve the energy utilization of multiple UAVs. However, these approaches assume a central cloud controller with complete knowledge of the end-devices' locations, i.e., the controller periodically scans and sends updates for UAV decision-making. This assumption is impractical in dynamic network environments with mobile ground devices. To address this problem, we propose a decentralized Q-learning approach, where each UAV-BS is equipped with an autonomous agent that maximizes the connectivity to ground devices while improving its energy utilization. Experimental results show that the proposed design significantly outperforms the centralized approaches in jointly maximizing the number of connected ground devices and the energy utilization of the UAV-BSs.
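To make the idea of one independent Q-learning agent per UAV-BS more concrete, here is a minimal tabular sketch. It shows only the textbook Q-learning update rule; the class name `QAgent`, the state and action labels, the reward value, and the hyperparameters are all assumptions for illustration and do not reflect the paper's actual state space or reward design.

```python
from collections import defaultdict
from typing import Hashable, Iterable


class QAgent:
    """One tabular Q-learning agent (hypothetical; one instance per UAV-BS)."""

    def __init__(self, actions: Iterable[Hashable], alpha: float = 0.1, gamma: float = 0.9):
        self.actions = list(actions)
        self.alpha = alpha  # learning rate
        self.gamma = gamma  # discount factor
        self.q = defaultdict(float)  # maps (state, action) to a value, default 0.0

    def update(self, state, action, reward: float, next_state) -> float:
        """Apply one Q-learning update and return the new Q(state, action)."""
        best_next = max(self.q[(next_state, a)] for a in self.actions)
        td_target = reward + self.gamma * best_next
        self.q[(state, action)] += self.alpha * (td_target - self.q[(state, action)])
        return self.q[(state, action)]


# Each agent keeps its own table and learns only from its local feedback.
agent = QAgent(actions=["stay", "move"], alpha=0.5, gamma=0.9)
new_value = agent.update("s0", "move", reward=2.0, next_state="s1")
```

Because each agent holds its own table, no central controller needs to know every device's location, which is the property the abstract emphasises.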

A reinforcement learning approach to improve communication performance and energy utilization in fog-based IoT

Jun 01, 2021

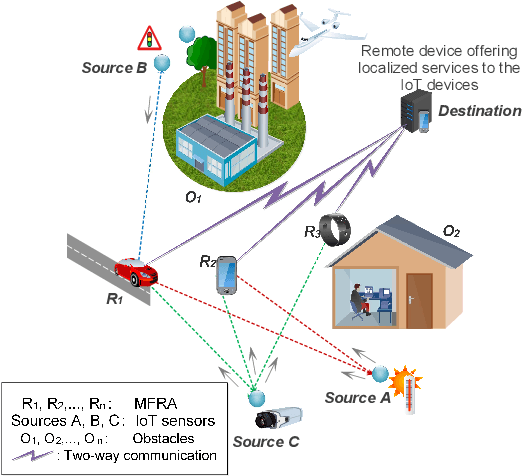

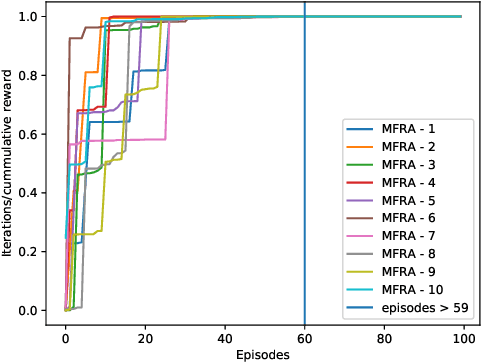

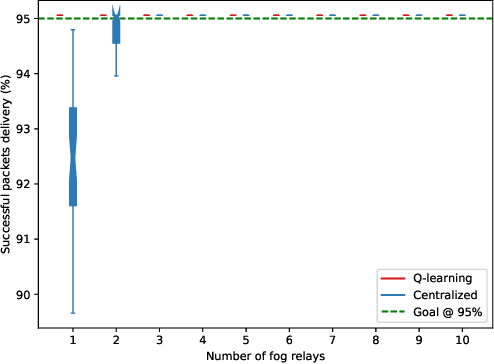

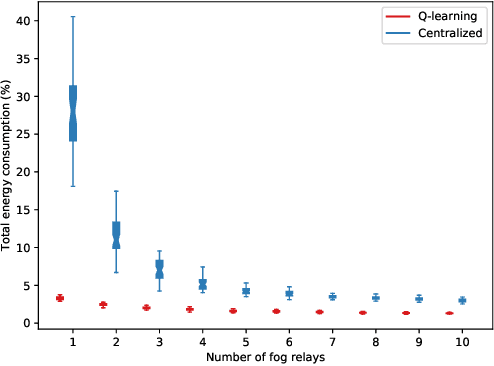

Abstract: Recent research has shown the potential of using available mobile fog devices (such as smartphones, drones, domestic and industrial robots) as relays to minimize communication outages between sensors and destination devices, where localized Internet-of-Things services (e.g., manufacturing process control, health and security monitoring) are delivered. However, these mobile relays deplete energy when they move and transmit to distant destinations. As such, power-control mechanisms and intelligent mobility of the relay devices are critical in improving communication performance and energy utilization. In this paper, we propose a Q-learning-based decentralized approach where each mobile fog relay agent (MFRA) is controlled by an autonomous agent which uses reinforcement learning to simultaneously improve communication performance and energy utilization. Each autonomous agent learns, based on the feedback from the destination and its own energy levels, whether to remain active and forward the message or become passive for that transmission phase. We evaluate the approach by comparing it with the centralized approach, and observe that with fewer MFRAs, our approach is able to ensure reliable delivery of data and reduce overall energy cost by 56.76% to 88.03%.

Using Artificial Neural Network Techniques for Prediction of Electric Energy Consumption

Dec 06, 2014

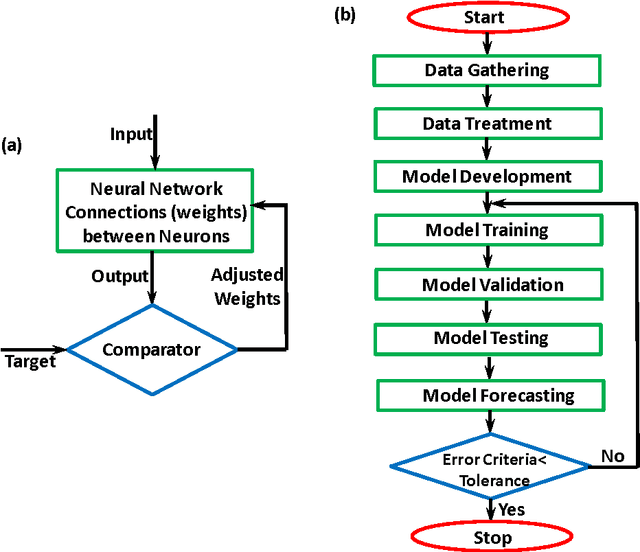

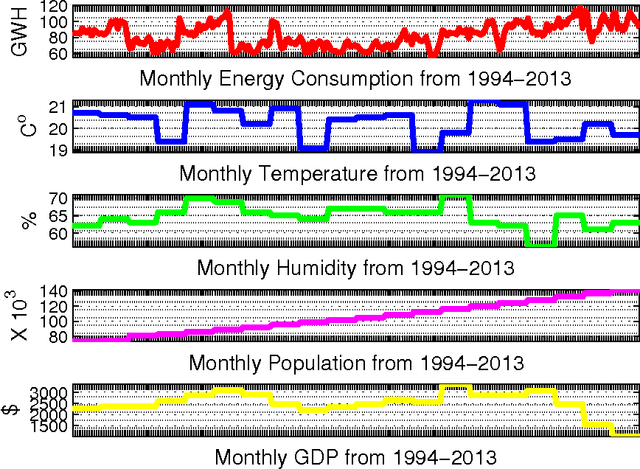

Abstract: Due to imprecision and uncertainties in predicting real world problems, artificial neural network (ANN) techniques have become increasingly useful for modeling and optimization. This paper presents an artificial neural network approach for forecasting electric energy consumption. For effective planning and operation of power systems, optimal forecasting tools are needed for energy operators to maximize profit and also to provide maximum satisfaction to energy consumers. Monthly data for electric energy consumed in the Gaza Strip was collected from 1994 to 2013. The proposed model was trained on this data and validated using 2-fold and K-fold cross-validation techniques. The model has been tested with actual energy consumption data and yields satisfactory performance.
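The abstract refers to 2-fold and K-fold cross-validation without further detail. The sketch below illustrates how K-fold splits are generally formed: the sample indices are partitioned into K contiguous folds, and each fold serves once as the held-out set. The function name `k_fold_indices` and the sample count are hypothetical, and whether the paper shuffled its data or kept it in time order is not stated.

```python
from typing import List, Tuple


def k_fold_indices(n_samples: int, k: int) -> List[Tuple[List[int], List[int]]]:
    """Return k (train_indices, test_indices) pairs over range(n_samples)."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # Spread the remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds = []
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        folds.append((train, test))
        start += size
    return folds


# Hypothetical example: 10 samples split into 3 folds; k=2 gives 2-fold validation.
folds = k_fold_indices(10, 3)
two_fold = k_fold_indices(10, 2)
```

A model would be trained on each `train` list and scored on the matching `test` list, with the scores averaged across folds.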
